I am referring to a resistor in series with the gate. It protects the gate from transients and slows the edges to reduce EMI. Preferences vary: some people use 100 ohms, some use 1k to 10k, and others use 10k or more. The right choice depends on your system speed and how fast you need to switch the gate.

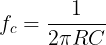

The series resistor and the gate capacitance form a low-pass filter that slows the edges down, so many designers choose a resistor that allows edges fast enough for the system switching speed (at that node), but not so fast that they cause EMI issues later. If you assume the gate capacitance is 5 pF to 10 pF, the cut-off frequency f = 1/(2πRC) works out as follows for various choices of gate resistor:

| R | 5 pF | 10 pF |
| --- | --- | --- |
| 100 Ω | 320 MHz | 160 MHz |
| 1 kΩ | 32 MHz | 16 MHz |
| 4.7 kΩ | 6.8 MHz | 3.4 MHz |
| 10 kΩ | 3.2 MHz | 1.6 MHz |
| 47 kΩ | 680 kHz | 340 kHz |
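
If you want to check these numbers or try other values, here is a minimal Python sketch. It assumes the gate behaves like a simple first-order RC low-pass, which ignores Miller capacitance and driver output impedance. The function names are my own, and the 10%-90% rise time (about 2.2·RC) is an extra figure I added because it is often easier to compare against your edge-rate requirement than a cut-off frequency.

```python
import math

def cutoff_hz(r_ohms, c_farads):
    """-3 dB corner frequency of a first-order RC low-pass: 1 / (2*pi*R*C)."""
    return 1.0 / (2.0 * math.pi * r_ohms * c_farads)

def rise_time_s(r_ohms, c_farads):
    """10%-90% rise time of a first-order RC step response: ln(9)*R*C (about 2.2*R*C)."""
    return math.log(9.0) * r_ohms * c_farads

# Assumed gate capacitances from the text above; check your part's datasheet.
CAPS_F = (5e-12, 10e-12)
RESISTORS_OHMS = (100, 1e3, 4.7e3, 10e3, 47e3)

for r in RESISTORS_OHMS:
    cells = []
    for c in CAPS_F:
        f_mhz = cutoff_hz(r, c) / 1e6
        t_ns = rise_time_s(r, c) * 1e9
        cells.append(f"{c * 1e12:.0f} pF: {f_mhz:8.2f} MHz, t_r {t_ns:7.1f} ns")
    print(f"R = {r:>7.0f} ohm | " + " | ".join(cells))
```

The table values are these results rounded to two significant figures. A real MOSFET's effective gate capacitance changes during switching, so treat the output as a ballpark rather than a prediction.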

Lower values of the gate series resistor let the gate switch faster. Since the 12V signal often comes from an off-board, external event, I expected its edges to be relatively slow, so I said 10k to 47k would be good. If you are switching faster than that, choose a gate resistor that lets the edges be fast enough for your needs, but not so fast that they cause unnecessary EMI.

The series resistor also limits the current surge into the gate while the gate capacitance charges. So if your 12V source can only provide 10 mA with a 50 mA peak, choose a gate resistor that keeps the gate charging current below some reasonable value, like 25 mA. In this case, a 10k series gate resistor limits the current to 1.2 mA max (12V/10k). A 1k resistor gives 12 mA, and a 100 ohm resistor gives 120 mA. Those are short transient peak currents, but if your 12V supply can't handle them, you might have problems.
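
The same arithmetic as a rough Python sketch, in case you want to plug in your own supply limits. It assumes the worst case, where the gate capacitance is fully discharged at the instant of the edge, so the peak current is simply V/R. The 25 mA limit is the example figure from above, and the function names are hypothetical.

```python
def peak_gate_current_a(v_drive, r_gate_ohms):
    """Worst-case inrush current at the edge, with the gate capacitance discharged: V / R."""
    return v_drive / r_gate_ohms

def min_gate_resistor_ohms(v_drive, i_limit_a):
    """Smallest series resistor that keeps the peak gate current at or below the limit."""
    return v_drive / i_limit_a

V_DRIVE = 12.0     # drive voltage from the example above
I_LIMIT_A = 25e-3  # assumed acceptable peak current (example value)

for r in (100, 1e3, 10e3):
    i_peak = peak_gate_current_a(V_DRIVE, r)
    verdict = "ok" if i_peak <= I_LIMIT_A else "exceeds limit"
    print(f"R = {r:>6.0f} ohm -> peak {i_peak * 1e3:6.1f} mA ({verdict})")

r_min = min_gate_resistor_ohms(V_DRIVE, I_LIMIT_A)
print(f"Minimum R for a {I_LIMIT_A * 1e3:.0f} mA limit: {r_min:.0f} ohm")
```

With these example numbers, anything from about 480 ohms up stays under the 25 mA limit, so the 1k and 10k choices are fine and the 100 ohm choice is not.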