Yup, that is the most extreme example. Things like viewing angle, color reproduction, dynamic range, and so on are much more important.

Besides, YouTube videos are quite heavily compressed. The effective resolution is somewhat less than the nominal resolution.

Just as a personal hobby, I've done some research on psychovisual perception, specifically into how to control the information density, and the information conveyed to a human observer, when describing atomic structures. For example, when you have a model of a molecule, or say some nanotubes and clusters in some interesting configuration, doing a "photorealistic" rendering -- as if the atoms were marbles, often with interatomic bonds drawn as sticks -- can give utterly the wrong intuition as to what is important in the picture. It is as if some people saw a completely different thing than others. You need explanatory text, and you need to tell the viewers what to look for: completely the opposite of what the old "a picture says more than a thousand words" proverb states!

The best-working solution I found was simplification, and leveraging cartoon imagery: cel shading, outlines. In particular, varying outline thickness to establish the depth difference at an edge (so that an edge with the background close to the edge is thin, but an edge with the background far away is thick) works better for molecular models than e.g. photorealistic shadows do. (A combination of cel shading, simple penumbra shadows, and dark edge thickness control seems to work best, although my human test ~~victim~~ subject sample is tiny.)

(There is no physical reason why that is so, though. My guess is that it helps the visual centers in the brain separate the visual entities better, and acts as a "visual annotation" helper for the human brain. It is similar to, say, speed lines in comics, which probably work by "hinting" to the brain that "this part of the background is perceived as blurry, because there is motion here".)
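To make the outline-thickness idea concrete, here is a minimal sketch in Python. Everything specific in it is an assumption for illustration: the function names `outline_thickness` and `edge_outlines`, the pixel limits, the `full_gap` distance at which the outline reaches full width, and the linear mapping itself. It only shows the principle (small depth gap behind an edge gives a thin outline, large gap gives a thick one), not the exact method used for the renderings described above.

```python
def outline_thickness(depth_near, depth_far, min_px=1.0, max_px=6.0, full_gap=10.0):
    """Map the depth gap across an edge to an outline width in pixels.

    depth_near: depth of the object that owns the edge
    depth_far:  depth of whatever is visible just behind the edge
    full_gap:   (assumed) depth gap at which the outline reaches max_px
    """
    gap = max(0.0, depth_far - depth_near)
    t = min(gap / full_gap, 1.0)          # clamp to the range 0..1
    return min_px + t * (max_px - min_px)


def edge_outlines(depth_row, threshold=0.5):
    """Scan one row of a depth buffer and return (index, thickness) pairs
    for every neighboring-pixel depth jump larger than `threshold`."""
    outlines = []
    for i in range(len(depth_row) - 1):
        a, b = depth_row[i], depth_row[i + 1]
        if abs(a - b) > threshold:
            outlines.append((i, outline_thickness(min(a, b), max(a, b))))
    return outlines


# One scanline: an atom at depth 2, a neighbor just behind it at depth 3,
# and then the far background at depth 20.
row = [2.0, 2.0, 3.0, 3.0, 20.0]
for index, width in edge_outlines(row):
    print(f"edge after pixel {index}: outline {width:.1f} px")
```

The edge between the two nearby atoms gets a thin outline, and the edge against the distant background gets the thickest one. In a real renderer the same mapping would be applied per pixel along silhouette edges, and a non-linear mapping may well look better.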

For photographs and video, you almost never get perfectly horizontal or vertical edges with a full 100% intensity difference between neighboring pixels. This is the main reason why DCT/iDCT compression methods work so well. For video, a much better test is something like how small a text (compared to the size of the display) you can read at a standard viewing distance, or how narrow a moving and rotating wire or string you can perceive on top of different backgrounds. It is also possible that DCT compression followed by downsampling and then recompression yields better visual quality at the same bitrate than downsampling alone, although I haven't seen any research into that; it's just a personal guess.
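The point about neighboring-pixel contrast can be checked with a small experiment. The sketch below is plain Python with a textbook orthonormal 1-D DCT-II on an 8-sample row; the helper names `dct2` and `low_freq_share` and the two test rows are my own assumptions, and a real codec works on 2-D blocks with quantization on top. It compares how much of the non-DC energy lands in the lowest-frequency coefficients for a smooth ramp versus a row that alternates between full black and full white.

```python
import math


def dct2(x):
    """Orthonormal 1-D DCT-II of a list of samples."""
    n = len(x)
    out = []
    for k in range(n):
        s = sum(x[i] * math.cos(math.pi * (2 * i + 1) * k / (2 * n))
                for i in range(n))
        scale = math.sqrt(1.0 / n) if k == 0 else math.sqrt(2.0 / n)
        out.append(scale * s)
    return out


def low_freq_share(x, keep):
    """Fraction of the non-DC energy held by the `keep` lowest AC coefficients."""
    ac_energy = [c * c for c in dct2(x)[1:]]
    total = sum(ac_energy)
    return sum(ac_energy[:keep]) / total if total else 1.0


ramp = [32 * i for i in range(8)]   # smooth gradient, typical of photographs
alternating = [0, 255] * 4          # 100% contrast between every pixel pair

print("ramp, lowest 1 AC coefficient:       ", round(low_freq_share(ramp, 1), 3))
print("alternating, lowest 3 AC coefficients:", round(low_freq_share(alternating, 3), 3))
```

The ramp puts nearly all of its non-DC energy into the single lowest AC coefficient, so the rest can be discarded or coarsely quantized with little visible loss. The alternating row leaves most of its energy in the highest frequencies, which is exactly the kind of content that DCT-based codecs handle poorly.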

Sharpness in itself is not an end goal. As long as there have been movies, filtering ("smoothing") human faces has been used as a cinematic effect to bring an ethereal, "more beautiful than nature" quality to the visuals. If you can perceive more in the face of a person on the display than you could in real life (where the face covers the same solid angle in your vision), you get another "uncanny valley" effect; you lose the immersion. You really only need sharpness for details humans will try to perceive.

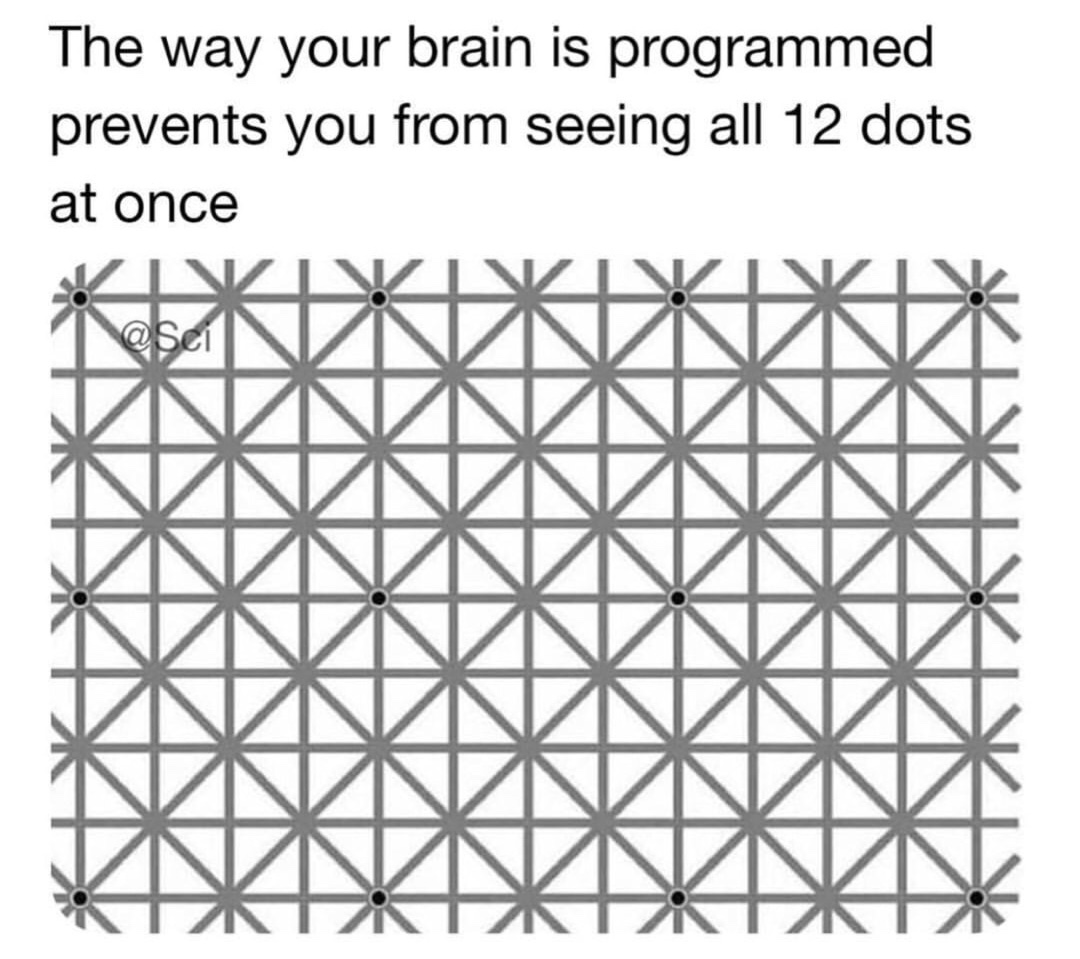

Finally, the human eye is not a uniform sensor (it is sharpest in a small region, with relatively poor resolution elsewhere, more suited for motion detection), and even when we *see* something, we may *perceive* it differently. It is trickier than you might think. Here's an example: