-

M.2 can carry USB, SATA (1, 2 or 3), PCIe (from x1 to x4), as well as I2C and some extras. M.2 is a physical connector, not a protocol.

Even PCIe SSDs can be either AHCI or NVMe. The AHCI ones are slower than the NVMe ones, and rare today.

You can feel the difference between a SATA SSD and an NVMe drive when it is the OS/programs drive. One of my laptops supports both, and the NVMe drive is a bit faster and snappier than the SATA SSD.

Why is a modular PSU more efficient than a non modular one?

The cables can come out, but that doesn't affect the PSU's efficiency rating. If you compare a Bronze modular with a Platinum non modular, the latter will have better efficiency.
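To put rough numbers on that, here is a small sketch of wall power draw for the same load under two 80 PLUS tiers. The efficiency figures are the 80 PLUS minimums at 50% load for 115 V units (85% Bronze, 92% Platinum); the 400 W load and the function name are made up for illustration. Note that whether the cables detach is not an input anywhere.

```python
# Minimum efficiency at 50% load required for 80 PLUS certification (115 V).
# Modular vs non modular cabling does not appear in this table at all.
EFFICIENCY_AT_HALF_LOAD = {"bronze": 0.85, "platinum": 0.92}


def wall_power_watts(dc_load_watts, rating):
    """AC power drawn from the wall to deliver dc_load_watts to the components."""
    return dc_load_watts / EFFICIENCY_AT_HALF_LOAD[rating]


load = 400  # hypothetical DC load in watts
bronze = wall_power_watts(load, "bronze")
platinum = wall_power_watts(load, "platinum")
print(f"Bronze:     {bronze:.1f} W from the wall")
print(f"Platinum:   {platinum:.1f} W from the wall")
print(f"Difference: {bronze - platinum:.1f} W wasted as extra heat")
```

Real units usually beat the certification minimum, so treat this as a lower bound on efficiency rather than a measurement.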

I wouldn't use an SSD as a cache. If you are going the route of X99 CPUs, throw RAM into it and use a RAM disk. -

The efficiency curve for power supplies is not that sharp and power derating the power supply results in higher reliability. I am getting really tired of replacing improperly derated capacitors in PC power supplies.

-

Why is a modular PSU more efficient than a non modular one?

The cables can come out, but that doesn't affect the PSU's efficiency rating. If you compare a Bronze modular with a Platinum non modular, the latter will have better efficiency.

I wouldn't use an SSD as a cache. If you are going the route of X99 CPUs, throw RAM into it and use a RAM disk.

Using SSD as a disk cache is all about reliable persistence. Using a RAM drive as disk read/write cache is not reliable, pointless actually, when you want to persist data.

RAM is overall more reliable (especially ECC), faster, and survives more reads/writes. But that does not fulfill the requirements of a read/write cache for persisting data. RAM is temporary, a disk cache is NOT.

How many servers have you seen that use RAM drives as read/write cache for databases? I bet 0! They use NVMe drives in a RAID configuration to speed up reads/writes of the newest or most recently accessed data.

Modular PSUs have multiple power rails, and the best ones can intelligently enable and disable rails, and also switch/combine them on the fly, depending on the load on each output cable. This improves overall efficiency. No ordinary PSU can compete with that.

Best part of modular PSUs is that you can remove all extra cables and make the case more tidy and have better airflow to all circuits. -

The efficiency curve for power supplies is not that sharp and power derating the power supply results in higher reliability. I am getting really tired of replacing improperly derated capacitors in PC power supplies.

-

Using SSD as a disk cache is all about reliable persistence. Using a RAM drive as disk read/write cache is not reliable, pointless actually, when you want to persist data.

+1. Yet I still see people recommending this (as in, "I just bought a workstation with 96 GB RAM, I'm going to use 32 GB to make a RAM disk and put my swap file there"). It doesn't make sense, because all modern operating systems already do this with unused RAM (i.e., use it to cache disk reads). It's taken to an extreme with certain filesystems like ZFS. -

Just to make this Ramdisk or SSD write cache a bit more clear....

Scenario:

I produce 2 videos on 2 separate computers, computer A and computer B. On A I use a RAM disk, on B I use an SSD cache. Both videos are the same: batch produced from 3D software into 4K video, multiple hours long, first rendered into raw video and then encoded into MPEG-4 (pick any standard and container you want, it does not matter).

For the best quality and compression ratio, with the right filters added, it takes me 3 full encoding passes before the compressed video is in its final state.

Each pass takes about 8 hours to complete, and the rendering takes about 6 days.

Now what happens after 6 days and 22 hours of rendering and encoding (6h into the 3rd encoding pass) is that we have a power loss. All electricity GONE!

Question:

1. What happened to the data in the computer with the RAM disk during the power loss?

2. Could all data fit in the RAM disk?

3. What happened to the data in the computer with the SSD cache?

4. Could the data fit into the disk array?

5. Where can I expect to continue my rendering/encoding process, on computer A and computer B, after the power comes back on and computers have rebooted?

6. How many hours difference in rendering/encoding time will I have between A and B?
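For questions 5 and 6, the arithmetic can be sketched as below. This is only a rough model under my own assumptions: everything on the RAM disk is lost on power failure, the SSD-cached machine keeps the output of every fully completed stage, and the encoder cannot resume in the middle of a pass. The function names are made up for illustration.

```python
RENDER_HOURS = 6 * 24          # rendering takes about 6 days
PASS_HOURS = 8                 # each encoding pass takes about 8 hours
PASSES = 3
OUTAGE_AT_HOURS = 6 * 24 + 22  # power loss 6 days and 22 hours in


def completed_stage_hours(elapsed):
    """Hours of work that belong to stages fully finished by `elapsed`."""
    stages = [RENDER_HOURS] + [PASS_HOURS] * PASSES
    done = 0
    for stage in stages:
        if done + stage > elapsed:
            break  # this stage was still running when the power went out
        done += stage
    return done


def lost_hours(elapsed, persistent):
    """Work that has to be redone after the outage."""
    if persistent:
        # Assumption: only the interrupted stage has to be restarted.
        return elapsed - completed_stage_hours(elapsed)
    # Assumption: volatile storage loses every intermediate result.
    return elapsed


lost_a = lost_hours(OUTAGE_AT_HOURS, persistent=False)  # computer A, RAM disk
lost_b = lost_hours(OUTAGE_AT_HOURS, persistent=True)   # computer B, SSD cache
print(f"A redoes {lost_a} h, B redoes {lost_b} h, difference {lost_a - lost_b} h")
```

Under these assumptions A starts again from the very beginning, while B only repeats the interrupted third pass.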

Edit: added numbers to the questions for better separation/identification -

Thank you for all of your detailed posts. Sorry, I have not replied in a little while, I was busy.

Slicendice, thank you for that detailed list that you put up. I will be putting up a list of my current selection based on everything that has been suggested to me, tomorrow.

The quad CPU is definitely not necessary anymore, so I will be sticking to a dual CPU instead. I think it should be enough. Thank you for clarifying that. The 10 core thing came to mind after I had already posted.

Does anybody think that this motherboard is a smart choice?

https://www.supermicro.nl/products/motherboard/Xeon/C600/X10DRX.cfm

Like I said, tomorrow I'll be posting a full list of the components I have compiled so far. I have also finished the idea for the custom built server rack that I will be building the computer into, and I would like to run it by everyone. I want to make sure I will have all of the proper cooling equipment.

Thank you again, everyone! -

You're welcome!

Can you please put together a short list of alternative dual Xeon motherboards including approximate price and links to specs? -

Does anybody think that this motherboard is a smart choice?

https://www.supermicro.nl/products/motherboard/Xeon/C600/X10DRX.cfm

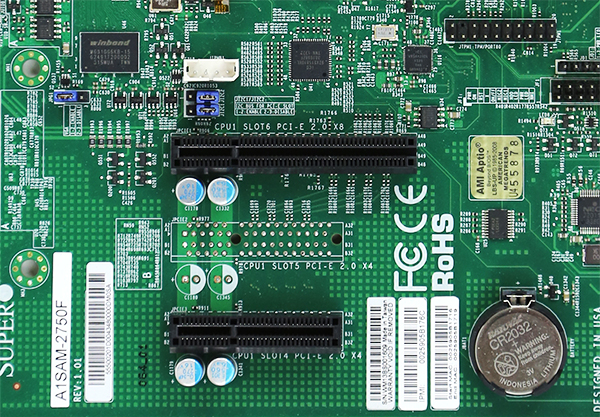

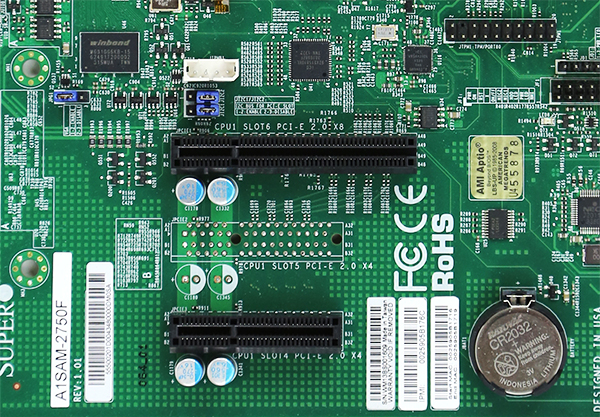

PCIe slots are x8. This will slow down most GPUs which have x16. It's also a proprietary form factor, which means it will only work with a specific Supermicro chassis.

Better option would be to look under their barebones and pick a system that meets your needs. For example, the 7048A-T, a tower/rack convertible, advertised as quiet - this is important because most rackmount servers sound like mini jet engines. This is why I was surprised you wanted a rackmount as a workstation. Need more GPUs? Consider a 7048GR-TR with support for up to four, with a 2kW PSU.

Supermicro boards have a reputation of being somewhat picky about their memory modules. Sticking to the recommended list will avoid headaches. -

Yes I got a bit worried about the Supermicro choice too, this is why I would like to see a list of possible alternatives.

-

Does anybody think that this motherboard is a smart choice?

https://www.supermicro.nl/products/motherboard/Xeon/C600/X10DRX.cfm

PCIe slots are x8. This will slow down most GPUs which have x16.

Slow down? Good luck even installing them. -

Does anybody think that this motherboard is a smart choice?

https://www.supermicro.nl/products/motherboard/Xeon/C600/X10DRX.cfm

PCIe slots are x8. This will slow down most GPUs which have x16.

Slow down? Good luck even installing them.

I'll be surprised if any GPU doesn't work at lower PCIe lanes. Usually a x16 GPU can happily work on even x1 PCIe link.

Performance penalty is not severe either. In fact, most GPUs don't really need x16 speed at all unless running some bandwidth intensive operations such as GPGPU with badly optimized host memory accessing pattern.

When running graphics tasks such as gaming, x8 has no perceptible difference compared to x16, according to LinusTechTips' benchmark (search "4 way SLI").

......

You cannot physically install them in the slots. -

You cannot physically install them in the slots.

There are x16 physical slots with only x8 or even x4 signal connection, commonly seen on mobos. In fact, consumer grade Intel chips only have 16 PCIe lanes, so to have a dual GPU configuration, they must operate at x8 mode, let alone 3-way SLI configuration, which usually has x8/x4/x4 or x4/x4/x4 configuration if NVMe SSD is used.

Irrelevant. Look at the board we are discussing.

There are advanced enthusiast level mobos with multiple true x16 slots supported by a PLX chip, which is essentially a PCIe hub that allows "on demand" bandwidth allocation, instead of fixed or boot-time selectable allocation implemented on cheap boards.

Irrelevant.

Also, keep in mind that you can always hacksaw (pun intended) your PCIe slot to make it a semi-open slot to accommodate any length of cards if you wish. Though ID pin will be missing, auto link negotiation will still make sure the connection will work at maximum bandwidth.

Somehow I knew you'd bring this up, and if you want to take a hacksaw to a $650 motherboard, more power to you. Nobody else does. -

I did this on my SuperMicro C226 mobo to fit an Intel Xeon and make it coexist with GTX750Ti.

I did similar things (though non destructive, just riser cards) to my AsRock X99 to bring M2 slot to U2 for SSD, and to bring mSATA/mPCIe to PCIe.

If you ever fix any of my computer equipment, I will insist that you DON'T bring the hacksaw. Please leave the hacksaw safely under lock and key at home. (I'm both 100% completely joking, and 100% serious at the same time, if you understand what I mean).

tl;dr

If I had a brand new £10,000 workstation, I would NOT like someone to use a hacksaw on the motherboard to build it (ignoring the initial PCB production, obviously).

EDIT:

Googling the hacksawing: apparently some people who buy servers when they are rather old and not worth very much (e.g. £100) might use the hacksaw method on the graphics card (a cheap one) or the motherboard, so they can put in a powerful gaming graphics card.

But I think an old £100 server used as a spare gaming machine or something is a different matter from a >£5,000?? workstation for serious work, which might need to be returned under warranty. -

You cannot physically install them in the slots.

Yes you can (look closely at the right edge of the x8 slot):

On the motherboard in question, note the large open space below the PCIe slot area. This is to take up any overhang from x16 boards.

I'll be surprised if any GPU doesn't work at lower PCIe lanes. Usually a x16 GPU can happily work on even x1 PCIe link.

You may be right, especially with PCIe gen 3 which can stuff a lot of data down each lane.
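For a feel of how much each lane carries, here is a quick sketch of the theoretical one-direction link bandwidth per PCIe generation. The transfer rates and line encodings (2.5 and 5 GT/s with 8b/10b, 8 GT/s with 128b/130b) are the published figures for gen 1 to 3; the function name is mine, and real throughput is lower once packet overhead is counted.

```python
# (transfer rate in GT/s per lane, line-encoding efficiency) per generation
PCIE_GENERATIONS = {
    1: (2.5, 8 / 10),     # 8b/10b encoding
    2: (5.0, 8 / 10),     # 8b/10b encoding
    3: (8.0, 128 / 130),  # 128b/130b encoding
}


def link_bandwidth_gb_s(generation, lanes):
    """Theoretical bandwidth in GB/s, one direction, before protocol overhead."""
    transfer_rate, efficiency = PCIE_GENERATIONS[generation]
    return transfer_rate * efficiency / 8 * lanes  # 8 bits per byte


for lanes in (1, 4, 8, 16):
    print(f"gen 3 x{lanes}: {link_bandwidth_gb_s(3, lanes):.2f} GB/s")
```

So a gen 3 x8 link has roughly the bandwidth of a gen 2 x16 link, which fits with x8 making little visible difference for graphics work.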

Performance penalty is not severe either. In fact, most GPUs don't really need x16 speed at all unless running some bandwidth intensive operations such as GPGPU with badly optimized host memory accessing pattern.

When running graphics tasks such as gaming, x8 has no perceptible difference compared to x16, according to LinusTechTips' benchmark (search "4 way SLI"). -

As a quick note again, do not worry about the size of the motherboard or what it can fit into. I am going to customize something myself in order to build the PC into it.

I did have some other alternative boards that I posted earlier, but they weren't smart choices, or they were quad CPU boards, which turned out unnecessary. The supermicro decision was a recommendation from Monkeh. That wasn't the suggested board itself, but another supermicro board.

The only other board I was looking at was this one...

https://www.asus.com/us/Motherboards/Z10PED8_WS/

I think it's even worse off than the supermicro one at this point, though.

I'm going to list all of my current pieces. Would someone be willing to help me pick a good motherboard? Again, price is no concern. I just want it to be something future-proofed. Something that I can continue to upgrade further and further in the future.

Here are my components so far...

RAM memory: http://www.crucial.com/usa/en/ct4k32g4lfd424a

Graphics card: https://www.bhphotovideo.com/bnh/controller/home?O=&sku=1207157&gclid=CJ-2wM6UpdECFceIswodEpIAoA&Q=&ap=y&m=Y&c3api=1876%2C92051677442%2C&is=REG&A=details

CPU Processor X2: https://www.bhphotovideo.com/bnh/controller/home?O=&sku=1226971&gclid=CI_okcWSpdECFdeEswodSLcOWQ&is=REG&ap=y&m=Y&c3api=1876%2C92051677442%2C&A=details&Q=

SSD PCIe: http://www.newegg.com/Product/Product.aspx?Item=9SIA12K4ND8252&ignorebbr=1&nm_mc=KNC-GoogleMKP-PC&cm_mmc=KNC-GoogleMKP-PC-_-pla-_-Solid+State+Disk-_-9SIA12K4ND8252&gclid=CKPyxYqPstECFc5LDQodKOEJ6g&gclsrc=aw.ds

I haven't found anything wrong yet, except that I don't have a motherboard yet. These were all taken from and/or based on other users' suggestions. -

For this build I really don't see any reason to build a dual CPU system. If it was going to be a do it all server or a virtualization station then the more CPUs, the better as a lot of CPUs gives a lot more cores/threads and RAM too.

-

If you ever fix any of my computer equipment, I will insist that you DON'T bring the hacksaw.

If I had a brand new £10,000 workstation, I would NOT like someone to use a hacksaw on the motherboard to build it.

In fact, I use a Dremel Micro (I regret not getting the Dremel 12V version) with the 405 saw blade tool.

BTW, my main workstation is not a cheapo by any means, especially considering its water cooled 22-core Xeon E5, 64GB REG ECC RAM and 1.2TB Intel SSD, plus a pair of Dell premium line LCD monitors, plus the five-figure dollar sum I spent on software. The money I spent on RAM and SSD alone could build a top gaming machine.

I think it is just that opinions/methods can vary, as regards, physically modifying computer equipment. I.e. It is not necessarily a matter of either of us being right or wrong. Just our opinions are varying about this.

To the OP. There are some big developments, coming to server computers, in 6 months (estimated, very approximate), and later.

AMD are going to be releasing new server processors (they have been quiet on the server front for many, many years), which may dramatically lower server cpu prices and/or improve the number of cores you get, for your money.

Intel are releasing the next generation of server processors, with a significantly improved platform. -

You cannot physically install them in the slots.

Yes you can (look closely at the right edge of the x8 slot):

On the motherboard in question, note the large open space below the PCIe slot area. This is to take up any overhang from x16 boards.

Some slots being open backed != all slots being open backed.

Sadly in this case, having checked the manual for actual details, your assumption is closer to correct - most slots on that board are actually open backed. -

There are OEM version chips out there that are cheaper and more powerful. These are NOT the crappy non-reliable ES/QS ones (which are essentially silicon lottery).

OEM chips are massively produced chips that target only big customers like Oracle or Cisco, that build specialized servers in massive quantity.

Some of the chips leak onto eBay through the grey market. There is a lively market that smuggles OEM chips to evade the IP costs imposed on their retail counterparts.

I would suggest a single socket E5-2679v4 or E5-2696v4, which is more powerful than 2*your chosen CPU, at only slightly higher price, plus it doesn't have QPI performance penalty for multi-socket systems.

When you really need more power, just add another one to double the performance.

My E5-2696v4 absolutely rocks, it is only 1/3 the price of its retail counterpart, E5-2699v4, while all specs are exactly the same except for max turbo boost frequency -- it is actually 0.1GHz higher than 2699v4, and the specific step (silicon revision) I have can turbo boost to 2.9GHz when all cores are used even in AVX mode while maintaining <110W power dissipation.

Using a single socket instead of dual sockets not only circumvented the QPI performance penalty, but also saved me $$$ on a proper server mobo, as I can use a regular X99 mobo with tons of DIYer friendly features. What's more, it makes the ITX form factor possible, so I can now own the world's most powerful shoe box computer.

blueskull, thank you for your advice. I looked into the processors you suggested, and I think I will be getting one of those. They seem to be a much better deal.

As for the rest of my components, are they any good?

Most importantly, can anyone suggest to me a good motherboard, please? I've continued searching, but I can't find one that is any better than my other suggestion by supermicro?

I'll be back on tomorrow with my concept for the server rack build.

-

I just want it to be something future-proofed. Something that I can continue to upgrade further and further in the future.

That's why I mentioned (or hinted) that roughly every ten years Intel brings out a dramatically new/improved overall scheme of things for servers/workstations. We are getting somewhat close to the next big one.

I'm NOT sure if it is weeks, months or later this year, away. Maybe someone who knows more about the release schedule (probably secret) can chime in.

But buying now, is not the end of the world, and will still be sort of upgradable for some time.

I'm looking forward to Skylake-EP, the new Purley platform, and its improvements.

Since the exact details tend to be by rumors/leaks, I can't accurately attempt to put you off buying the current motherboards and cpus.

If you need the thing NOW, then it can't be helped.

N.B. I'm known to be too enthusiastic about new/upcoming products, and tend to over-emphasise waiting for them. So feel free to ignore this post and buy the existing one.

But the most future proof system, is the next one (hopefully not too long away). Because it will remain for something like ten years (ultra approx), in its basic overall concept.

tl;dr

Due to secrecy surrounding the new system, I'm not sure how much better it will be. But it sounds very promising, in some areas. -

For the last 5-10 years, nothing really impressive has been released regarding CPU performance. On Intel chips the focus has been on lower power consumption and faster integrated graphics, that's pretty much it. I don't expect the next generation of CPUs to be much different. Only AMD is going to release its next gen CPU and GPU really soon, which sounds amazing, but is it worth waiting for? Maybe, maybe NOT. Only the future will tell.

I think you should at least take a look at ASUS Professional (WS line) motherboards. They are quite reliable and have a lot of features for a decent price. But as I stated earlier, you don't need something like a dual CPU system with 32 cores on each CPU; it would be a waste of money for your purposes.

The only really valuable upgrades later on would be more HDDs and RAM, and possibly the graphics card, but if you're going for a Quadro then an upgrade will be quite expensive. -

I'm looking forward to Skylake-EP, the new Purley platform, and its improvements.

There are already top of the line chips leaked out for LGA3467 platform as well as mobos from SuperMicro on Taobao.

The top of the line chip is 32-core, 2.1GHz E5-2699v5, which has a 220W TDP.

The currently revealed E5v5 has 28 cores at 1.8GHz, but the new unreleased beast will have 32 cores at 2.1GHz.

Even so, the 220W TDP does not make it any more power efficient than the v4 platform: 220W/32/2.1GHz=3.27W/GHz per core, whereas the current flagship E5v4 has a 145W TDP, which corresponds to 145W/22/2.2GHz=3.00W/GHz per core.
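The same comparison as a tiny sketch, using only the TDP, core count and base clock quoted above. The function name is just for illustration, and TDP divided by cores and base clock is a crude metric, not a measured efficiency figure.

```python
def watts_per_ghz_per_core(tdp_watts, cores, base_clock_ghz):
    """Crude efficiency metric: TDP spread over cores and base clock."""
    return tdp_watts / cores / base_clock_ghz


# Figures as quoted in this thread (the v5 part is leaked and unconfirmed).
v5 = watts_per_ghz_per_core(220, 32, 2.1)
v4 = watts_per_ghz_per_core(145, 22, 2.2)
print(f"E5v5: {v5:.2f} W/GHz per core")
print(f"E5v4: {v4:.2f} W/GHz per core")
print(f"v5 is {(v5 / v4 - 1) * 100:.0f}% higher by this metric")
```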

Also, it is unknown what will be all-core turbo boost frequency of the new chip, while the v4 generation can turbo boost up to 2.8GHz when all cores are crunching numbers.

I'm also hoping that it may allow people to use dual socket motherboards WITHOUT needing to get hold of special (often server only) "NUMA" aware software, which can properly exploit dual socket CPU systems as regards memory. I have not seen details yet showing to what extent Intel have achieved this. There will probably still be a benefit from using special software (NUMA etc).

It is perfectly possible Intel show little or no improvement in this respect. I just don't know yet.

Because (as you said in an earlier post), the existing two socket motherboards, have relatively slow inter-memory (QPI) links between them. Making NUMA aware/capable software somewhat important, if you want to get the best performance out of it.

Hopefully the new platform (Purley) will have much better/faster memory links between the two cpu sockets.

If not, a maximum of 32 CPU cores (probably more later, on the new/fresh platform), even on a single socket, does not sound too bad.

The next one will be rather "future proof", because it will take (the motherboard), at least two or more generations of Intel server chips. I.e. will remain current for probably 5 or more years (NOT guaranteed), for the new socket type.

Whereas the existing/current one (the OP seems to want to get), has probably seen the last new Intel cpu, it is ever going to get, and will gradually disappear completely, once the new ones come out. But it will be still supported, for some time. -

For the last 5-10 years, nothing really impressive has been released regarding CPU performance.

For some people, it may be considerably faster, because of the new platform, "Purley". The existing platforms have limited the available performance, and this limit may be considerably extended in the upcoming new platform.

E.g. The inter-cpu memory links, are especially performance critical (for some software), and may be considerably faster, by a huge factor, in these new processors.

Or to put it another way. You can currently get lots and lots of cpu cores, with the two sockets. Something like 44 cores in total. But for some software, you CAN'T properly/usefully use so many cores. Because it spends all its time, transferring stuff across the memory links and other delays.

A well designed new platform can considerably speed up those memory links (and other stuff), so that instead of only being able to use (say) 15 of the 44 cores usefully, you may then be able to use 55 out of a total of 64 cores, giving a huge speed improvement.

I.e. using 55 cores instead of 15, because of the memory link bottleneck. -
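To spell out that hypothetical: the sketch below just turns the made-up 15-of-44 and 55-of-64 figures into utilisation and a relative speed-up. It assumes throughput scales linearly with the number of usefully busy cores, which real software rarely manages, and the function names are only illustrative.

```python
def usable_fraction(usable_cores, total_cores):
    """Share of the installed cores that do useful work."""
    return usable_cores / total_cores


def relative_throughput(usable_before, usable_after):
    """Speed-up, assuming throughput is proportional to usefully busy cores."""
    return usable_after / usable_before


old = usable_fraction(15, 44)  # hypothetical current dual socket system
new = usable_fraction(55, 64)  # hypothetical system with faster links
print(f"Current platform: {old:.0%} of cores usable")
print(f"New platform:     {new:.0%} of cores usable")
print(f"Speed-up:         {relative_throughput(15, 55):.1f}x")
```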

Because (as you said in an earlier post), the existing two socket motherboards, have relatively slow inter-memory (QPI) links between them. Making NUMA aware/capable software somewhat important, if you want to get the best performance out of it.

Correct. The new UPI might help, but I don't expect OmniPath to be equipped on low cost LGA2066 platform. It will be an LGA3467 exclusive feature.

The next one will be rather "future proof", because it will take (the motherboard), at least two or more generations of Intel server chips. I.e. will remain current for probably 5 or more years (NOT guaranteed), for the new socket type.

I would think twice. Starting from Skylake, HEDT will not be Xeon compatible. There will be an X299 mobo that uses LGA2066, targeting workstations and enthusiast gamers, and there will be a C622 mobo that uses LGA3467, which targets multi-socket servers or MIC chips.

Since from then on cheap grey market server CPUs can't be used on cheap consumer grade mobos, I would say the platform will be considerably more expensive than the current X99/LGA2011-3 platform. You will have to pay Intel either for a more expensive server mobo or for a retail CPU. Using OEM CPUs on consumer mobos will be a thing of the past.

Intel may be being a bit too interested in their high profit margins, by not allowing the xeons to work on the cheaper/consumer motherboards. But AMD's upcoming new zen (and different code names) cpus, will hopefully provide much needed competition. So that Intel will play better/fairer in the future.

You should still be able to buy reasonable (but a lot more expensive) single server socket motherboards, for the xeons. So it should not be so bad. Except your tiny ITX water cooled computer, may no longer exist as an option, if you are unlucky about it.