-

Memory management bug in Intel CPUs threatens massive performance hits.

Posted by

Ampera

on 03 Jan, 2018 13:47

-

https://www.theregister.co.uk/2018/01/02/intel_cpu_design_flaw/

Lovely for people like me who run an i7-4790k.

Curious to know what this crippling bug actually is, but from what was described, I'm about ready to join with the rest of the Intel users here in giving Intel a collective backhand slap to the head.

So the question is now what sort of performance hits could we be seeing...

-

#1 Reply

Posted by

stj

on 03 Jan, 2018 14:33

-

it's a memory management "bug" that should stop regular code seeing kernel workings - in simple terms.

if fixed in the way they are suggesting the hit will be absolutely huge.

they are talking about flushing the cpu cache every time a user-space thread makes a system call.

keep in mind the cache is what makes a difference between a celeron and a xeon!!!

-

#2 Reply

Posted by

bd139

on 03 Jan, 2018 14:51

-

-

#3 Reply

Posted by

Jeroen3

on 03 Jan, 2018 15:03

-

-

#4 Reply

Posted by

wraper

on 03 Jan, 2018 15:23

-

As a fix, they reset the translation lookaside buffer (TLB) on each context switch.

The linux devs called it FUCKWIT

Forcefully Unmap Complete Kernel With Interrupt Trampolines
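To get a feel for why resetting the TLB hurts, here is a toy model, not a measurement. It assumes a full flush on every kernel entry and exit, which is the worst case; the class and function names, the page numbers, and the sizes are all made up for illustration.

```python
from collections import OrderedDict


class ToyTLB:
    """Tiny LRU cache of page numbers standing in for a TLB (illustrative only)."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.entries = OrderedDict()
        self.misses = 0

    def access(self, page):
        if page in self.entries:
            self.entries.move_to_end(page)  # hit: refresh LRU position
        else:
            self.misses += 1                # miss: would need a page walk
            self.entries[page] = True
            if len(self.entries) > self.capacity:
                self.entries.popitem(last=False)

    def flush(self):
        self.entries.clear()


def count_misses(flush_on_kernel_entry, syscalls=1000, pages_per_burst=8, capacity=64):
    """Count TLB misses for a loop of user work followed by a system call."""
    tlb = ToyTLB(capacity)
    user_pages = range(pages_per_burst)
    kernel_pages = range(1000, 1000 + pages_per_burst)  # hypothetical page numbers
    for _ in range(syscalls):
        for page in user_pages:
            tlb.access(page)
        if flush_on_kernel_entry:
            tlb.flush()  # entering the kernel: assumed full flush
        for page in kernel_pages:
            tlb.access(page)
        if flush_on_kernel_entry:
            tlb.flush()  # returning to user space: assumed full flush
    return tlb.misses


print(count_misses(False), count_misses(True))
```

In this model the unflushed loop only misses while warming up, while the flushed loop misses on every page after every transition. How much that costs on real hardware depends on the workload and on how many system calls it makes.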

-

#5 Reply

Posted by

Avacee

on 03 Jan, 2018 15:36

-

Short-term, we can expect Intel's share price to drop - especially when the class action lawsuits start.

But I can't help but wonder if Intel's share price will go up in the mid-term as lots of people replace/upgrade their CPUs :p

-

#6 Reply

Posted by

dr.diesel

on 03 Jan, 2018 15:47

-

Looks like a fix might take a branch prediction rework. If so, not trivial, and if a fix wasn't already in the works, something like this could take quite some time to fix.

-

#7 Reply

Posted by

bd139

on 03 Jan, 2018 16:14

-

-

#8 Reply

Posted by

JoeO

on 03 Jan, 2018 16:17

-

And to think that just today I was reading a post here on the EEVBLOG about how great Intel's processors are compared to AMD's.

-

#9 Reply

Posted by

bd139

on 03 Jan, 2018 16:30

-

They're both as shit as each other. AMD has had a fair number of problems too. If that makes you feel better.

Really this has been on the cards for a number of years. All current Intel (and AMD) CPUs are pretty much emulators. They are actually crazy hyper-pipelined RISC microcoded virtual machines that happen to run x86 and x86-64 instructions. The problem here is that most bugs can be fixed by changing the virtual machine implementation (microcode), but this one is actually in the physical virtual machine implementation at the bottom of the pile of turds. They hire hordes of design verification engineers to make sure that there are no holes in the native and virtual execution environments but this one slipped through. Actually quite a few have slipped through, causing everything from random process crashes to big security holes like ASLR bypass.

And this is what happens, people, when you layer abstractions so deep and so complicated that you require several volumes of books just to explain the ISA, and maintain backwards compatibility with what is fundamentally some crack smoke inspired architecture from the late 1970s.

I hope the hell POWER wins some fans out of this.

-

#10 Reply

Posted by

Ampera

on 03 Jan, 2018 17:01

-

It's still a case of sit back and see how bad shit gets. Hope for the best, expect the worst.

-

#11 Reply

Posted by

dr.diesel

on 03 Jan, 2018 18:28

-

-

#12 Reply

Posted by

bd139

on 03 Jan, 2018 18:42

-

To be clear the problem doesn’t affect ARM as far as anyone knows but the architectural change in Linux is being applied as a “defence in depth” strategy.

-

#13 Reply

Posted by

dr.diesel

on 03 Jan, 2018 18:46

-

-

#14 Reply

Posted by

Mr. Scram

on 03 Jan, 2018 18:53

-

This is a pretty big one and they can't fix it with microcode either.

Interested to see real world load changes before everyone shits the bed however. Either way it's going to cost us a percentage more, particularly on AWS.

Intel CEO knew something was going down as well: https://www.fool.com/investing/2017/12/19/intels-ceo-just-sold-a-lot-of-stock.aspx

He wouldn't be that stupid, right? That's how you get torn apart by investigators or even go to jail.

-

#15 Reply

Posted by

bd139

on 03 Jan, 2018 18:55

-

-

#16 Reply

Posted by

Lightages

on 03 Jan, 2018 18:58

-

So do I start shopping for a Threadripper right now? Do I disable W7 updates until I get something that isn't going to be pulled back to 2010 levels of performance?

-

#17 Reply

Posted by

bd139

on 03 Jan, 2018 19:11

-

I would sit down and do nothing for now and see what happens. Most of the embargoes are only lifted tomorrow with patches as well so time will tell.

-

#18 Reply

Posted by

Gyro

on 03 Jan, 2018 19:19

-

This is a pretty big one and they can't fix it with microcode either.

Interested to see real world load changes before everyone shits the bed however. Either way it's going to cost us a percentage more, particularly on AWS.

Intel CEO knew something was going down as well: https://www.fool.com/investing/2017/12/19/intels-ceo-just-sold-a-lot-of-stock.aspx

He wouldn't be that stupid, right? That's how you get torn apart by investigators or even go to jail.

Combined with this quote from the link that dr.diesel posted...

Microsoft has been testing the Windows updates in the Insider program since November,

It does look dangerously close to insider trading.

-

#19 Reply

Posted by

Ampera

on 03 Jan, 2018 20:23

-

I am hearing anecdotal claims that the effect isn't as bad in 3D workloads as claimed, but it's still yet to be seen.

I don't think it will affect the consumer or even generic power user as much as people who work with hypervisors.

It's definitely a dancing day for AMD, though. With AMD back in the game, who knows if this is going to sink Intel's 5-6 year strong lead.

-

#20 Reply

Posted by

Mr. Scram

on 03 Jan, 2018 20:30

-

I am hearing anecdotal claims that the effect isn't as bad in 3D workloads as claimed, but it's still yet to be seen.

I don't think it will affect the consumer or even generic power user as much as people who work with hypervisors.

It's definitely a dancing day for AMD, though. With AMD back in the game, who knows if this is going to sink Intel's 5-6 year strong lead.

AMD was lagging by a single digit percentage in performance, but if these percentages turn out to be correct AMD might very well lead by the same margin. I'm loath to think what discussions this will cause amongst the fanboys on either side.

-

#21 Reply

Posted by

tszaboo

on 03 Jan, 2018 20:36

-

Are you people crazy? It affects Virtual machines that can read from each other. It only affects you, if you are running more than 1 virtual machines on your PC server, and one would run malicious code, specifically designed to attack the other virtual machine. This is only an issue for cloud providers.

99.9999% of PC users are not affected.

-

#22 Reply

Posted by

Monkeh

on 03 Jan, 2018 20:40

-

Are you people crazy? It affects Virtual machines that can read from each other. It only affects you, if you are running more than 1 virtual machines on your PC server, and one would run malicious code, specifically designed to attack the other virtual machine. This is only an issue for cloud providers.

99.9999% of PC users are not affected.

.. no, no, that isn't it.

This is an issue which can potentially allow an unprivileged user-mode process to read kernel memory.

-

#23 Reply

Posted by

pigrew

on 03 Jan, 2018 20:45

-

Do VM hypervisors normally allow multiple VMs to execute simultaneously (by dividing up cores)?

-

#24 Reply

Posted by

Monkeh

on 03 Jan, 2018 20:49

-

Do VM hypervisors normally allow multiple VMs to execute simultaneously (by dividing up cores)?

Sure. Or you'd have a VM with one core assigned blocking the whole shebang.

-

-

Are you people crazy? It affects Virtual machines that can read from each other. It only affects you, if you are running more than 1 virtual machines on your PC server, and one would run malicious code, specifically designed to attack the other virtual machine. This is only an issue for cloud providers.

99.9999% of PC users are not affected.

Nope. The ASLR leak has been demonstrated from Javascript so any code running from a web page you have visited can exploit MMU timing to resolve the address of kernel mode data structures and subsequently it just needs an exploit for buffer overflow etc or rewriting the stack return address and you are pwned. But ignorance is bliss.

https://www.vusec.net/projects/anc/

-

-

Is this even an issue for standalone PCs ?

-

#27 Reply

Posted by

dr.diesel

on 03 Jan, 2018 20:56

-

-

#28 Reply

Posted by

Monkeh

on 03 Jan, 2018 20:57

-

Is this even an issue for standalone PCs ?

Yes - your applications aren't meant to be able to find the kernel, let alone read it.

-

#29 Reply

Posted by

Ampera

on 03 Jan, 2018 21:00

-

-

#30 Reply

Posted by

PA0PBZ

on 03 Jan, 2018 21:00

-

Intel's PR response:

https://newsroom.intel.com/news/intel-responds-to-security-research-findings/

Look what they did there:

Intel is committed to product and customer security and is working closely with many other technology companies, including AMD, ARM Holdings and several operating system vendors, to develop an industry-wide approach to resolve this issue promptly and constructively.

-

#31 Reply

Posted by

Mr. Scram

on 03 Jan, 2018 21:04

-

This is rather interesting. I read that this only affects Intel chips, yet Intel is stating it affects AMD and Acorn chips as well.

They don't seem to be actually saying this. Just conveniently mentioning it together.

Obviously, Intel is in full damage control mode right now. This might be the moment they lose the crown to AMD, especially considering they've taken a few hits in the recent past. There is no way they wouldn't downplay the issue on their side and attempt to shift the focus elsewhere.

Regardless of which party you like more, Intel has shown itself, again and again, to be very shrewd and ruthless when it comes to marketing.

-

#32 Reply

Posted by

langwadt

on 03 Jan, 2018 21:05

-

-

#33 Reply

Posted by

Ampera

on 03 Jan, 2018 21:09

-

Darn me and my inability to read.

Yeah, this does seem like Intel is starting to freak out. Good.

AMD should take the x86 helm and keep it. Intel has been being a dick about things for way too long. Before now there just wasn't another alternative.

-

#34 Reply

Posted by

Cerebus

on 03 Jan, 2018 21:15

-

Intel's PR response:

https://newsroom.intel.com/news/intel-responds-to-security-research-findings/

This is rather interesting. I read that this only affects Intel chips, yet Intel is stating it affects AMD and Acorn chips as well.

To quote Mandy Rice-Davies: "Well, 'e would [say that], wouldn't he?".

There is no currently extant evidence that this problem affects anyone else, just Intel.

Although the "official" explanation isn't out yet, what the problem appears to be is: on Intel's chips that support speculative execution, tests for whether a privilege violation has taken place are delayed until retirement of speculative executions. Thus, say, a speculative read of kernel space by a user process can actually retrieve results from kernel space before being 'caught' by a privilege violation exception rather than being prevented from making the access in the first place. Quite how one exploits that to grab the accessed information before the exception takes place is the tricky bit, but the process of catching the violation after it has actually taken place, as opposed to preventing the violation taking place, is clearly flawed by design.
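The difference between catching the violation afterwards and preventing it up front can be sketched as a toy model. This is not how any real CPU is implemented; `ToyCPU`, its fields, and the addresses are hypothetical, and the "cache" is just a set of probe-line indexes that a dependent access would have touched.

```python
class ToyCPU:
    """Toy model of a user-mode load with an early or a late privilege check."""

    def __init__(self, memory, kernel_addresses, check_before_access):
        self.memory = memory                          # address -> byte value
        self.kernel_addresses = set(kernel_addresses)
        self.check_before_access = check_before_access
        self.cached_lines = set()                     # probe lines left in the cache

    def user_load_then_probe(self, address):
        """Load `address`, then touch probe line number `value`.

        Returns the value for legal loads and None when the load faults.
        """
        is_kernel = address in self.kernel_addresses
        if is_kernel and self.check_before_access:
            return None                    # fault before any data moves
        value = self.memory[address]       # load happens (possibly transiently)
        self.cached_lines.add(value)       # dependent access leaves a footprint
        if is_kernel:
            return None                    # fault at retirement: result discarded,
                                           # but the cache footprint stays
        return value


memory = {0: 7, 100: 42}                   # address 100 is "kernel" data
late = ToyCPU(memory, [100], check_before_access=False)
early = ToyCPU(memory, [100], check_before_access=True)
print(late.user_load_then_probe(100), late.cached_lines)
print(early.user_load_then_probe(100), early.cached_lines)
```

Both models refuse to hand the value back, but only the late-check model leaves a trace whose position encodes the secret byte.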

-

#35 Reply

Posted by

Ampera

on 03 Jan, 2018 21:16

-

I thought speculative execution was a P5 feature, unless I am thinking of something else.

If that is the case I don't own an Intel chip without it.

-

#36 Reply

Posted by

MT

on 03 Jan, 2018 21:17

-

https://www.theregister.co.uk/2018/01/02/intel_cpu_design_flaw/

Think of the kernel as God sitting on a cloud, looking down on Earth. It's there, and no normal being can see it, yet they can pray to it.

And those who have a CPU more than a decade old and are not religious are safe? This time we can actually see and poke god in his/her eye!

-

#37 Reply

Posted by

JoeO

on 03 Jan, 2018 21:23

-

-

#38 Reply

Posted by

bd139

on 03 Jan, 2018 21:27

-

Some comments and anger.

----

Intel quote "Intel believes its products are the most secure in the world and that, with the support of its partners, the current solutions to this issue provide the best possible security for its customers."

Fucking bollocks.

https://danluu.com/cpu-bugs/ - Intel have a very bad security record when it comes to microcode, Intel ME, horrible Atom bugs, FDIV etc. That's just basically someone saying "hey chaps Chernobyl wasn't all that bad! We've still got the best nuclear power plant in the world. Can you poke the cameras the other way please, away from the blue glow in the windows from the Cherenkov radiation"

Also going back to 2007 (!) Theo de Raadt on x86:

https://marc.info/?l=openbsd-misc&m=119318909016582

IA32 and x86-64 are piles of rancid shit. There are people who have left Intel now shitposting about how bad their design verification was and the management team pushing "velocity" because they got urinated upon in the mobile sector

----

Intel quote 2: "Intel is committed to product and customer security and is working closely with many other technology companies, including AMD, ARM Holdings and several operating system vendors, to develop an industry-wide approach to resolve this issue promptly and constructively."

Fuck them in the ass. What a bunch of spin doctoring cunts. They have literally zero honour dragging AMD and ARM into this. I would be fucking pissed. This could hurt stock and reputation for a potential non issue. That's just evil.

----

Are you people crazy? It affects Virtual machines that can read from each other. It only affects you, if you are running more than 1 virtual machines on your PC server, and one would run malicious code, specifically designed to attack the other virtual machine. This is only an issue for cloud providers.

99.9999% of PC users are not affected.

As alluded to in a previous post, this is a privilege escalation bug which allows kernel memory to be read by user processes. There is a proof of concept already demonstrated which is enough to pull vectors out of kernel RAM. This entirely defeats ASLR and entirely deletes the privsep implementation of x86-64. Virtualization via VT-x is another layer of abstraction above this and we don't know exactly how that is affected yet. My big worry with this is VMCS shadowing. I am almost 100% sure this is a turd which is going to fall under this. Maybe not for a few months yet. If you look at how the EPT / TLB implementation is, adding IOMMU and virtualization support, it's difficult to consolidate exactly how the hell it all fits together. It's so complicated that it's like the film "the Cube". I don't think any engineering team, be it forward engineering or design validation, can actually rationally test the whole thing.

Ugh this is a nightmare just unfolding.

-

#39 Reply

Posted by

Decoman

on 03 Jan, 2018 21:28

-

I think it is fair to assume that every Intel cpu has "NSA inside" with Intel's management engine. :|

Personally, I think owning a computer these days is just a horror show. No privacy, bad security, bad software and what I like to think of as being the police state (what people call 'surveillance state').

Afaik, any catastrophic security flaw involving the management engine has been expected for quite some time now.

-

#40 Reply

Posted by

tszaboo

on 03 Jan, 2018 21:50

-

Are you people crazy? It affects Virtual machines that can read from each other. It only affects you, if you are running more than 1 virtual machines on your PC server, and one would run malicious code, specifically designed to attack the other virtual machine. This is only an issue for cloud providers.

99.9999% of PC users are not affected.

.. no, no, that isn't it.

This is an issue which can potentially allow an unprivileged user-mode process to read kernel memory.

Then either they have more than 1 issue at the same time, or IDK what is going on.

https://www.techpowerup.com/240174/intel-secretly-firefighting-a-major-cpu-bug-affecting-datacenters

The vulnerability lets users of a virtual machine (VM) access data of another VM on the same physical machine (a memory leak).

Anyway, others write that all x86 is affected, even ARM (sounds like bullshit, but possible). We will see in about a week; until then it is all speculation.

-

#41 Reply

Posted by

bd139

on 03 Jan, 2018 21:56

-

Then either they have more than 1 issue at the same time, or IDK what is going on.

Some people are responding to Xen hypervisor embargoed XSA-253:

https://xenbits.xen.org/xsa/ ...

I am currently hating that dealing with all this shit is my hat. I should have migrated to China in the late 1990s and done EE there

Also Amazon have started randomly rebooting AWS instances now, probably applying patches. Fun fun fun for me over the next few days.

-

-

These look like extra juicy news. I'm looking forward to seeing how this unrolls.

-

#43 Reply

Posted by

Macbeth

on 03 Jan, 2018 21:59

-

maybe the Intel guys fixing the bug are overly cautious, or they just don't want AMD to have an advantage, but ...

https://lkml.org/lkml/2017/12/27/2

PMSL

if (c->x86_vendor != X86_VENDOR_AMD)
    setup_force_cpu_bug(X86_BUG_CPU_INSECURE);

Enough said. But shit, all my CPUs are Intel right now...
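The quoted check marks every non-AMD x86 CPU as having the bug. On later Linux kernels the resulting flags show up on the `bugs` line of `/proc/cpuinfo`; the exact flag names in the sample below (`cpu_meltdown` and friends) are how I recall them from later kernels and should be treated as an assumption, as should the helper name `parse_cpu_bugs`. A minimal sketch for reading them:

```python
def parse_cpu_bugs(cpuinfo_text):
    """Return the set of flags on the first `bugs` line of /proc/cpuinfo text."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "bugs":
            return set(value.split())
    return set()  # no `bugs` line: older kernel or nothing reported


# Illustrative sample only, not captured from a real machine.
SAMPLE = """\
processor\t: 0
vendor_id\t: GenuineIntel
bugs\t\t: cpu_meltdown spectre_v1 spectre_v2
"""

print(sorted(parse_cpu_bugs(SAMPLE)))
```

On a Linux box the same function can be fed `open("/proc/cpuinfo").read()`; an empty result only means the kernel reported nothing, not that the CPU is safe.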

-

#44 Reply

Posted by

Ampera

on 03 Jan, 2018 22:01

-

In essence, it's half the people in the industry collectively shitting their pants and the other half waiting to see how bad it stinks.

maybe the Intel guys fixing the bug are overly cautious, or they just don't want AMD to have an advantage, but ...

https://lkml.org/lkml/2017/12/27/2

PMSL

if (c->x86_vendor != X86_VENDOR_AMD)
    setup_force_cpu_bug(X86_BUG_CPU_INSECURE);

Enough said. But shit all my CPU's are Intel right now...

Lol.

I own one Intel CPU that's affected, my main i7-4790k. All others are either AMD or too old to be affected (Pentium 3, Pentium 4, Pentium Pro, basically, I'm all about the Pentiums).

CPUs get more annoying by the day. It's a battle with no victor between people who wish to break down computers for profit and people who want to keep people secure. What it comes down to is people don't care about security, they care about a fast, simple, speedy device, with little else.

-

#45 Reply

Posted by

bd139

on 03 Jan, 2018 22:02

-

That AMD patch enabled this to happen:

-

#46 Reply

Posted by

amyk

on 03 Jan, 2018 22:27

-

The link posted before, https://twitter.com/brainsmoke/status/948561799875502080 , is currently the only public demonstration I know, but you can see how it works in general: if an address has been recently accessed, then it will be in the cache so it will be faster to access than one which hasn't. My guess is that the CPU will do a speculative access and cache the data even if the access turns out to be invalid, altering the timing thereafter.
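The timing idea can be illustrated with simulated numbers. Nothing here measures real hardware: the latencies, the jitter, the threshold, and the function names are all assumptions picked to make the principle visible. The attacker times an access to each of 256 probe lines and looks for the single fast one.

```python
import random

CACHED_CYCLES = 40     # assumed latency of a cache hit
UNCACHED_CYCLES = 200  # assumed latency of a trip to main memory


def simulated_probe_latencies(secret_byte, rng):
    """Simulated access time for each of 256 probe lines.

    Only the line whose index equals the secret byte was pulled into the
    cache by the earlier (speculative) access, so only that one is fast.
    """
    latencies = []
    for index in range(256):
        base = CACHED_CYCLES if index == secret_byte else UNCACHED_CYCLES
        latencies.append(base + rng.randint(0, 20))  # small measurement jitter
    return latencies


def recover_byte(latencies, threshold=120):
    """Return the index of the single fast line, or None if ambiguous."""
    fast = [i for i, cycles in enumerate(latencies) if cycles < threshold]
    return fast[0] if len(fast) == 1 else None


rng = random.Random(0)
print(recover_byte(simulated_probe_latencies(65, rng)))
```

Real attacks have to cope with noise, prefetchers and retries, which is part of why the measured read rates are low.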

Intel's response that it's "operating as designed" is because no one ever thought this would be a real problem, and so far it remains to be seen how much of one it really is.

Is this even an issue for standalone PCs ?

Yes - your applications aren't meant to be able to find the kernel, let alone read it.

It depends on what applications you run, and whether you trust them. Obviously if you trust everything running on the CPU, e.g. like in an embedded system, this has little relevance. If you're a cloud provider or user with hardware being shared by dozens if not more users who don't trust each other at all, then it's a big problem.

This also theoretically includes things like Javascript running in browsers, so you need to be careful of any untrusted code running on your system, but if you don't have any, the situation hasn't changed.

It will be interesting to see what happens...

-

#47 Reply

Posted by

station240

on 03 Jan, 2018 22:31

-

Speculation that Intel will have to repeat the FDIV bug offer of replacement CPUs.

I can't imagine large data center companies like Amazon not demanding replacement silicon, given how huge the CPU hit is for the workaround for this bug.

Given how much shit Apple got in for slowing down CPUs in iPhones with weak batteries, I cannot imagine consumers being too pleased with Intel either.

-

#48 Reply

Posted by

bd139

on 03 Jan, 2018 22:33

-

If they offered replacements it would destroy them entirely. Watch the corporate wriggling over the next few months.

-

#49 Reply

Posted by

Mr. Scram

on 03 Jan, 2018 22:39

-

Speculation that Intel will have to repeat the FDIV bug offer of replacement CPUs.

I can't imagine large data center companies like Amazon not demanding replacement silicon, given how huge the CPU hit is for the workaround for this bug.

Given how much shit Apple got in for slowing down CPUs in iPhones with weak batteries, I cannot imagine consumers being too pleased with Intel either.

Intel never guaranteed performance and the chips still work, so I guess they're off the hook there. A problem with Apple was that they hid it and looked like they were slowing down old hardware to sell new hardware. Intel isn't hiding the problem and not hiding the performance hit.

-

#50 Reply

Posted by

glarsson

on 03 Jan, 2018 22:46

-

-

#51 Reply

Posted by

wraper

on 03 Jan, 2018 23:08

-

Intel's PR response:

https://newsroom.intel.com/news/intel-responds-to-security-research-findings/

This is rather interesting. I read that this only affects Intel chips, yet Intel is stating it affects AMD and Acorn chips as well.

Intel are some huge dicks. They wrote the statement in a sleazy way, as if to suggest AMD is affected as well but without actually saying so:

Recent reports that these exploits are caused by a “bug” or a “flaw” and are unique to Intel products are incorrect. Based on the analysis to date, many types of computing devices — with many different vendors’ processors and operating systems — are susceptible to these exploits.

Intel is committed to product and customer security and is working closely with many other technology companies, including AMD, ARM Holdings and several operating system vendors, to develop an industry-wide approach to resolve this issue promptly and constructively.

That caused AMD stock to drop a few percent, then AMD replied with:

To be clear, the security research team identified three variants targeting speculative execution. The threat and the response to the three variants differ by microprocessor company, and AMD is not susceptible to all three variants. Due to differences in AMD’s architecture, we believe there is a near zero risk to AMD processors at this time. We expect the security research to be published later today and will provide further updates at that time.

-

#52 Reply

Posted by

andersm

on 03 Jan, 2018 23:32

-

The details have now been released at https://spectreattack.com/. The Meltdown attack, which is more serious at least in the short term, affects only Intel CPUs, while the Spectre attacks probably affect every processor featuring speculative execution.

-

#53 Reply

Posted by

bd139

on 03 Jan, 2018 23:33

-

-

#54 Reply

Posted by

wraper

on 03 Jan, 2018 23:44

-

Variant 1: Bounds check bypass

This section explains the common theory behind all three variants and the theory behind our PoC for variant 1 that, when running in userspace under a Debian distro kernel, can perform arbitrary reads in a 4GiB region of kernel memory in at least the following configurations:

Intel Haswell Xeon CPU, eBPF JIT is off (default state)

Intel Haswell Xeon CPU, eBPF JIT is on (non-default state)

AMD PRO CPU, eBPF JIT is on (non-default state)

Apparently the only AMD CPUs that tested as affected are old models running Linux with a non-default config.
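For anyone wondering what "bounds check bypass" means in practice, here is a toy model of the idea, not the PoC from the write-up. The predictor, the `victim` function, and the memory layout are all hypothetical; the point is only that a branch predictor trained on in-bounds calls lets one out-of-bounds read run speculatively and leave a cache footprint.

```python
class ToyBranchPredictor:
    """Predicts the bounds check from the last few real outcomes."""

    def __init__(self):
        self.history = []

    def predict_in_bounds(self):
        recent = self.history[-4:]
        return len(recent) > 0 and all(recent)

    def record(self, in_bounds):
        self.history.append(in_bounds)


def victim(index, array_len, memory, predictor, cached_lines):
    """Models `if (index < array_len) touch(probe[memory[index]])`."""
    predicted = predictor.predict_in_bounds()
    actual = index < array_len
    if predicted or actual:
        # Runs either for real or speculatively on a wrong prediction;
        # in both cases the probe line ends up in the cache.
        cached_lines.add(memory[index])
    predictor.record(actual)
    return actual  # architecturally, only this result is visible


memory = [1, 2, 3, 4] + [0] * 6 + [99]  # 4-element array, "secret" 99 at index 10
predictor = ToyBranchPredictor()
cached = set()
for i in range(4):
    victim(i, 4, memory, predictor, cached)  # training with in-bounds indexes
cached.clear()                               # attacker flushes the probe lines
print(victim(10, 4, memory, predictor, cached), cached)
```

The out-of-bounds call is correctly rejected, yet the set of cached lines now names the secret value, which a timing probe could then read back.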

-

#55 Reply

Posted by

bd139

on 03 Jan, 2018 23:51

-

I'm not sure they actually cover every CPU stepping and architecture with the test cases. What would be nice is a red/green test book of what has and hasn't been tested.

Well looks like I'm in for a late night

-

#56 Reply

Posted by

Koldman

on 04 Jan, 2018 00:48

-

I don't quite understand the whole thing, but I feel like the kid that saved all his paper route money and bought a new bike only for it to fall apart.

-

#57 Reply

Posted by

MT

on 04 Jan, 2018 01:07

-

Soo are Intel huge dicks or not?

-

#58 Reply

Posted by

wraper

on 04 Jan, 2018 01:13

-

Soo are Intel huge dicks or not?

Average

-

#59 Reply

Posted by

MT

on 04 Jan, 2018 01:16

-

So Intel are average dicks!? Soooo what are AMD and ARM?

-

#60 Reply

Posted by

wraper

on 04 Jan, 2018 01:19

-

So Intel are average dicks!? Soooo what are AMD and ARM?

Not enough data yet.

-

#61 Reply

Posted by

MT

on 04 Jan, 2018 01:25

-

Ahhh! so in meantime we just go ape shouting "world end is near"!

-

#62 Reply

Posted by

wraper

on 04 Jan, 2018 01:34

-

https://www.amd.com/en/corporate/speculative-execution

Variant One (Bounds Check Bypass): Resolved by software / OS updates to be made available by system vendors and manufacturers. Negligible performance impact expected.

Variant Two (Branch Target Injection): Differences in AMD architecture mean there is a near zero risk of exploitation of this variant. Vulnerability to Variant 2 has not been demonstrated on AMD processors to date.

Variant Three (Rogue Data Cache Load): Zero AMD vulnerability due to AMD architecture differences.

-

#63 Reply

Posted by

wraper

on 04 Jan, 2018 01:47

-

As I understand it from data currently available, AMD is only affected on Linux with non default configuration.

AMD PRO CPU, eBPF JIT is on (non-default state)

-

#64 Reply

Posted by

andersm

on 04 Jan, 2018 01:48

-

-

#65 Reply

Posted by

David Hess

on 04 Jan, 2018 05:00

-

Looks like a fix might take a branch prediction rework. If so, not trivial, and if a fix wasn't already in the works, something like this could take quite some time to fix.

The problem is speculative execution accessing protected memory. The fix would be to fault the speculated instructions before they access memory instead of at retirement which is what AMD does by tagging the TLBs so the speculated memory accesses to protected memory do not occur.

-

#66 Reply

Posted by

David Hess

on 04 Jan, 2018 05:03

-

And this is what happens, people, when you layer abstractions so deep and so complicated that you require several volumes of books just to explain the ISA, and maintain backwards compatibility with what is fundamentally some crack smoke inspired architecture from the late 1970s.

The problem occurs because of how speculative execution works so it applies to RISC designs as well. ARM is apparently vulnerable to it but AMD is not because they tag and invalidate their TLBs which prevents this very problem.

-

#67 Reply

Posted by

andersm

on 04 Jan, 2018 06:25

-

But AMD CPUs have this Cortex A5 management unit running inside, and since it's part of the security subsystem, I assume it has a higher security clearance. What if the hacker can inject some bad code pieces to the ARM firmware, then using it to attack the ARM, then using the ARM to attack the Zen cores?

The Cortex-A5 is an in-order core, so it is not vulnerable to anything involving speculative execution. Also, these attacks only allow for extracting data, they can't (directly) be used to modify anything.

-

#68 Reply

Posted by

Mr.B

on 04 Jan, 2018 07:30

-

Thank you to all the very knowledgeable low level CPU experts here.

This post is just to acknowledge the community experts and bookmark this thread so that I can follow it easily.

The gravity of this situation intrigues me….

The combination of the immense possible damage to Intel and the resulting fallout in the general computing arena, be it attacks or a resultant processing impact due to an OS level patch, cannot be overstated IMHO.

-

#69 Reply

Posted by

Jeroen3

on 04 Jan, 2018 07:30

-

If they offered replacements it would destroy them entirely. Watch the corporate wriggling over the next few months.

Since when has the bug been present? I read apocalyptic headlines saying two decades, but that seems a bit long.

-

#70 Reply

Posted by

Mr. Scram

on 04 Jan, 2018 07:38

-

Since when has the bug been present? I read apocalyptic headlines saying two decades, but that seems a bit long.

I think I read Sandy Bridge and up, which seems to make some sense from an architectural point of view.

-

#71 Reply

Posted by

bd139

on 04 Jan, 2018 07:53

-

I think this could go a long way back as suggested. Speculative out of order execution goes back to Pentium Pro if I remember correctly. It would be nice to confirm it either way but the effort required is likely extensive.

You have to ask: how long have the security services known about this?

As an example of where this is heading it looks like we’ve already had patches for AWS deployed quietly. No word from some vendors yet on patch status. I suspect some are as surprised as we are.

-

#72 Reply

Posted by

Mr. Scram

on 04 Jan, 2018 08:10

-

I think this could go a long way back as suggested. Speculative out of order execution goes back to Pentium Pro if I remember correctly. It would be nice to confirm it either way but the effort required is likely extensive.

You have to ask: how long have the security services known about this?

As an example of where this is heading it looks like we’ve already had patches for AWS deployed quietly. No word from some vendors yet on patch status. I suspect some are as surprised as we are.

There's a huge load of very critical leaks surfacing lately. If you stack those together, you basically have free rein over almost every computer. Intel ME, the various macOS vulnerabilities where you can get root access without much trouble and a few more.

-

#73 Reply

Posted by

bd139

on 04 Jan, 2018 08:19

-

Yes indeed. It doesn’t look good for the IT business at all. I have, as someone deeply involved in the security side of things, considered cashing everything I have in and bailing. It’s too bloody stressful keeping the snowflakes covered in piss alive (google “programming sucks” for context of that comment).

There’s a bigger one on the cards as well. While this is confined to a single machine we’re actually running short on viable crypto tech at the moment. The cat and mouse game that is played against ciphers, key exchange and transport layer protocols is currently letting the cat do some serious catching up...

-

#74 Reply

Posted by

JoeN

on 04 Jan, 2018 09:41

-

Is this even an issue for standalone PCs ?

The Spectre attack can be delivered as Javascript which means some site you go to could deliver it and search your memory for something interesting and phone home. The attack is actually pretty slow though, I guess maybe it's not likely to find anything, but it can randomly poke around. Fixing Javascript to disallow it should be easy, though.

https://spectreattack.com/spectre.pdf

https://meltdownattack.com/meltdown.pdf

"The unoptimized code in Appendix A reads approximately 10KB/second on an i7 Surface Pro 3."

The attack is right in this document in C, they don't give a Javascript example, I think for a good reason.
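To put "pretty slow" into numbers, here is a back-of-the-envelope calculation using the quoted figure. It assumes "10KB/second" means 10 × 1024 bytes per second and that the rate holds steady, neither of which the paper promises; the helper name is made up.

```python
def seconds_to_read(num_bytes, bytes_per_second):
    """Time needed to leak `num_bytes` at a constant leak rate."""
    return num_bytes / bytes_per_second


RATE = 10 * 1024   # quoted "approximately 10KB/second", KB taken as 1024 bytes
GIB = 1024 ** 3

hours_per_gib = seconds_to_read(GIB, RATE) / 3600
print(f"{hours_per_gib:.1f} hours per GiB")
```

So blindly sweeping gigabytes of memory at that rate takes more than a day per GiB, while a targeted read of a few kilobytes (keys, passwords) takes well under a second.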

This is Meltdown reading memory from another process:

-

#75 Reply

Posted by

dmills

on 04 Jan, 2018 10:33

-

The cat and mouse game that is played against ciphers, key exchange and transport layer protocols is currently letting the cat do some serious catching up...

I thought the underlying math was still safeish for all the work being done on number theoretic sieves and the discrete log problem?

Now attacks on protocols and implementations, that has always been the low hanging fruit when breaking these things, between side channel and just plain broken implementations.... I just LOVE people who write their own crypto.

Regards, Dan.

-

-

Is this even an issue for standalone PCs ?

The Spectre attack can be delivered as Javascript which means some site you go to could deliver it and search your memory for something interesting and phone home. The attack is actually pretty slow though, I guess maybe it's not likely to find anything, but it can randomly poke around. Fixing Javascript to disallow it should be easy, though.

"Spectre attacks can also be used to violate browser sandboxing, by mounting them via portable JavaScript code." (from the first .pdf)

They say "portable js code", sort of implying it can break any JavaScript engine sandbox, which is hardly believable because no two OS/browser/browser version/CPU/CPU version combos are the same, have the same JS engine, or produce the same code after jitting, etc. The code they show is hand-tweaked JavaScript: "Like other optimized JavaScript engines, V8 performs just-in-time compilation to convert JavaScript into machine language. To obtain the x86 disassembly of the JIT output during development, the command-line tool D8 was used. Manual tweaking of the source code leading up to the snippet above was done to get the value of simpleByteArray.length in local memory (instead of cached in a register or requiring multiple instructions to fetch)." That is hardly "portable" as they say.

"We wrote a JavaScript program that successfully reads data from the address space of the browser process running it." means they could only read the browser's memory space, which is not good but not the same nor as dangerous as "search your memory for something interesting and phone home".

OTOH, I strongly believe, I have no doubt, that ALL the browsers have, on purpose, some sort of very well hidden backdoor to pwn our computers. The keys are either in Apple/Google/Mozilla/Brave/Opera or in the NSA hands. I don't think either that heartbleed was an accident.

-

#77 Reply

Posted by

bd139

on 04 Jan, 2018 11:03

-

The cat and mouse game that is played against ciphers, key exchange and transport layer protocols is currently letting the cat do some serious catching up...

I thought the underlying math was still safeish for all the work being done on number theoretic sieves and the discrete log problem?

Now attacks on protocols and implementations, that has always been the low hanging fruit when breaking these things, between side channel and just plain broken implementations.... I just LOVE people who write their own crypto.

Regards, Dan.

At the moment, yes we're safeish but as always, the transition time between safeish and unsafe gets exponentially shorter. There's a lot of progress in quantum computing which I'm keeping one eye on. There's also some of which we probably can't see and is likely well funded. They're only factoring relatively small numbers now (tangibly brute forceable on traditional compute with an eye shut) but the gains are exponential. That could make the discrete log problem trivial or at least affordable. On a decade scale, shit might be hitting the proverbial fan.

Implementations are easy pickings, especially as everything is written in bloody C still. Also look at logjam as well where the implementation was good but a bad assumption was made on the mathematical side of things (shipping same primes everywhere).
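To make the "same primes everywhere" point concrete, here is a toy sketch in Python. The prime 65537 and generator 3 are illustrative picks of mine and far too small for real use, and the brute-force table stands in for the much heavier precomputation a real attacker would run against a real prime. The only point is that the expensive step depends on the prime alone, so it is paid once and reused against every session that shares it.

```python
import secrets

# Toy parameters, assumed for illustration only (hopelessly small for real use).
P = 65537
G = 3


def build_log_table(p=P, g=G):
    """One-off precomputation: map g**e mod p -> e for every reachable value."""
    table = {}
    value = 1
    for exponent in range(p - 1):
        table.setdefault(value, exponent)
        value = (value * g) % p
    return table


def public_key(private, p=P, g=G):
    """Public Diffie-Hellman value for a private exponent."""
    return pow(g, private, p)


def shared_secret(own_private, peer_public, p=P):
    """What each legitimate party computes."""
    return pow(peer_public, own_private, p)


def eavesdrop(table, pub_a, pub_b, p=P):
    """Recover the shared secret from the two public values alone."""
    exponent_a = table[pub_a]  # discrete log via the precomputed table
    return pow(pub_b, exponent_a, p)


if __name__ == "__main__":
    table = build_log_table()  # paid once per prime
    for _ in range(3):         # reused for every session on that prime
        a = secrets.randbelow(P - 2) + 1
        b = secrets.randbelow(P - 2) + 1
        pub_a, pub_b = public_key(a), public_key(b)
        assert eavesdrop(table, pub_a, pub_b) == shared_secret(a, pub_b)
    print("every session recovered from one table")
```

Give each deployment its own prime and that table is worthless anywhere else, which is why shipping one prime to everybody was the bad assumption.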

Is this even an issue for standalone PCs ?

The Spectre attack can be delivered as Javascript which means some site you go to could deliver it and search your memory for something interesting and phone home. The attack is actually pretty slow though, I guess maybe it's not likely to find anything, but it can randomly poke around. Fixing Javascript to disallow it should be easy, though.

"Spectre attacks can also be used to violate browser sandboxing, by mounting them via portable JavaScript code." (from the first .pdf)

They say "portable js code", sort of implying it can break any JavaScript engine sandbox, which is hardly believable because no two OS/browser/browser version/CPU/CPU version combos are the same, have the same JS engine, or produce the same code after jitting, etc. The code they show is hand-tweaked JavaScript: "Like other optimized JavaScript engines, V8 performs just-in-time compilation to convert JavaScript into machine language. To obtain the x86 disassembly of the JIT output during development, the command-line tool D8 was used. Manual tweaking of the source code leading up to the snippet above was done to get the value of simpleByteArray.length in local memory (instead of cached in a register or requiring multiple instructions to fetch)." That is hardly "portable" as they say.

"We wrote a JavaScript program that successfully reads data from the address space of the browser process running it." means they could only read the browser's memory space, which is not good but not the same nor as dangerous as "search your memory for something interesting and phone home".

OTOH, I strongly believe, I have no doubt, that ALL the browsers have, on purpose, some sort of very well hidden backdoor to pwn our computers. The keys are either in Apple/Google/Mozilla/Brave/Opera or in the NSA hands. I don't think either that heartbleed was an accident.

You may be right. You don't have to look far to find state interference in crypto implementations. Browsers are likely easier targets.

https://en.wikipedia.org/wiki/IPsec#Alleged_NSA_interference

https://en.wikipedia.org/wiki/Bullrun_(decryption_program)

http://blog.erratasec.com/2013/09/tor-is-still-dhe-1024-nsa-crackable.html

... etc etc ...

-

#78 Reply

Posted by

dr.diesel

on 04 Jan, 2018 11:51

-

-

#79 Reply

Posted by

nfmax

on 04 Jan, 2018 11:59

-

I have now turned Javascript OFF in all browsers, until further notice. YouTube no longer works. Bye bye, Dave!

-

#80 Reply

Posted by

Rerouter

on 04 Jan, 2018 12:13

-

Dr.Diesel, to better understand: no matter what, 2 of those vulnerabilities are present and unfixable in all affected Intel products, no matter how it's patched? Or are there ways to avoid it, e.g. the other poster disabling JavaScript?

-

#81 Reply

Posted by

Decoman

on 04 Jan, 2018 12:26

-

From the linked article below, some guy (lol, this was my way of trying to reference a quotation about a quotation) is referenced as having pointed out the following about Intel's Management Engine:

According to Zammit, the ME:

* has full access to memory (without the parent CPU having any knowledge);

* has full access to the TCP/IP stack;

* can send and receive network packets, even if the OS is protected by a firewall;

* is signed with an RSA 2048 key that cannot be brute-forced; and

* cannot be disabled on newer Intel Core2 CPUs.

https://www.techrepublic.com/article/is-the-intel-management-engine-a-backdoor/

This is the kind of shit that makes me sit here and think I am not really the owner or manager of my own damn computer.

-

#82 Reply

Posted by

bd139

on 04 Jan, 2018 12:30

-

I have now turned Javascript OFF in all browsers, until further notice. YouTube no longer works. Bye bye, Dave!

I don't use the browser for youtube!

https://rg3.github.io/youtube-dl/

This downloads the videos, which are then carted off to my iPhone via VLC, and I sit and watch them on the sofa with my headphones on.

I have teenagers and a shitty Internet connection so watching youtube without horrible buffering is off the cards.

This is the kind of shit that makes me sit here and think I am not really the owner or manager of my own damn computer.

You're right. Welcome to serfdom.

Really though, I've got a few Z84C0008 parts, a whole tube of MCM6810P SRAMs, some stripboard and about 50 tubes of TTL ICs here. Build my own shit computer instead!

-

#83 Reply

Posted by

dr.diesel

on 04 Jan, 2018 12:30

-

Dr.Diesel, to better understand: no matter what, 2 of those vulnerabilities are present and unfixable in all affected Intel products, no matter how it's patched? Or are there ways to avoid it, e.g. the other poster disabling JavaScript?

Patches are out for Meltdown and come with a varying performance hit, but it looks like Spectre will take a hardware fix, though it can be made more difficult to exploit via patches.

Disabling JavaScript helps prevent a browser/webpage based attack.

This is still developing, and will lead to interesting speculative execution changes for all players, including AMD i'd bet.

-

#84 Reply

Posted by

tszaboo

on 04 Jan, 2018 12:53

-

Are you people crazy? It affects virtual machines that can read from each other. It only affects you if you are running more than one virtual machine on your PC server, and one of them runs malicious code specifically designed to attack the other virtual machine. This is only an issue for cloud providers.

99.9999% of PC users are not affected.

Nope. The ASLR leak has been demonstrated from Javascript so any code running from a web page you have visited can exploit MMU timing to resolve the address of kernel mode data structures and subsequently it just needs an exploit for buffer overflow etc or rewriting the stack return address and you are pwned. But ignorance is bliss.

https://www.vusec.net/projects/anc/

That sounds pretty bad. Also, executing data? So any webpage can take over my PC. Great.

Let's just hope they fix it, the effect is not major with normal workloads, and they fix Windows 7 also. I don't feel like downgrading my PC to Windows 10.

-

#85 Reply

Posted by

bd139

on 04 Jan, 2018 13:04

-

-

#86 Reply

Posted by

Decoman

on 04 Jan, 2018 13:10

-

You're right. Welcome to serfdom.

Well, I have to say it is even worse than that. Given the reach of surveillance and hacking and other terrible things, when nation states target individuals, the threat is real. I personally don't think I can really travel to the USA or the UK, because I have opinions that basically deem these government institutions villains. But enough about that. I am confident that I am on some list somewhere, and yet I have done nothing wrong.

I never forget that one time some random guy in an IRC chat asked me if I owned a firearm (iirc) and if I was a member of an organization. And the truth was ofc that I had none and wasn't in any organization.

I like playing Arma 3 (most fun game as multiplayer, but terrible game mechanics, and you can drive ground vehicles and fly helicopters and build bases), and one time, without me even really bringing up any issue at all, this one guy who at one point claimed to be working in the arms industry suddenly had this urge to start having a personal conversation with me about something vague, and talked about causing attention like ripples in the water and other weird stuff, making me wonder now if playing on that one server flagged my other co-players in some way. And later, when this guy with what I thought was a Californian accent (obviously a foreigner) sneaks up on me in this local park and says to me "Don't be scared!" as he passes by on his skateboard, I start to wonder if I ought to get a little paranoid or not.

In the proverbial "perfect world", I am sure I wouldn't be bothered by relying on others for my security, but as it stands today, there is literally nobody to trust the way I see it. Not the local government, certainly not foreign governments, not my browser maker, not even technologists that opine on the matter of the "internet of things", and not all the people that actually work with the design and implementation of anything to do with computers and/or networking and standards. I listened to the US Congress having a hearing not too long ago about their supposed claims of not being able to read this one particular mobile phone in a criminal investigation (iirc, after this show and spectacle in that hearing, it later turned out that a company managed to copy the content for law enforcement), and seeing how a senior Apple representative basically happily bent over and acknowledged the suggestion of discussing the matter further with the committee after the hearing to help out, for me, just made any public statements from Apple about how they care about people's privacy a moot point. Ofc, it should be pointed out that I don't own an Apple product. I don't even own a smartphone, as I have the impression that the new phones aren't very good security-wise, and they seem to incorporate various features that act like streaming user telemetry, which imo would basically be at odds with one's privacy needs.

I am also the kind of guy that repeatedly points out to others that people's notion of 'privacy' tends to be misunderstood. It ought to be obvious that the matter at hand is foremost one's privacy needs, and not 'a right' as such, which in any case would certainly be limited by the merit of making a definition of privacy, or just by how the mere expectation of privacy is contested, by simply disallowing expectation of privacy in some arbitrary way.

-

#87 Reply

Posted by

bd139

on 04 Jan, 2018 13:24

-

I can't argue with you. It's the same opinion here.

I work on the grey man principle. Cut your life in two. You have the public life and the private life. The public life is in line with expectations. Your private life is offline, entirely.

You will see me mentioning various things like DaveCAD (pen+paper) and using lots of old rancid analogue equipment. This is done not wholly because I enjoy it, which fortunately I do, but because I am so close to how things really work that I am scared of it. There needs to be a backup plan away from "network dependency".

-

#88 Reply

Posted by

Mr. Scram

on 04 Jan, 2018 13:27

-

I can't argue with you. It's the same opinion here.

I work on the grey man principle. Cut your life in two. You have the public life and the private life. The public life is in line with expectations. Your private life is offline, entirely.

You will see me mentioning various things like DaveCAD (pen+paper) and using lots of old rancid analogue equipment. This is done not wholly because I enjoy it, which fortunately I do, but because I am so close to how things really work that I am scared of it. There needs to be a backup plan away from "network dependency".

There is no backup plan. Even if you arrange something, others will forcefully take it from you once it becomes of value.

-

#89 Reply

Posted by

Decoman

on 04 Jan, 2018 13:33

-

I think corporations would be the first to be screwed on a general basis.

So I think it makes sense that if you run an important business and have proprietary data to be kept secret, having no operational security would be bad: running a more or less open computer network (or having bad computer security practices in general, allowing phishing attacks and the like), allowing people to just walk around the premises, or even inside your home, or hiring people randomly with no background checks at all.

I now am reminded of how thieves will steal the entire safe, if the safe is not nailed down.

It has been said though that locks are only there to slow down trespassers, and not to really prevent entry/theft.

-

#90 Reply

Posted by

bd139

on 04 Jan, 2018 13:38

-

Yes that's the biggest concern for me as well.

I am developing an exit strategy at the moment. I don't want to be around the gigantic turd if it goes up in flames.

-

#91 Reply

Posted by

BravoV

on 04 Jan, 2018 13:44

-

-

#92 Reply

Posted by

Decoman

on 04 Jan, 2018 13:51

-

Its on CNN -> http://money.cnn.com/2018/01/03/technology/computer-chip-flaw-security/index.html

The article states that "Flaws in chips are unusual." I am no expert, but I suspect that this statement is not true as a more objective statement. I've also read that there is a real risk of (any) computer chip being vulnerable to it being doped in a subtle way by an advanced adversary for further manipulating a chip in use, in desired ways.

-

#93 Reply

Posted by

Mr. Scram

on 04 Jan, 2018 13:56

-

I think corporations would be the first to be screwed on a general basis.

So I think it makes sense that if you run an important business and have proprietary data to be kept secret, having no operational security would be bad: running a more or less open computer network (or having bad computer security practices in general, allowing phishing attacks and the like), allowing people to just walk around the premises, or even inside your home, or hiring people randomly with no background checks at all.

I now am reminded of how thieves will steal the entire safe, if the safe is not nailed down.

It has been said though that locks are only there to slow down trespassers, and not to really prevent entry/theft.

We know this to be true when it comes to computers too. Any adversary motivated enough will find a way to gain access. With enough mud thrown, something is bound to stick. You can only make yourself a less interesting target and more painful to hit.

-

#94 Reply

Posted by

Decoman

on 04 Jan, 2018 14:47

-

One thing I've learned about computers, is that it does not matter if the crypto is good, if the implementation is bad. And so, then things get really complicated, and a single wrong character in some piece of code somewhere, can lead to what is called a 'catastrophic failure' with regard to having some expected security.

An important aspect of computer security is probably how allowing physical access to an adversary makes having security more like an impossibility, as the risk of anyone tampering with physical hardware at some location is more like a feature, than a threat model.

-

#95 Reply

Posted by

Avacee

on 04 Jan, 2018 16:12

-

-

-

Doctor Who:

The trouble with computers, of course, is that they're very sophisticated idiots. They do exactly what you tell them at amazing speed, even if you order them to kill you. So if you do happen to change your mind, it's very difficult to stop them obeying the original order, but... not impossible.

-

#97 Reply

Posted by

SaabFAN

on 04 Jan, 2018 18:14

-

Doctor Who:

The trouble with computers, of course, is that they're very sophisticated idiots. They do exactly what you tell them at amazing speed, even if you order them to kill you. So if you do happen to change your mind, it's very difficult to stop them obeying the original order, but... not impossible.

No problem with a TARDIS

Wasn't AMD working on something to replace the x86-Architecture for consumer-computers? I remember reading something like that one or two years back. Would be the perfect time to present the new CPU-Architecture now

-

#98 Reply

Posted by

Ampera

on 04 Jan, 2018 18:34

-

Doctor Who:

The trouble with computers, of course, is that they're very sophisticated idiots. They do exactly what you tell them at amazing speed, even if you order them to kill you. So if you do happen to change your mind, it's very difficult to stop them obeying the original order, but... not impossible.

No problem with a TARDIS

Wasn't AMD working on something to replace the x86-Architecture for consumer-computers? I remember reading something like that one or two years back. Would be the perfect time to present the new CPU-Architecture now

There is so much going on right now in the computing world. New architectures are ALWAYS a great idea. Replacing what everybody is using with a better technology is definitely attractive, but the issue is not only what, but how do we get people to drop their over 35 years of software support on a single platform for something else? Who is going to be able to make enough of a statement for everybody to fight against everybody who WILL want to keep the x86 battleship tanking?

At the moment, there is no consumer oriented processing platform with the same power and app support as x86. ARM has a lot of app support, and POWER has very similar, no pun intended, power, but they just don't mix. I recall watching a computer chronicles episode where they were talking about DEC Alpha, MIPS, and PowerPC machines taking the stage, and asking if the market is going to expand towards them. (It was the episode about the original Pentium if you want to see it) About 25 years later, DEC Alpha is completely dead, MIPS is hard to come by, and PowerPC is completely dead with POWER being resigned to servers and supercomputing tasks.

There have been designs that fix so many problems with x86. Heck, just starting over with x86 and re-implementing a lot of stuff would make the platform WAY better, but the reason why everybody uses x86, and the reason why I can still run the first version of PC-DOS on a Threadripper is because of backwards compatibility with application code. As more and more code is written for x86, we sink deeper into why nobody will change.

-

#99 Reply

Posted by

JoeN

on 04 Jan, 2018 19:24

-

You can use NoScript and leave Javascript turned on for certain sites. I don't think Youtube is going to send you anything malicious.

-

#100 Reply

Posted by

tszaboo

on 04 Jan, 2018 20:59

-

https://www.techpowerup.com/240273/intel-aware-of-cpu-flaws-before-ceo-brian-krzanich-planned-usd-24m-stock-sale

Intel CEO Brian Krzanich sold the maximum amount of shares in the company he could, keeping only the mandatory 250,000 minimum shares that come with his position at Intel. In total, Brian Krzanich's sold shares totaled 245,743 shares of stock he owned outright, and 644,135 shares he got from exercising his options. So, the man sold around 80% of his Intel shares while the company (and he himself, surely) knew the flaw would become public knowledge soon enough.

Sounds like insider trading to me.

-

#101 Reply

Posted by

David Hess

on 05 Jan, 2018 00:17

-

But AMD CPUs have this Cortex A5 management unit running inside, and since it's part of the security subsystem, I assume it has a higher security clearance. What if the hacker can inject some bad code pieces into the ARM firmware, use them to attack the ARM, and then use the ARM to attack the Zen cores?

This would be a big deal but has nothing to do with the exploits being discussed.

-

#102 Reply

Posted by

dr.diesel

on 05 Jan, 2018 01:09

-

-

#103 Reply

Posted by

Jeroen3

on 05 Jan, 2018 06:47

-

It works... Intel i7-4710MQ

-

#104 Reply

Posted by

Mr. Scram

on 05 Jan, 2018 07:39

-

One thing I've learned about computers, is that it does not matter if the crypto is good, if the implementation is bad. And so, then things get really complicated, and a single wrong character in some piece of code somewhere, can lead to what is called a 'catastrophic failure' with regard to having some expected security.

An important aspect of computer security is probably how allowing physical access to an adversary makes having security more like an impossibility, as the risk of anyone tampering with physical hardware at some location is more like a feature, than a threat model.

Again, like in normal life if they want you, they have you. Obviously, there are many parties out there that collect vast amounts of zero days to use against anyone they please. However, the reality is most of us aren't important enough for zero days. Those are expensive and relatively rare and reserved for state level chess, or as the basis for a large criminal attack. There's bound to be some application or even libraries on your computer you haven't updated and that might be enough. If you somehow dodged that bullet in the most unlikely fashion, there's still social engineering. There are attacks that can catch even very careful people out and if they don't, the customer service of all the services you use aren't so well behaved. You can do everything right and still suffer from someone else's mistakes. There are a couple of well known cases where this happened.

The uncomfortable truth is that when your time has come, you're done. Of course, this applies to regular life too and people prefer to deny that too.

-

#105 Reply

Posted by

Mr. Scram

on 05 Jan, 2018 08:08

-

You can use NoScript and leave Javascript turned on for certain sites. I don't think Youtube is going to send you anything malicious.

They won't risk the fallout from doing so in this case, but I don't put it beyond them to not only index your behaviour when you use their software, but your behaviour elsewhere as well. Like a Facebook button, except it's not just your browsing behaviour, but everything you do on your computer. I realize this could be considered tin foil hatty, but it's been shown again and again that companies will overstep boundaries until the law tells them they can't, and even try to get away with as much as they can.

-

#106 Reply

Posted by

bd139

on 05 Jan, 2018 08:09

-

Indeed. It’s the “better to ask for forgiveness than permission” argument. Doesn’t wash when EU GDPR kicks in. Seriously large damaging fines for pulling that shit.

-

#107 Reply

Posted by

Mr. Scram

on 05 Jan, 2018 08:37

-

Indeed. It’s the “better to ask for forgiveness than permission” argument. Doesn’t wash when EU GDPR kicks in. Seriously large damaging fines for pulling that shit.

The GDPR seems a bit overreaching in some areas, but considering the things that have been going on that might just be what's needed. I just hope it isn't used to slap regular IT companies around, while the big parties dance between the raindrops with impunity.

-

#108 Reply

Posted by

bd139

on 05 Jan, 2018 08:38

-

It's about shafting the big guys and the finance sector. We're having to move a lot of mountains to make it work.

Looking at 17% loss on everything now with the patches:

https://lkml.org/lkml/2018/1/3/281

-

-

I hope the hell POWER wins some fans out of this.

Not sure if anyone caught this, still reading through the other 4 pages...

https://access.redhat.com/security/vulnerabilities/speculativeexecution

PPC is out too. I wouldn't be surprised if newer SPARC was also out since they also do branch prediction and/or out-of-order execution, but c'mon... who owns modern Oracle hardware?

-

#110 Reply

Posted by

bd139

on 05 Jan, 2018 11:38

-

Ah bugger. I had some hopes for POWER.

This dude is still OK!

-

-

Looking at 17% loss on everything now with the patches: https://lkml.org/lkml/2018/1/3/281

Yep. "The impact of this will vary depending on the workload. Every time a program makes a call into the kernel—to read from disk, to send data to the network, to open a file, and so on—that call will be a little more expensive, since it will force the TLB to be flushed and the real kernel page table to be loaded. Programs that don't use the kernel much might see a hit of perhaps 2-3 percent—there's still some overhead because the kernel always has to run occasionally, to handle things like multitasking.

But workloads that call into the kernel a ton will see much greater performance drop off. In a benchmark, a program that does virtually nothing other than call into the kernel saw its performance drop by about 50 percent; in other words, each call into the kernel took twice as long with the patch than it did without. Benchmarks that use Linux's loopback networking also see a big hit, such as 17 percent in this Postgres benchmark. Real database workloads using real networking should see lower impact, because with real networks, the overhead of calling into the kernel tends to be dominated by the overhead of using the actual network"
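The numbers in that quote hang together under a very simple model. This is my own sketch, not anything from the article or the patch: assume a fraction f of the original runtime is spent crossing into and out of the kernel, and that the patch multiplies the cost of that part by some factor (2 here, matching the "twice as long" figure).

```python
def slowdown(kernel_entry_fraction, entry_cost_factor=2.0):
    """Patched runtime relative to the original runtime.

    kernel_entry_fraction: share of the original runtime spent on kernel
    entry/exit (assumed model parameter).
    entry_cost_factor: how much more expensive each entry/exit becomes.
    """
    f = kernel_entry_fraction
    return (1.0 - f) + f * entry_cost_factor


def throughput_loss(kernel_entry_fraction, entry_cost_factor=2.0):
    """Fraction of throughput lost after patching."""
    return 1.0 - 1.0 / slowdown(kernel_entry_fraction, entry_cost_factor)


def fraction_for_loss(loss, entry_cost_factor=2.0):
    """Invert the model: which kernel-entry share gives this throughput loss?"""
    return (1.0 / (1.0 - loss) - 1.0) / (entry_cost_factor - 1.0)


if __name__ == "__main__":
    for f in (0.03, 0.2, 1.0):
        print(f"f = {f:.2f}: throughput loss {throughput_loss(f):.1%}")
    print(f"17% loss implies f of about {fraction_for_loss(0.17):.2f}")
```

Under those assumptions a program that does nothing but syscalls loses half its throughput, one that spends about 3% of its time on kernel crossings loses about 3%, and the 17% Postgres figure would correspond to roughly a fifth of the runtime going on kernel crossings.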

I wonder if the i5/i7 in a MacBookPro6,1 (running Snow Leopard) is affected by this? Or does this only happen on newer CPUs?

-

#112 Reply

Posted by

bd139

on 05 Jan, 2018 12:28

-

Snow leopard isn't patched. Only Sierra and High Sierra are.

I just moved two postgres and two nginx nodes over to new kernels. Here we go
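If anyone wants a crude before/after number of their own, something like the sketch below could be run on the old and new kernels. It is only a rough microbenchmark of my own devising: `os.getppid` is used as a stand-in for "a cheap call into the kernel", the iteration count is arbitrary, and Python's interpreter overhead is large enough that only the difference between the two figures means much.

```python
import os
import time


def time_per_call_ns(func, iterations=200_000):
    """Average wall-clock nanoseconds per call of func over a tight loop."""
    start = time.perf_counter_ns()
    for _ in range(iterations):
        func()
    return (time.perf_counter_ns() - start) / iterations


def noop():
    """Baseline: a Python call that never enters the kernel."""
    return None


if __name__ == "__main__":
    baseline_ns = time_per_call_ns(noop)
    syscall_ns = time_per_call_ns(os.getppid)
    print(f"baseline call: {baseline_ns:.0f} ns")
    print(f"os.getppid():  {syscall_ns:.0f} ns")
    print(f"difference:    {syscall_ns - baseline_ns:.0f} ns per kernel round trip (approx.)")
```

Run it a few times on each kernel and compare the difference line; real workloads like postgres or nginx will of course behave differently from a loop like this.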

-

#113 Reply

Posted by

Mr. Scram

on 05 Jan, 2018 12:37

-

Yep. "The impact of this will vary depending on the workload. Every time a program makes a call into the kernel—to read from disk, to send data to the network, to open a file, and so on—that call will be a little more expensive, since it will force the TLB to be flushed and the real kernel page table to be loaded. Programs that don't use the kernel much might see a hit of perhaps 2-3 percent—there's still some overhead because the kernel always has to run occasionally, to handle things like multitasking.

But workloads that call into the kernel a ton will see much greater performance drop off. In a benchmark, a program that does virtually nothing other than call into the kernel saw its performance drop by about 50 percent; in other words, each call into the kernel took twice as long with the patch than it did without. Benchmarks that use Linux's loopback networking also see a big hit, such as 17 percent in this Postgres benchmark. Real database workloads using real networking should see lower impact, because with real networks, the overhead of calling into the kernel tends to be dominated by the overhead of using the actual network"

I wonder if the i5/i7 in a MacBookPro6,1 (running Snow Leopard) is affected by this? Or does this only happen on newer CPUs?

Any except the most ancient Intel CPU is affected by this. Whatever the case, unless you have a reason to think you're not affected it's likely you are.

-

-

I wonder if the i5/i7 in a MacBookPro6,1 (running Snow Leopard) is affected by this? Or does this only happen on newer CPUs?

Any except the most ancient Intel CPU is affected by this. Whatever the case, unless you have a reason to think you're not affected it's likely you are.

Snow leopard isn't patched. Only Sierra and High Sierra are.

Ufff. In practice, then, nearly all the PCs in the world are vulnerable? And I'm going to have to abandon my beloved Snow Leopard? Shit.

-

#115 Reply

Posted by

nfmax

on 05 Jan, 2018 14:08

-

Snow leopard isn't patched. Only Sierra and High Sierra are.

Is Sierra patched already? I haven't seen that stated anywhere else yet. I just moved my 'testbed' MBP to 10.13.2 so I can use email/web and shut down my main iMac until the dust settles a bit. But the older Macs that won't run Sierra? Are they just scrap now? I have two PPC Macs which run my music library and drive the scanner that HP couldn't be bothered to support on Lion (!). I'll probably have to set up a separate airgapped cabled network for them now. To say nothing of my father's MBP which is too old for Sierra.

You would have to be mad to buy a computer or phone of any type now or for the next two or three years, without extreme need - although I gather Raspberry Pis of all versions are not affected.

-

#116 Reply

Posted by

Kalvin

on 05 Jan, 2018 14:08

-

Does this also affect virtualized OSs, like a Linux running in Virtualbox, ie. can the Linux running inside the Virtualbox running on Windows 10 host compromise the Windows 10 host?

-

#117 Reply

Posted by

nfmax

on 05 Jan, 2018 14:12

-

Does this also affect virtualized OSs, like a Linux running in Virtualbox, ie. can the Linux running inside the Virtualbox running on Windows 10 host compromise the Windows 10 host?

Yes

Basically the hardware protection between privilege levels has been demonstrated not to work.

-

#118 Reply

Posted by

wraper

on 05 Jan, 2018 15:05

-

Does this also affect virtualized OSs, like a Linux running in Virtualbox, ie. can the Linux running inside the Virtualbox running on Windows 10 host compromise the Windows 10 host?

Yep it does.

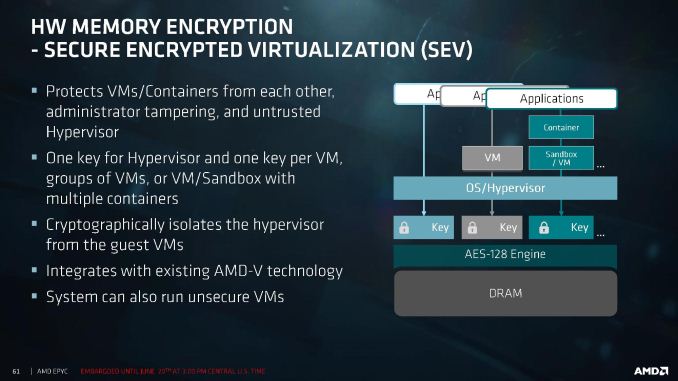

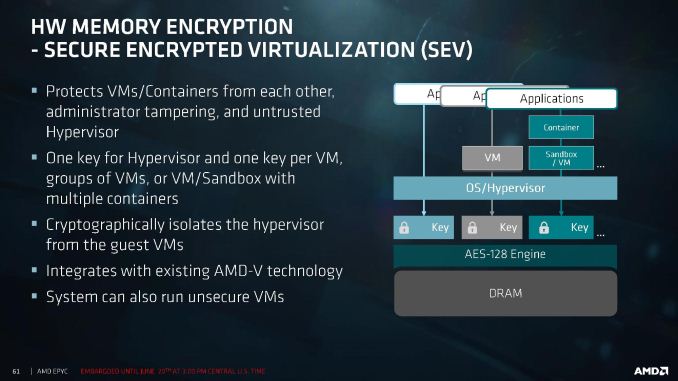

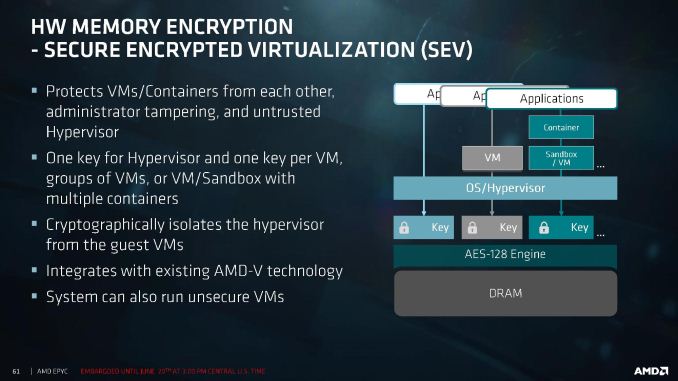

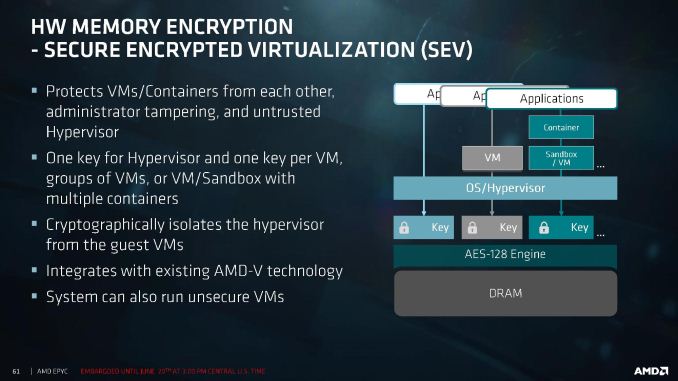

On a side note, AMD introduced RAM encryption in EPYC which basically makes it immune to this.

-

#119 Reply

Posted by

rrinker

on 05 Jan, 2018 15:06

-

Does this also affect virtualized OSs, like a Linux running in Virtualbox, ie. can the Linux running inside the Virtualbox running on Windows 10 host compromise the Windows 10 host?

That is the BIGGEST danger of this and why Microsoft rushed out patching their host systems for their Azure cloud environment ahead of their original planned date.

Mostly without incident but we've had a few customers with issues where things didn't come up cleanly after the host was restarted under their VM.

-

#120 Reply

Posted by

bd139

on 05 Jan, 2018 15:11

-

Yep it does.

On a side note, AMD introduced RAM encryption in EPYC which basically makes it immune to this.

Until someone works out how to read the keys with it

-

#121 Reply

Posted by

Mr. Scram

on 05 Jan, 2018 15:11

-

Does this also affect virtualized OSs, like a Linux running in Virtualbox, ie. can the Linux running inside the Virtualbox running on Windows 10 host compromise the Windows 10 host?

Yep it does.

On a side note, AMD introduced RAM encryption in EPYC which basically makes it immune to this.

If that works properly and sufficiently it might just net them a huge piece of the pie.

-

#122 Reply

Posted by

BravoV

on 05 Jan, 2018 15:21

-

If that works properly and sufficiently it might just net them a huge piece of the pie.

Yep, I have a friend who works at a high level in a big corporation. He just told me that, for their company's major server refresh program that is due this year, upper management has decided to rule out Intel-based servers, as they're going to issue a major purchase order this Q1.

I can imagine similar scenes are happening, and will keep happening at least through Q1 and Q2 this year, at big companies throughout the world.

Looks like 2018 is a good year for AMD's CEO Lisa Su at least.

-

#123 Reply

Posted by

dr.diesel

on 05 Jan, 2018 15:32

-

I plan to do the same, AMD is now competitive enough to suit my/customers needs, but also, Intel needs more competition.

-

#124 Reply

Posted by

cdev

on 05 Jan, 2018 15:39

-

-

#125 Reply

Posted by

Ampera

on 05 Jan, 2018 15:44

-

My next main machine will be AMD. Ryzen if there isn't something better out there.

-

-

Spectre & Meltdown - Computerphile

-

#127 Reply

Posted by

wraper

on 05 Jan, 2018 16:03

-

My next main machine will be AMD. Ryzen if there isn't something better out there.

Mine already is, and I use ECC RAM (ECC not officially supported but not locked out either).

-

#128 Reply

Posted by

cdev

on 05 Jan, 2018 16:05

-

The times they are a changin'

-

#129 Reply

Posted by

Mr. Scram

on 05 Jan, 2018 16:10

-

I plan to do the same, AMD is now competitive enough to suit my/customers needs, but also, Intel needs more competition.

Even without security deliberations, AMD offers a good product at a more than reasonable price. Unlike the previous generations, this seems to be a good choice.

-

#130 Reply

Posted by

bd139

on 05 Jan, 2018 16:13

-

Yeah even I'm looking at a Ryzen based machine to replace my HP Z620. Less power consumption, similar performance, quieter and smaller.

Edit: and not Intel

-

#131 Reply

Posted by

Mr. Scram

on 05 Jan, 2018 16:17

-

Yeah even I'm looking at a Ryzen based machine to replace my HP Z620. Less power consumption, similar performance, quieter and smaller.

Edit: and not Intel

Dat workstation though. Those HP ones tickle me the right way. What processor configuration does yours have?

-

#132 Reply

Posted by

RoGeorge

on 05 Jan, 2018 16:20

-

Spectre & Meltdown - Computerphile

All was clear until the last step.

How exactly are the speculative results extracted?

How come that the speculated values can still leave side effects behind, even after discarding the results?

What are those side effects, and how are they used to access a mispredicted and discarded calculation?

-

#133 Reply

Posted by

BravoV

on 05 Jan, 2018 16:39

-

My next main machine will be AMD. Ryzen if there isn't something better out there.

Damn Intel, I already pulled the trigger on a Ryzen board just now; it's way too early for my budget timing.

That's because our local mobo distributors are really nasty and well known for hiking prices on occasions like this. Also, local stock of mid-range and high-end motherboards is starting to dry up, as distributors usually don't stockpile as many of them as the low-end mainstream ones, and the next batch of imports may take months to arrive.

Just ordered Asrock X370 Taichi, hopefully this is enough for now.

-

#134 Reply

Posted by

Kalvin

on 05 Jan, 2018 16:46

-

How exactly are the speculative results extracted?

How come that the speculated values can still leave side effects behind, even after discarding the results?

What are those side effects, and how are they used to access a mispredicted and discarded calculation?

If I understood the video correctly, the exploits take advantage of [timing] information about whether or not some [injected] value has been cached by the CPU, which happens because modern CPUs execute instructions speculatively. You just need to make the CPU fetch some known data from memory and use the available high-resolution on-chip timers to measure how long that fetch takes. If the execution time is "fast", the value was cached; if it is "slow", the value was not in the cache. From this direct timing information one can indirectly extract the information wanted for the exploit.
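To make the fast/slow idea concrete, here is a toy simulation in Python. It is only a sketch: the cycle counts, the `ToyCache` class, and the 256-line probe layout are assumptions chosen for illustration (the layout is borrowed from the general flush-and-reload idea), and nothing here touches real hardware or real timers.

```python
# Toy model only: latencies are illustrative assumptions, not measurements.
HIT_CYCLES = 40     # assumed latency when the line is already cached
MISS_CYCLES = 300   # assumed latency when the line comes from main memory
THRESHOLD = 150     # anything faster than this is classified as "cached"


class ToyCache:
    """Simulated cache that only remembers which line indices are resident."""

    def __init__(self):
        self.lines = set()

    def flush(self):
        """Evict everything, so every later access starts out slow."""
        self.lines.clear()

    def timed_load(self, line):
        """Load a line and return the simulated access time in cycles."""
        cycles = HIT_CYCLES if line in self.lines else MISS_CYCLES
        self.lines.add(line)  # any load leaves the line in the cache
        return cycles


def make_victim(secret):
    """Stand-in for discarded speculative code that touched line `secret`."""
    def victim(cache):
        cache.timed_load(secret)  # result is thrown away, cache state is not
    return victim


def recover_byte(cache, victim):
    """Flush, let the victim run, then time one load per possible byte value."""
    cache.flush()
    victim(cache)
    fast = [line for line in range(256) if cache.timed_load(line) < THRESHOLD]
    # Exactly one fast line means we learned which value was touched.
    return fast[0] if len(fast) == 1 else None


print(hex(recover_byte(ToyCache(), make_victim(0x5A))))  # prints 0x5a
```

The attacker never reads the secret directly; the only observation is which of the 256 timed loads came back fast.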

-

#135 Reply

Posted by

Cerebus

on 05 Jan, 2018 16:46

-

All was clear until the last step.

How exactly are the speculative results extracted?

How come that the speculated values can still leave side effects behind, even after discarding the results?

What are those side effects, and how are they used to access a mispredicted and discarded calculation?

Cache timings. The speculative fetches leave the fetched data in the cache(s). By requesting something from memory and timing the result, you know whether it was cached or not, so you can probe the cache to see whether it holds something or not, and hence whether it was the target of a speculative fetch.

Mutating that ability into reading data requires a whole layer more and some knowledge of the data you're hunting that allows you to convert 'it was in the cache' to 'its value is x'. The obvious method is to conditionally fetch some forbidden data based on its content; this will fault, but not before it has speculatively executed the condition, which would control the fetch into cache, which gives you knowledge of whether the condition was met or not.

It's pretty easy to see how you could turn that into a binary tree that chases down the current value of forbidden_location.
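As a rough sketch of that binary search, here is a toy simulation in Python. Everything in it is hypothetical: `ToyMachine` and its methods are made-up names standing in for the faulting conditional fetch and the cache-timing probe, and no real hardware behaviour is exercised.

```python
class ToyMachine:
    """Simulated CPU state: one hidden byte and one probe cache line."""

    def __init__(self, secret):
        self._secret = secret        # stands in for forbidden_location
        self._probe_cached = False

    def flush_probe(self):
        """Evict the probe line so the next timing check starts clean."""
        self._probe_cached = False

    def faulting_fetch_if_at_least(self, guess):
        """Model the forbidden access: the condition is evaluated and the
        probe line is fetched speculatively, then the access faults and the
        architectural result is discarded. Only the cache state survives."""
        if self._secret >= guess:
            self._probe_cached = True

    def probe_is_cached(self):
        """Stand-in for timing a load of the probe line (fast means True)."""
        return self._probe_cached


def leak_byte(machine):
    """Binary search on the hidden byte using only the cache side effect."""
    lo, hi = 0, 255
    while lo < hi:
        mid = (lo + hi + 1) // 2
        machine.flush_probe()
        machine.faulting_fetch_if_at_least(mid)
        if machine.probe_is_cached():
            lo = mid          # condition held: secret >= mid
        else:
            hi = mid - 1      # condition failed: secret < mid
    return lo


print(leak_byte(ToyMachine(0xC3)))  # prints 195
```

Each round halves the candidate range, so in this model a byte falls out after eight condition checks.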

-

#136 Reply

Posted by

Decoman

on 05 Jan, 2018 16:53

-

Seeing all these news articles on the net now about this issue, primarily the two critical vulnerabilities nicknamed 'Spectre' and 'Meltdown', I can't help but think how helpless the world is, because at the end of the day the news outlets seem to me to be more like entertainment than journalism. Otherwise I would have expected computer security to be taken more seriously throughout the whole year, at least on some editorial level, so that there aren't just the occasional horrific events popping up.

And then I think that once reporting of computer security issues becomes this shallow, more of a public spectacle, it also makes the journalism that is already there non-objective: a journalist makes general statements that maybe seem OK to the journalist there and then but, all things considered, are erroneous, and the simplifications and generalizations end up as poignant messages that dull the broader range of issues with anything technical. I suppose one type of flawed critical thinking would be to conclude that something in particular is flawed (like a known vulnerability in a computer chip), when perhaps it is the underlying feature(s) that allow catastrophic failures in computer security to exist in the first place. A parallel to this idea of there being a horrible set of features in the first place would be Adobe's Flash platform, which afaik is badly tarnished by what I understand to be ever-recurring 'remote execution vulnerabilities' in the code of the Flash plug-in.

So with regard to the Flash plugin.. some time back, I followed the advice of experts and finally un-installed Flash for good.

I wish anything related to computer software and hardware, was better compartmentalized, and having a perfectly good foundation to have computers running off that. And Linux wouldn't be that kind of software for me, which iirc, is known for working with usability, rather than security. When I one time had an interest in trying out a few Linux distros, the people on IRC seemed to be more like fanboys instead of sensible people, and sort of patting themselves on the back for knowing how to install stuff and set file flags, without really knowing how things work in the kernel. And with Linus living in USA, I feel I can't even trust the management, but that is just me. It didn't help when Linus some years ago was said to have sort of joked in relation to a serious question directed at him, in which it was asked something about if he had ever been approached by the US government to solicit cooperation from him or something like that, and then the man had said 'no', but nodded 'yes'. Not something to joke about.

-

#137 Reply

Posted by

Decoman

on 05 Jan, 2018 16:59

-

As a sort of off-topic comment of sorts, but related to computer security, I can highly recommend watching the yearly talks at the

'RSA conference', called