-

I finished reading section 8 of the Technical Report, which is the material germane to this thread. It is a good clear explanation of the problem/approaches and I found it very useful.

However, there is something missing from it and parenthetically from all of the material I have read so far. Specifically, how do you use the Allan variance (or its square root, the Allan deviation) once you have computed it? I imagine someone setting up a system might want to know if a particular oscillator is suitable for use in that system. For example, a hobbyist may wish to measure signal characteristics of some radio transmission system he is building. He wants to use a 10 MHz reference clock passed through a distribution amplifier to synchronize the instruments he is using to test his system.

For the sake of convenience, let's refer to the paper by Rutman & Walls (linked by GerryBags) instead of the NBS Monograph, since it contains everything we need and everybody has access to it.

There are (generally) two ways to view the stability of an oscillator -- in the frequency domain and in the time domain. In the frequency domain, one is concerned with the spectral densities of phase and frequency fluctuations and the resulting spectral density of the oscillator itself. In the time domain, one is concerned with variances, i.e. standard deviations. The utility of the Allan Variance is that it provides a link between these two domains. (See Table 1 of the cited paper.) In brief, the Allan Variance allows one to determine the dominant noise process of an oscillator on any given time scale. For example, if the (root) Allan Variance of an oscillator has 1/root(Tau) dependence over some time scale, it can be concluded that the dominant noise source on that time scale is white frequency noise. If the (root) Allan Variance of this same oscillator flattens out at longer times, it can be concluded that the dominant noise source is flicker frequency noise at longer times.

Quote: I understand from the reading that I have done that computing the traditional variance of fractional frequency data doesn't work because it doesn't converge as the sample size increases. That is one of the reasons Allan created his variance measure. But, with the traditional variance (actually its square root, the standard deviation), if you assume a gaussian distribution of the fractional frequency process, 99.7% of the values lie within a 3 sigma band around the mean. So, if the designer had a traditional variance to work with, he could look at the range of frequencies within the 3 sigma band and decide whether that sort of jitter was acceptable for testing his system.

You cannot make the assumption that the distribution is Gaussian and, therefore, you can't conclude much from the standard deviation of the frequency of an oscillator. In general, a traditional variance provides very little information about the underlying behavior of an oscillator. As an example, consider two 10 MHz oscillators monitored over some long time period. Oscillator 1 might have very low "jitter" but it drifts linearly about 10 Hz during the monitoring period. Oscillator 2 might not have any linear drift, but it has about 10 Hz of random jitter. Despite having very different characteristics, these oscillators will have nearly the same traditional variance. Their Allan Variances, however, will be very different.

Quote: So far, I have found nothing like this for the Allan variance. How do you use it in a practical situation?

I'm guessing a lot of people on this forum are familiar with GPS-disciplined oscillators, where a local oscillator is locked to a signal extracted from GPS satellites. The big advantage of these devices is the ability to impose the long term stability of the GPS (Cesium) clocks onto a less expensive, local clock. But, how does one marry the local clock to the GPS signal? In other words, how quickly or how tightly does one lock the local oscillator onto the GPS signal? The answer to that question depends on the characteristics of both the local oscillator and the GPS signal, and the Allan Variance gives you those characteristics. For instance, the Allan Variance of a simple TCXO might indicate it should be locked onto the GPS signal with a short time constant, whereas the Allan Variance of a Rb oscillator might indicate that it should be locked onto the GPS signal with a relatively long time constant.
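The two-oscillator comparison above (linear drift versus random jitter) can be illustrated numerically. What follows is only a rough sketch under stated assumptions: the data are simulated rather than measured, the record length and noise levels are made up, and `allan_deviation` is a hypothetical helper that implements the overlapping Allan deviation of fractional-frequency samples using only the Python standard library.

```python
import math
import random
import statistics


def allan_deviation(y, m):
    """Overlapping Allan deviation of fractional-frequency samples y
    for an averaging time of m sample intervals."""
    n = len(y)
    if m < 1 or 2 * m > n:
        raise ValueError("need 1 <= m <= len(y) / 2")
    # Prefix sums let us form every m-sample average cheaply.
    csum = [0.0]
    for v in y:
        csum.append(csum[-1] + v)
    avg = [(csum[i + m] - csum[i]) / m for i in range(n - m + 1)]
    # Differences between averages that are m samples apart.
    diffs = [avg[i + m] - avg[i] for i in range(n - 2 * m + 1)]
    return math.sqrt(sum(d * d for d in diffs) / (2 * len(diffs)))


# Simulated data (assumption): 10,000 samples of fractional frequency.
rng = random.Random(1)
N = 10_000
SPAN = 1e-6  # 10 Hz at 10 MHz, as a fractional frequency

# Oscillator 1: linear drift across SPAN plus very small white noise.
osc1 = [SPAN * (i / (N - 1) - 0.5) + rng.gauss(0.0, 1e-9) for i in range(N)]
# Oscillator 2: no drift, white noise with the same standard deviation
# as a uniform ramp of width SPAN.
osc2 = [rng.gauss(0.0, SPAN / math.sqrt(12)) for _ in range(N)]

print("std dev:", statistics.pstdev(osc1), statistics.pstdev(osc2))
for m in (1, 10, 100, 1000):
    print(m, allan_deviation(osc1, m), allan_deviation(osc2, m))
```

In this simulation the two standard deviations come out nearly equal, while the Allan deviation of the drifting oscillator grows with averaging time and that of the noisy oscillator shrinks. Real oscillators will not separate this cleanly.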

-

My "I read it on the internet" understanding of the Allan variance is it simply is treating the variance as a random variable. So one has then the mean and variance of the variance. One can take the variance and compute the FFT to look for periodicities in the variance. I *think* that would be the cyclostationarity, but I've never encountered that term before. So I'm just guessing.

I rather suspect that this is a lexical minefield where different specialties define slightly different meaning and scaling conventions to the same words.

No, you missed the mark on this one.

-

https://fenix.tecnico.ulisboa.pt/downloadFile/3779572188799/Tn296.pdf "Characterization of Clocks & Oscillators"

When I looked at this, I only examined the table of contents to see if it might be interesting (which I thought it was). However, the URL supplied only the table of contents. The full report is here:

"Characterization of Clocks & Oscillators"

-

I'm guessing a lot of people on this forum are familiar with GPS-disciplined oscillators, where a local oscillator is locked to a signal extracted from GPS satellites. The big advantage of these devices is the ability to impose the long term stability of the GPS (Cesium) clocks onto a less expensive, local clock. But, how does one marry the local clock to the GPS signal? In other words, how quickly or how tightly does one lock the local oscillator onto the GPS signal? The answer to that question depends on the characteristics of both the local oscillator and the GPS signal, and the Allan Variance gives you those characteristics. For instance, the Allan Variance of a simple TCXO might indicate it should be locked onto the GPS signal with a short time constant, whereas the Allan Variance of a Rb oscillator might indicate that it should be locked onto the GPS signal with a relatively long time constant.

This is all very reasonable, but doesn't solve the problem I posed. Let me try again.

I am a hobbyist who is building some circuit or system. I want to test that system using different pieces of equipment (e.g., oscilloscope, spectrum analyzer, frequency counter) simultaneously. I have two oscillators I can use to synchronize the equipment (through a distribution amp), say a rubidium oscillator and an ocxo. Which one do I use? From just playing around with my own rubidium and ocxo oscillators, it appears to me that the rubidium has good long-term stability, but not so good short-term stability. On the other hand, the ocxo has good short-term stability and not as good long-term stability. This intuition is borne out by at least one study I have looked at (e.g., see slide 16 of this presentation).

What I need is information on the stability of these two oscillators that will help me choose which one to use. I am not designing oscillators, I am using them. Perhaps naively, I presumed that the stability measures now in use would provide information so I can make an informed choice. Did I presume incorrectly?

-

This is all very reasonable, but doesn't solve the problem I posed. Let me try again.

I am a hobbyist who is building some circuit or system. I want to test that system using different pieces of equipment (e.g., oscilloscope, spectrum analyzer, frequency counter) simultaneously. I have two oscillators I can use to synchronize the equipment (through a distribution amp), say a rubidium oscillator and an ocxo. Which one do I use? From just playing around with my own rubidium and ocxo oscillators, it appears to me that the rubidium has good long-term stability, but not so good short-term stability. On the other hand, the ocxo has good short-term stability and not as good long-term stability.

What I need is information on the stability of these two oscillators that will help me choose which one to use. I am not designing oscillators, I am using them. Perhaps naively, I presumed that the stability measures now in use would provide information so I can make an informed choice. Did I presume incorrectly?

You have the information. Does your system require better short-term or long-term stability? Only you can answer that question.

-

Exactly why do you need to go down this rabbit hole?

Why do you need such good drift accuracy?

-

@OP

What do you mean by stability? Amplitude, frequency or both?

What do you mean by short-term vs. long-term? ms vs s, min vs hours?

What's your metric for stability? Hz/s, standard deviation of frequency?

If I understand it, you have a choice of two oscillators that you actually have and want to select the most stable for your application.

If you are looking for changes of a few kHz over seconds vs. over hours (I have no realistic idea), then I'd do something along these lines:

1. If you can interrogate your frequency counter at a high enough rate (a few times a second), then just record an hour or two of readings and use something like Excel to calculate standard deviations (or some other suitable statistic) over blocks of, say, 1s, 2s, 5s, 10s, 20s, 50s, 100s, etc. And, of course, just plot the frequency.

2. Use another oscillator as a reference and then do demodulation (multiply) for both your oscs at the same time (to allow for reference drift). It would be a straightforward matter to compare the two demodulated signals statistically to see which is more stable according to your definition of it. I think a Fourier transform of each would help since the osc with the least line broadening would be your best bet.

I said earlier that I think digging into the whole stochastic process yada stuff is unnecessary. You can afford to be semi-quantitative about this and not purist. I stand by that.
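Suggestion 1 above could be done in a few lines of Python instead of Excel. This is a minimal sketch of one possible reading of it: the readings are averaged over consecutive blocks and the scatter of those block averages is reported for each block length. The function names, the 4 readings per second rate, and the simulated counter log are all assumptions made for illustration, not anything taken from a real instrument.

```python
import random
import statistics


def block_means(readings, samples_per_block):
    """Average consecutive, non-overlapping blocks of readings.
    A trailing partial block is discarded."""
    usable = len(readings) - len(readings) % samples_per_block
    return [
        statistics.fmean(readings[i:i + samples_per_block])
        for i in range(0, usable, samples_per_block)
    ]


def block_scatter(readings, rate_hz, block_seconds):
    """Standard deviation of the block averages for a given block length."""
    k = int(round(rate_hz * block_seconds))
    means = block_means(readings, k)
    if len(means) < 2:
        raise ValueError("record too short for this block length")
    return statistics.stdev(means)


# Simulated counter log (assumption): one hour at 4 readings per second,
# 10 MHz nominal, 10 mHz of white noise plus a slow linear drift.
rng = random.Random(7)
RATE_HZ = 4
log = [10e6 + rng.gauss(0.0, 0.01) + 1e-6 * i for i in range(3600 * RATE_HZ)]

for seconds in (1, 2, 5, 10, 20, 50, 100):
    print(f"{seconds:>4} s blocks: {block_scatter(log, RATE_HZ, seconds):.6f} Hz")
```

If the scatter keeps falling as the blocks get longer, averaging is still helping; if it levels off or rises, drift or slower noise has taken over at that time scale.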

-

This strikes me as an interesting application for a sparse L1 pursuit.

Construct a dictionary/wavelet basis which consists of windowed sinusoids which vary in phase, frequency and amplitude as a function of time. This amounts to constructing an A matrix with mostly zero elements which has at any window in time a set of possible solutions to be considered and then solving Ax=y using an L1 solver such as linear programming. If the solution vector x is sparse, then you have the optimal decomposition of the signal into the basis. If it's not sparse then you will grow old and die before you get an answer. While that can happen, it's quite rare. David Donoho and Emmanuel Candes have done a lot of work in this area. Mallat gives a brief review of some applications in "A Wavelet Tour of Signal Processing", 3rd ed.

In the context of oscillator performance, you can separate a sine wave which is phase shifted, from a sine wave whose frequency changes, from a sine wave whose amplitude changes and all combinations and permutations of those. That problem is NP-hard, and was considered unsolvable. But in 2004 Donoho proved that if a sparse L1 solution existed, it was the optimal L0 solution.

This is the major paper:

http://statweb.stanford.edu/~donoho/Reports/2004/l1l0EquivCorrected.pdf

The first 2 pages present the matter very clearly and succinctly. The math is the ugliest I've ever read. One of Donoho's proofs is 15 pages for a single theorem!

There is another aspect of it which I can't say how it fits in at the moment, compressive sensing by sampling at random intervals. This is an active area of research and the mathematics are rather painful to read. The gist of it is that if your samples are collected randomly aliasing does not take place and you only need about 10-20% of the number of samples required to meet the Nyquist criterion to achieve the same resolution. The ultimate example being the single pixel camera which substitutes a series of samples over time for spatial pixels. The monograph that I have is "A Mathematical Introduction to Compressive Sensing" by Foucart and Rauhut which appeared in 2013. Since then there have been others, but Foucart and Rauhut appeared just at the time I stumbled into the subject of sparse L1 pursuits.

http://statweb.stanford.edu/~donoho/Reports/2004/CompressedSensing091604.pdf

It's rather mind boggling.

-

This strikes me as an interesting application for a sparse L1 pursuit.

To a man with a hammer, everything looks like a nail ...

-

My "I read it on the internet" understanding of the Allan variance is it simply is treating the variance as a random variable. So one has then the mean and variance of the variance. One can take the variance and compute the FFT to look for periodicities in the variance. I *think* that would be the cyclostationarity, but I've never encountered that term before. So I'm just guessing.

I rather suspect that this is a lexical minefield where different specialties define slightly different meaning and scaling conventions to the same words.

No, you missed the mark on this one.

Which part? Perhaps you would be so kind as to explain in more detail. I looked at the referenced slide that introduces "cyclostationarity" though I'm not convinced that the cited deviations from stationarity are in any way cyclical. I should think that requires additional proof. But an oscillation modulated by other oscillations does not seem implausible. I can list many potential causes. Not the least of which is device heating due to the changing current.

-

This is all very reasonable, but doesn't solve the problem I posed. Let me try again.

I am a hobbyist who is building some circuit or system. I want to test that system using different pieces of equipment (e.g., oscilloscope, spectrum analyzer, frequency counter) simultaneously. I have two oscillators I can use to synchronize the equipment (through a distribution amp), say a rubidium oscillator and an ocxo. Which one do I use? From just playing around with my own rubidium and ocxo oscillators, it appears to me that the rubidium has good long-term stability, but not so good short-term stability. On the other hand, the ocxo has good short-term stability and not as good long-term stability.

What I need is information on the stability of these two oscillators that will help me choose which one to use. I am not designing oscillators, I am using them. Perhaps naively, I presumed that the stability measures now in use would provide information so I can make an informed choice. Did I presume incorrectly?

You have the information. Does your system require better short-term or long-term stability? Only you can answer that question.

You seem to follow the "just try it and see what happens" school of design. That's fair. A lot of engineering, perhaps most, is done that way and I won't criticize it. But I am curious about the precise differences between various hobbyist oscillators; specifically their stability. That is why I am doing this project.

In order to understand those differences I want to understand how established measures of oscillator stability relate to practical questions, such as "if I use this oscillator, what are the probable bounds of its jitter? Is it likely that the frequency of this particular oscillator will vary by 10 Hz, 100 Hz, 1 kHz over a 2 hour period (given some parameters such as temperature, power line ripple, ...)?" Without understanding how Allan variance relates to this question, why should I be interested in it?

-

I finished reading section 8 of the Technical Report, which is the material germane to this thread. It is a good clear explanation of the problem/approaches and I found it very useful. However, there is something missing from it and parenthetically from all of the material I have read so far. Specifically, how do you use the Allan variance (or its square root, the Allan deviation) once you have computed it?

Think of the ADEV plot the way you would a phase noise plot, if you're more familiar with traditional PLL theory. To determine the best loop bandwidth for a given PLL, one common approach is to overlay the frequency-normalized phase noise plots of the reference source and the VCO being controlled. The point where they cross is usually the best choice of loop bandwidth, in the absence of any further constraints on the problem. ADEV works in the time domain rather than the frequency domain, but the same basic principle still applies.

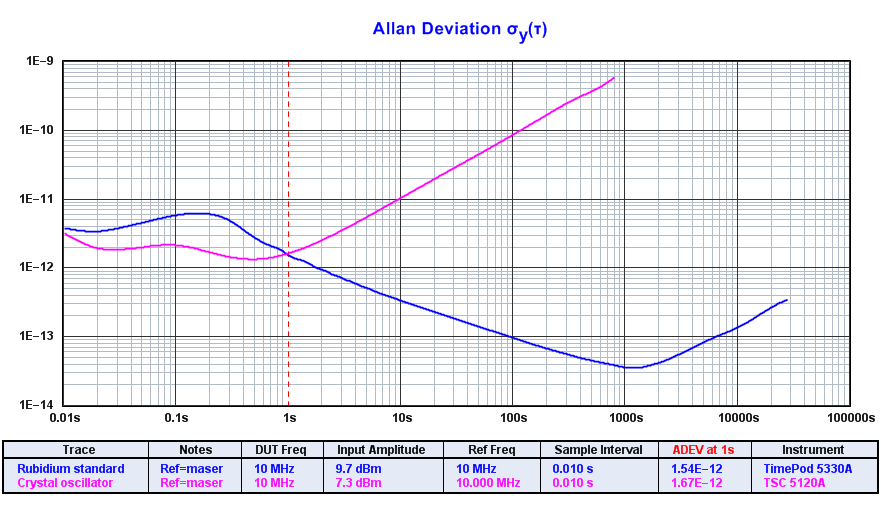

E.g., if you were looking to discipline a crystal oscillator with a rubidium standard, you might end up with a plot like this one. At taus below one second, the rubidium standard is noisier than the crystal oscillator. If you used a loop bandwidth much higher than 1 Hz, you would stabilize the crystal oscillator adequately but you would also lose its superior short-term noise performance. If you used a time constant much slower than that, though, there would be a big hump in the plot where the oscillator wanders around over intervals of a few seconds before being stabilized at longer taus. It will never be perfect, so your goal is to avoid corrupting the short-term performance while minimizing the hump. (You can see this optimization process at work in the plot of the rubidium standard by itself, in fact, since that's the exact problem its designers were faced with.)
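The crossover idea described above can be sketched in code. This is only an illustration under stated assumptions: `crossover_tau` is a hypothetical helper, the ADEV values below are made up and are not read from the plot, and the two curves are assumed to be tabulated at the same taus. The function interpolates linearly on log-log axes, which is exact only where both curves follow power laws between adjacent points.

```python
import math


def crossover_tau(taus, adev_a, adev_b):
    """Return the first tau at which two ADEV curves cross, using
    linear interpolation on log-log axes, or None if they never cross."""
    # Log-ratio of the two curves; a sign change marks a crossing.
    d = [math.log10(a) - math.log10(b) for a, b in zip(adev_a, adev_b)]
    for i in range(len(d) - 1):
        if d[i] == 0.0:
            return taus[i]
        if d[i] * d[i + 1] < 0:
            frac = d[i] / (d[i] - d[i + 1])
            log_lo = math.log10(taus[i])
            log_hi = math.log10(taus[i + 1])
            return 10 ** (log_lo + frac * (log_hi - log_lo))
    if d and d[-1] == 0.0:
        return taus[-1]
    return None


# Made-up example curves (assumption), in fractional frequency.
taus = [0.01, 0.1, 1.0, 10.0, 100.0]
crystal = [2e-12, 2e-12, 3e-12, 1e-11, 5e-11]   # good short term, wanders later
rubidium = [1e-10, 3e-11, 1e-11, 3e-12, 1e-12]  # noisy short term, stable later

tau_x = crossover_tau(taus, crystal, rubidium)
print("curves cross near tau =", tau_x, "s")
```

The crossover tau is a starting point for the loop time constant, in the same spirit as the phase-noise overlay; any further constraints on the loop would move it.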

One of the points Rubiola raises in his book is that the reason we use ADEV is because true frequency-domain analysis was computationally difficult back in the 1960s. You can go from an FFT to an ADEV plot, at least in theory, but not vice-versa. Ideally, the problem outlined above would be solved in the traditional phase-noise crossover sense.

-

You seem to follow the "just try it and see what happens" school of design. That's fair. A lot of engineering, perhaps most, is done that way and I won't criticize it. But I am curious about the precise differences between various hobbyist oscillators; specifically their stability. That is why I am doing this project.

In order to understand those differences I want to understand how established measures of oscillator stability relate to practical questions, such as "if I use this oscillator, what are the probable bounds of its jitter? Is it likely that the frequency of this particular oscillator will vary by 10 Hz, 100 Hz, 1 kHz over a 2 hour period (given some parameters such as temperature, power line ripple, ...)?" Without understanding how Allan variance relates to this question, why should I be interested in it?

It seems to me you are getting two distinct types of advice. One is to get heavily into the theory etc and the other is to just try it. In an earlier post you say:

Quote: What I need is information on the stability of these two oscillators that will help me choose which one to use. I am not designing oscillators, I am using them. Perhaps naively, I presumed that the stability measures now in use would provide information so I can make an informed choice. Did I presume incorrectly?

So, the pragmatic approach seems a fair one. But, I can see that the phrase "I need...information on the stability of these two oscillators" can be taken one of two ways. Either "I need to find a way to differentiate between two oscillators so I can choose one" or "I'd like to understand *why* one oscillator is better and how to measure the particular parameters that explain it mathematically."

Given all this and the fact that you have two oscillators to choose from (at least that's what seems to be the case), I'd do the pragmatic approach and the more rigorous/theoretical approach. It can be a valuable exercise since you can find surrogate measurements that are much simpler to make than the ones that strict adherence to the model would suggest.

-

My "I read it on the internet" understanding of the Allan variance is it simply is treating the variance as a random variable. So one has then the mean and variance of the variance. One can take the variance and compute the FFT to look for periodicities in the variance. I *think* that would be the cyclostationarity, but I've never encountered that term before. So I'm just guessing.

I rather suspect that this is a lexical minefield where different specialties define slightly different meaning and scaling conventions to the same words.

No, you missed the mark on this one.

Which part? Perhaps you would be so kind as to explain in more detail.

Quote: ... the Allan variance is it simply is treating the variance as a random variable.

You're missing the point. Your statement doesn't even scratch the surface.

Quote: One can take the variance and compute the FFT to look for periodicities in the variance.

While (sort of) technically correct, this is not something that anyone would ever do. It would not yield useful information.

Quote: I rather suspect that this is a lexical minefield where different specialties define slightly different meaning and scaling conventions to the same words.

This is incorrect.

-

E.g., if you were looking to discipline a crystal oscillator with a rubidium standard, you might end up with a plot like this one. At taus below one second, the rubidium standard is noisier than the crystal oscillator. If you used a loop bandwidth much higher than 1 Hz, you would stabilize the crystal oscillator adequately but you would also lose its superior short-term noise performance. If you used a time constant much slower than that, though, there would be a big hump in the plot where the oscillator wanders around over intervals of a few seconds before being stabilized at longer taus. It will never be perfect, so your goal is to avoid corrupting the short-term performance while minimizing the hump. (You can see this optimization process at work in the plot of the rubidium standard by itself, in fact, since that's the exact problem its designers were faced with.)

One of the points Rubiola raises in his book is that the reason we use ADEV is because true frequency-domain analysis was computationally difficult back in the 1960s. You can go from an FFT to an ADEV plot, at least in theory, but not vice-versa. Ideally, the problem outlined above would be solved in the traditional phase-noise crossover sense.

You make many good points in this post, but you are focusing on oscillator design, not oscillator use. I wouldn't even think of designing an oscillator (other than, perhaps, a simple colpitts oscillator for some throw away project) because I am not an experienced oscillator designer and, more importantly, you can buy simple oscillator modules very cheaply (I just bought 7 10 MHz oscillator modules from Jameco for $10). My interest is using existing oscillators. So, as an example, I have both a 10 MHz Rubidium oscillator (an FEI FE-5650) and two 10 MHz ocxos (one using a Bliley module and the other an Isotemp module). I built the enclosures for them and for one designed a simple filter to turn the square wave into a sine wave (which doesn't work very well). However, the core oscillators are off-the-shelf.

I bought the core oscillators on eBay. All were rescued from obsolete equipment and are probably 20 years old. That means they have aged. I would like to know how they compare to new core modules. Do they conform to the aging parameters in their data sheets? Has their performance degraded in a way that dramatically affects their jitter characteristics? I would imagine others might like to know this as well, since hobbyists rarely buy new rubidium or ocxo modules. Most, I would imagine, got them from eBay as I did.

-

You seem to follow the "just try it and see what happens" school of design. That's fair. A lot of engineering, perhaps most, is done that way and I won't criticize it. But I am curious about the precise differences between various hobbyist oscillators; specifically their stability. That is why I am doing this project.

In order to understand those differences I want to understand how established measures of oscillator stability relate to practical questions, such as "if I use this oscillator, what are the probable bounds of its jitter? Is it likely that the frequency of this particular oscillator will vary by 10 Hz, 100 Hz, 1 kHz over a 2 hour period (given some parameters such as temperature, power line ripple, ...)?" Without understanding how Allan variance relates to this question, why should I be interested in it?

At this point, I have no idea what you're asking. Maybe you can be more specific about what you want to do and what you want to know.

-

E.g., if you were looking to discipline a crystal oscillator with a rubidium standard, you might end up with a plot like this one. At taus below one second, the rubidium standard is noisier than the crystal oscillator. If you used a loop bandwidth much higher than 1 Hz, you would stabilize the crystal oscillator adequately but you would also lose its superior short-term noise performance. If you used a time constant much slower than that, though, there would be a big hump in the plot where the oscillator wanders around over intervals of a few seconds before being stabilized at longer taus. It will never be perfect, so your goal is to avoid corrupting the short-term performance while minimizing the hump. (You can see this optimization process at work in the plot of the rubidium standard by itself, in fact, since that's the exact problem its designers were faced with.)

One of the points Rubiola raises in his book is that the reason we use ADEV is because true frequency-domain analysis was computationally difficult back in the 1960s. You can go from an FFT to an ADEV plot, at least in theory, but not vice-versa. Ideally, the problem outlined above would be solved in the traditional phase-noise crossover sense.

You make many good points in this post, but you are focusing on oscillator design, not oscillator use. I wouldn't even think of designing an oscillator (other than, perhaps, a simple colpitts oscillator for some throw away project) because I am not an experienced oscillator designer and, more importantly, you can buy simple oscillator modules very cheaply (I just bought 7 10 MHz oscillator modules from Jameco for $10). My interest is using existing oscillators. So, as an example, I have both a 10 MHz Rubidium oscillator (an FEI FE-5650) and two 10 MHz ocxos (one using a Bliley module and the other an Isotemp module). I built the enclosures for them and for one designed a simple filter to turn the square wave into a sine wave (which doesn't work very well). However, the core oscillators are off-the-shelf.

I bought the core oscillators on eBay. All were rescued from obsolete equipment and are probably 20 years old. That means they have aged. I would like to know how they compare to new core modules. Do they conform to the aging parameters in their data sheets? Has their performance degraded in a way that dramatically affects their jitter characteristics? I would imagine others might like to know this as well, since hobbyists rarely buy new rubidium or ocxo modules. Most, I would imagine, got them from eBay as I did.

Without continuous monitoring you can't say much about the aging process, but you can certainly characterize the oscillators' current performance at both short- and long-term intervals. PN and ADEV are the core metrics needed for this.

Ideally, the manufacturers of your used/surplus oscillators will have specified the performance in terms of Allan deviation, phase noise, or both. So all you need is a reference with known performance to compare them to, and the necessary instrumentation to make the measurements. Now you have the classic man-with-two-clocks problem, of course. There is a reason why my forum avatar is a rabbit seen in infrared light, as might be encountered by a well-equipped explorer in the twisty passages of a deep, dark hole.

-

At this point, I have no idea what you're asking. Maybe you can be more specific about what you want to do and what you want to know.

See my post to KE5FX here.

-

At this point, I have no idea what you're asking. Maybe you can be more specific about what you want to do and what you want to know.

See my post to KE5FX:

"I bought the core oscillators on eBay. All were rescued from obsolete equipment and are probably 20 years old. That means they have aged. I would like to know how they compare to new core modules. Do they conform to the aging parameters in their data sheets? Has their performance degraded in a way that dramatically affects their jitter characteristics? I would imagine others might like to know this as well, since hobbyists rarely buy new rubidium or ocxo modules. Most, I would imagine, got them from eBay as I did."

KE5FX gave you the answer ... measure the Allan Variance.

-

Without continuous monitoring you can't say much about the aging process, but you can certainly characterize the oscillators' current performance at both short- and long-term intervals. PN and ADEV are the core metrics needed for this.

Ideally, the manufacturers of your used/surplus oscillators will have specified the performance in terms of Allan deviation, phase noise, or both. So all you need is a reference with known performance to compare them to, and the necessary instrumentation to make the measurements. Now you have the classic man-with-two-clocks problem, of course. There is a reason why my forum avatar is a rabbit seen in infrared light, as might be encountered by a well-equipped explorer in the twisty passages of a deep, dark hole.

The FEI FE-5650 spec does give information about phase noise and the Allan variance, but if I run tests on my aged unit and get a different Allan variance value, what does that tell me from a practical standpoint? That is, how would I use that information to make decisions?

The ISOTEMP module spec gives some information about phase noise, but nothing about Allan variance.

I can't find the data sheet for the BLILEY module, although I thought I had found it once upon a time.

Correcting the record: I got the BLILEY module from eBay, but the ISOTEMP module came with the AnalysIR OCXO board that I purchased on Tindie.

-

KE5FX gave you the answer ... measure the Allan Variance.

We are going in circles. How does the Allan Variance tell me anything practical about jitter?

-

KE5FX gave you the answer ... measure the Allan Variance.

We are going in circles. How does the Allan Variance tell me anything practical about jitter?

That is what it does.

-

KE5FX gave you the answer ... measure the Allan Variance.

We are going in circles. How does the Allan Variance tell me anything practical about jitter?

That is what it does.

Let's use a concrete example. The FEI FE-5650 spec gives an Allan Variance of 1.4*10^-11/sqrt(t) when the unit is new. Using that number (if you need other information, the URL to the spec is in my post to KE5FX), tell me how to determine that the frequency of the unit will not vary by more than x% (you choose x) over a two hour period with a probability of p (you choose p).

-

Let's use a concrete example. The FEI FE-5650 spec gives an Allan Variance of 1.4*10^-11/sqrt(t) when the unit is new. Using that number (if you need other information, the URL to the spec is in my post to KE5FX), tell me how to determine that the frequency of the unit will not vary by more than x% (you choose x) over a two hour period with a probability of p (you choose p).

It can't be determined from their specifications, because they do not state the range over which 1.4*10^-11/sqrt(t) is valid.

-

An oscillator is mathematically characterized as:

v(t) = [V0 + e(t)] * cos[w0*t + phi(t)], where V0 is the base oscillator amplitude, w0 is the base oscillator frequency (in radians/sec), and both e(t) and phi(t) are stochastic processes that respectively add amplitude noise and phase noise to the oscillator's output.

For any practical oscillator, the stochastic processes e(t) and phi(t) are cyclostationary, which means their moments (e.g., mean and variance) are normally not constant (which would be true for a stationary process), but periodic. That means over time they change in value, but are periodic over some timeframe.

My problem is how to properly sample cyclostationary processes such as e(t) and phi(t).

Those are very broad statements, especially when the time frame can be from ms to years. Also, there are some assumptions that might be irrelevant, or simply wrong, depending on the situation.

You mentioned surplus oscillators, synchronize multiple instruments, square to sin conversion of 10 MHz, rubidium clock, and so on.

What are you after? What exactly are you trying to do, or to achieve?

What is your measuring setup? What exactly do you plan to measure with the given setup?