-

Thanks bugi. Forwarded to the engineers for their study.

-

Found two older notes on triggering. These might be more about "know your scope" (and its limitations) stuff. My primary guess is that the trigger's signal path has more noise than the path for shown signal, and I'm just approaching the limits.

For the tests below, I'm using a direct BNC-BNC cable from the wavegen output to the channel input, in 1M/high-Z modes or 50ohm modes as desired. The 50ohm modes give (almost) the same results, but of course the signal amplitude is halved and the vertical gain should be adjusted accordingly. (Earlier I just held a probe on the wavegen output by hand, with the same results.) Values shown below are for the 50 ohm modes.
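Here is a rough illustration of why the amplitude halves. This is a minimal sketch that treats the generator as an ideal source behind an assumed 50 ohm output resistance and the scope input as a purely resistive load. Cable effects are ignored, and `load_voltage` is just a hypothetical helper name.

```python
def load_voltage(v_open_circuit: float, r_source: float, r_load: float) -> float:
    """Voltage across the load of a simple resistive divider (source R in series with load R)."""
    return v_open_circuit * r_load / (r_source + r_load)


R_SOURCE = 50.0  # assumed generator output resistance, ohms
V_OPEN = 1.0     # hypothetical open-circuit amplitude, Vpp

high_z = load_voltage(V_OPEN, R_SOURCE, 1e6)  # scope input at 1 Mohm
fifty = load_voltage(V_OPEN, R_SOURCE, 50.0)  # scope input at 50 ohm
print(f"1 Mohm load: {high_z:.5f} Vpp, 50 ohm load: {fifty:.5f} Vpp")
```

This is presumably also why the generator rescales the displayed amplitude when its load setting changes. The same drive is shown either as the voltage across a high-Z load or as the voltage across a matched 50 ohm load.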

First:

Defaults, wavegen 50ohm 500mVpp (square 1kHz), 50ohm for channel input, auto setup -> should give 100mV/div and (rising) edge trigger with 0V offset. (Note, set the 50ohm load on the wavegen first, as it would otherwise "helpfully" readjust the amplitude value for you (but not the actual amplitude driven) to a value you did not want to set. I made that mistake twice before realizing what was going on, and once after realizing.)

Increase vertical from that 100mV/div to 1V/div -> loses trigger, even though visually it looks like there is "plenty" of clear edge to trigger from (half a div).

Adjusting the trigger level up by two steps to just +40mV (in my case) restores triggering. I'd have expected the triggering to work fine until the edge height approaches a few LSBs or so (i.e. noise); here it is still around 16 LSBs total, or ~8LSB from 0V to the square top (or from bottom to 0V). The 40mV adjustment is 1-2 LSBs. (I'm not sure exactly how it scales things, so those LSB calculations could be slightly off, but the order of magnitude should be correct.)
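For a rough LSB count, here is a minimal sketch. It assumes 200 ADC codes across 8 vertical divisions, i.e. 25 codes per division. The 200 LSB figure and the "80mV is one LSB at 2V/div" figure both appear later in this thread, and the two are consistent with each other. Under these assumptions the 500mVpp edge at 1V/div comes to about 12.5 codes, which is the same order of magnitude as the estimate above. One 40mV trigger step comes to one code.

```python
DIVISIONS = 8          # vertical divisions (assumed, consistent with 80 mV = 1 LSB at 2 V/div)
CODES_ON_SCREEN = 200  # ADC codes across those divisions (figure quoted later in the thread)


def volts_per_lsb(volts_per_div: float) -> float:
    """Voltage represented by one ADC code at a given vertical scale."""
    return volts_per_div * DIVISIONS / CODES_ON_SCREEN


def to_lsb(volts: float, volts_per_div: float) -> float:
    """Express a voltage as a number of ADC codes at a given vertical scale."""
    return volts / volts_per_lsb(volts_per_div)


lsb = volts_per_lsb(1.0)       # volts per code at 1 V/div
edge = to_lsb(0.5, 1.0)        # full 500 mVpp edge
half_edge = to_lsb(0.25, 1.0)  # 0 V to the top of the square
step = to_lsb(0.04, 1.0)       # one 40 mV trigger-level step
print(f"{lsb * 1000:.0f} mV/LSB, edge {edge:.1f} LSB, half {half_edge:.2f} LSB, step {step:.1f} LSB")
```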

If adjusting the trigger coupling to HF reject with 0V offset, it again triggers, but has a bit of jitter and the trigger point seems to be near the top of the edge. I can understand the shift if triggering is done in its own analog path (assuming e.g. an analog filter, which will naturally slow down the relatively fast edge, and thus the trigger path's signal reaches the trigger level later), but the jittering? Shouldn't HF reject reduce noise and trigger jitter? (Also, I've come to understand that the triggering is digital, "It has an innovative digital trigger system with high sensitivity and low jitter", so the filtering should not cause a shift, unless the HF reject is done in analog.. thus it would not be fully digital.) (EDIT: after thinking some more, digital filtering could also cause delay; it is just easier to compensate for in the digital domain and/or to design a filter with less effective delay.)

If the wavegen is set to 1Vpp (twice the amplitude), and the vertical correspondingly to 2V/div (and trigger level returned to 0V), the triggering is, surprise, stable, even though visually the situation should be the same as before. Lowering the trigger two steps to -80mV loses the triggering. So, almost the same, but slightly better "margin".

The puzzle for me is the difference in the ability to trigger vs. the ability to show a clear signal, and on the other hand the difference in triggering stability between those two amplitude & gain levels. In a fully digital domain there should be no problem triggering at least one, if not two, more steps of vertical gain (i.e. 2V/div and 5V/div). Only then does the edge get truly small on screen (but it is still there!).

The other case:

*snip* *snip*

... Well, this case got cleared up after half an hour of digging into it this time. I was wondering why the wavegen's cardiac waveform was losing triggering on the mid-height peak before the lowest peak when lowering the trigger level, even though the mid-height peak seems to have a "better" start (from a slightly lower voltage and with a steeper slope). Zooming in on that mid-height peak reveals a tiny sudden step on the "flat" area, just before the peak starts to rise. So, just a slightly poor quality waveform. Maybe the waveform edges are wrapping around there and the developers forgot to adjust the waveform for proper continuity.
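If the suspicion about the loop point is right, it is easy to check for in a waveform table. The idea is to compare the jump from the last sample back to the first with the steps elsewhere on the flat baseline. This is a minimal sketch, assuming the waveform is available as a plain list of sample values. The helper names and the pulse shape are hypothetical and made up for illustration; they are not the actual cardiac table.

```python
def wrap_step(table: list[float]) -> float:
    """Jump seen at the loop point when an arbitrary-waveform table is replayed
    periodically, i.e. going from the last sample back to the first one."""
    return table[0] - table[-1]


def largest_baseline_step(table: list[float], start: int, stop: int) -> float:
    """Largest sample-to-sample change inside table[start:stop] (e.g. a flat region)."""
    region = table[start:stop]
    return max(abs(b - a) for a, b in zip(region, region[1:]))


pulse = [0.3, 1.0, 0.3]  # hypothetical stand-in for one peak

# Continuous table: flat baseline, one peak, flat baseline ending at the start value.
good = [0.0] * 30 + pulse + [0.0] * 30

# Same shape, but the baseline drifts slowly and ends 0.05 higher than it starts.
bad = [0.05 * i / 62 for i in range(63)]
for k, p in enumerate(pulse):
    bad[30 + k] += p

print("good wrap step:", wrap_step(good))
print("bad wrap step:", wrap_step(bad))
print("bad baseline step:", largest_baseline_step(bad, 33, 63))
```

A wrap step that is much larger than the neighbouring baseline steps would show up on the scope as a small sudden step on an otherwise flat stretch.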

-

"...unless the HF reject is done in analog.. thus it would not be fully digital.)"

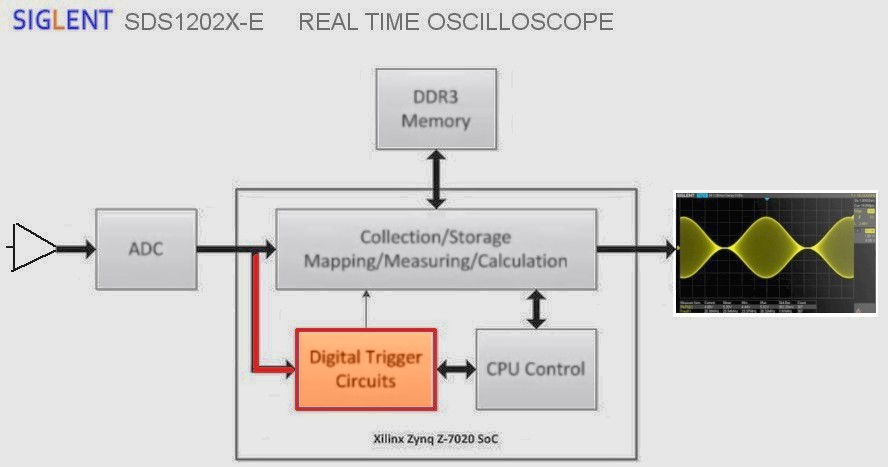

The image is for the SDS1kX-E, but the SDS2kX principle is the same. The trigger engine is after the ADC .... and so on.

It is a fully digital trigger on the main channels. The Ext trig channel uses the conventional principle of feeding the analog signal to a trigger comparator, and its performance is far behind the digital trigger for many reasons.

I cannot fully follow and understand your explanation, but somehow I feel that at least trigger hysteresis is perhaps one thing that must be taken into account. Of course a digital trigger system also needs it. If there were no trigger hysteresis - well, I think the oscilloscope would quickly be thrown out of the window.

I think HF reject also affects the amount of trigger hysteresis. But I won't say more about hysteresis now, because it is not clear to me whether it is at least partially behind what you have observed.

And then, (SDS2kX) specifications:

Accuracy: CH1 ~ CH4: ±0.2div

Sensitivity: CH1~ CH4: 0.6div

Trigger Coupling: (of course these frequency limits are not brick-wall filters)

DC: Passes all components of the signal

AC: Blocks DC components and attenuates signals below 8Hz

LFRJ: Attenuates the frequency components below 900kHz

HFRJ: Attenuates the frequency components above 500kHz

Trigger hysteresis is also covered briefly here (yes, a different model, but the principle is the same):

https://www.eevblog.com/forum/testgear/siglent-technical-support-join-in-eevblog/msg1254186/#msg1254186

-

The trigger hysteresis could very well explain the case without HF reject; there is only 0.25 div of rising edge to the 0V trigger level.
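Here is a minimal sketch of how a fixed hysteresis produces exactly this behaviour. It assumes a simple arm/fire scheme: the signal must first drop below the level minus the hysteresis before a rising crossing of the level counts. This is a common way to build a digital edge trigger, but not necessarily what Siglent does. The hysteresis of 0.27 div is invented. It was chosen only so that this toy model reproduces the observations from the first post: no trigger at 0V, and a trigger at +40mV (0.04 div at 1V/div).

```python
def rising_edge_triggers(samples: list[float], level: float, hysteresis: float) -> int:
    """Count rising-edge triggers in a toy hysteresis model (hypothetical, not Siglent's firmware).

    The trigger arms only after the signal has been below (level - hysteresis)
    and fires when an armed signal reaches the level; then it must re-arm.
    """
    armed = False
    count = 0
    for x in samples:
        if armed and x >= level:
            count += 1
            armed = False
        if x < level - hysteresis:
            armed = True
    return count


# 500 mVpp square wave at 1 V/div, in divisions: -0.25 div .. +0.25 div, ten periods.
square = ([-0.25] * 50 + [0.25] * 50) * 10

HYSTERESIS_DIV = 0.27  # made-up value, NOT from the datasheet

at_0mv = rising_edge_triggers(square, level=0.0, hysteresis=HYSTERESIS_DIV)
at_40mv = rising_edge_triggers(square, level=0.04, hysteresis=HYSTERESIS_DIV)
print(at_0mv, at_40mv)  # 0 10: never arms at 0 V, one trigger per period at +40 mV
```

Raising the level moves the arming threshold up with it. That is why a slightly higher level helps on a rising edge.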

--

Which made me think about the slope trigger, as it has its own two levels and a rule to go through both in the correct order, so maybe it would not have any extra hysteresis... More playing with that 1kHz square wave...

... Alas, it seems that hysteresis also affects the slope trigger's lower trigger level, but there is no hysteresis for the higher level.

For rising edge, the signal needs to go a bit below the lower trigger level (as with normal edge trigger with its internal hysteresis), but higher trigger level can be anywhere between lower trigger and the signal edge's top.

But for falling edge, the higher trigger level needs to be a bit (about that hysteresis amount) above the lower trigger level, but otherwise the trigger levels can be as close to the top or bottom of the signal edge as wanted. (Well, hysteresis in this direction seems to be between the two trigger levels, so the hysteresis could be considered to be for either one of them, or both, I just assume it is for the lower one.)

That is, on rising edge, triggering works even when the trigger levels are very close to each other, but for falling edge they need to be more apart (the hysteresis amount?). I think that non-symmetric behavior is not correct. I also wonder if the slope trigger really needs any built-in extra hysteresis at all, since the trigger itself already has the two levels (sort of user adjustable hysteresis, it is up to the user to pick good levels).
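For comparison, here is a minimal sketch of what a symmetric slope trigger could look like. The hysteresis is applied to the threshold the edge starts from: the lower level for rising edges, the upper level for falling edges. This is a hypothetical model using the same made-up 0.27 div hysteresis. It does not describe the actual firmware, and it ignores the slope time limits.

```python
def slope_triggers(samples: list[float], lower: float, upper: float,
                   hysteresis: float, rising: bool = True) -> int:
    """Count slope triggers in a symmetric toy model (hypothetical; timing limits ignored).

    Rising: arm once the signal is below lower - hysteresis, fire when it then reaches upper.
    Falling: the mirror image, arm above upper + hysteresis, fire when it reaches lower.
    """
    if not rising:
        # A falling slope on x is a rising slope on -x with the thresholds swapped and negated.
        return slope_triggers([-x for x in samples], -upper, -lower, hysteresis, rising=True)
    armed = False
    count = 0
    for x in samples:
        if armed and x >= upper:
            count += 1
            armed = False
        if x < lower - hysteresis:
            armed = True
    return count


square = ([-0.25] * 50 + [0.25] * 50) * 10  # 500 mVpp at 1 V/div, in divisions
HYST = 0.27                                  # made-up hysteresis, in divisions

rise_near_top = slope_triggers(square, 0.1, 0.2, HYST, rising=True)
fall_near_bottom = slope_triggers(square, -0.2, -0.1, HYST, rising=False)
fall_near_top = slope_triggers(square, 0.1, 0.2, HYST, rising=False)
print(rise_near_top, fall_near_bottom, fall_near_top)
```

In this symmetric model, the falling-edge case with both levels near the top never arms, because the signal never rises 0.27 div above the upper level. That matches the behaviour one would expect from the rising-edge case, whereas the scope, as observed, still triggers there.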

--

I played some more with the HF reject coupling, zooming and adjusting the trigger level up and down. The edge's slope seems to spread over 600-700ns (for trigger timing, not visually), judging from how much the shown edge moves around as the trigger level changes. This seems to be explained by the 500kHz filtering. There was still a tiny bit of slow drifting and that jittering between two timings, but I will think about this more myself, armed with these new bits of information.
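The 600-700ns figure is roughly what a simple first-order low-pass with a 500kHz corner would give. This is a minimal sketch, assuming a one-pole IIR as a stand-in for the HFRJ filter (the real DSP filter structure isn't documented in this thread). The simulation rate of 2GSa/s is an arbitrary choice.

```python
import math

FC = 500e3  # HF reject corner from the datasheet, Hz
FS = 2e9    # assumed simulation sample rate, Sa/s (illustrative only)


def first_order_step(n_samples: int, fc: float, fs: float) -> list[float]:
    """Response of a discrete one-pole low-pass (hypothetical HFRJ stand-in) to a -1 -> +1 step."""
    a = 1.0 - math.exp(-2.0 * math.pi * fc / fs)  # smoothing coefficient of the 1-pole IIR
    y = -1.0
    out = []
    for _ in range(n_samples):
        y += a * (1.0 - y)  # the input is +1 for every sample after the step
        out.append(y)
    return out


def crossing_time_ns(response: list[float], level: float, fs: float) -> float:
    """Time (ns) at which the filtered edge first reaches `level`."""
    for i, y in enumerate(response):
        if y >= level:
            return (i + 1) / fs * 1e9
    raise ValueError("level never reached")


resp = first_order_step(20_000, FC, FS)  # 10 us of data
t10 = crossing_time_ns(resp, -0.8, FS)   # 10 % point of the -1..+1 edge
t90 = crossing_time_ns(resp, 0.8, FS)    # 90 % point
print(f"10-90% spread: {t90 - t10:.0f} ns")
```

Analytically, this is the familiar 10-90% rise time of a single pole: 2.2 tau, or about 0.35/fc, which is about 700ns. With the filtered edge this slow, every change of the trigger level moves the trigger point in time.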

-

My scope updated just fine to the latest version, including the self-calibration afterwards. The calibration result was the best so far; earlier the offsets and gains were clearly "about there", now "almost or exactly there". Could be just a lucky case this time. (I didn't assume that scopes would be precision instruments anyway, except for timing.)

It’s hard to tell whether the self-cal has actually been improved with the new 1.2.2.2R15 firmware – but it’s a fact that it has been changed many times before.

And yes, as with all wideband/HF gear, we just cannot expect an accuracy comparable to a proper bench DMM at DC and low frequency AC.

Is the scope supposed to remember all previous settings after reboot?

Yes, the scope is supposed to restore its previous state after a reboot.

…

Bugs? Undocumented features?

Yes, there have been several complaints in the past that certain parameters have not been properly restored, and most of these problems have been fixed.

I’m currently not aware of any such issues but would not be surprised if there were still some left.

If you find something, please document it, describe how to reproduce it and post it here. We can then verify the issue and make Siglent aware of it.

Would be nice (at least for me) if a menu selection that uses the adjustment knob kept that knob active the same way as menu (numeric) value adjustments do. That is, value adjustments seem to not "time out" the knob (one can wait a minute and still adjust the value), but selections time out quite quickly. At least I pretty much never use the default action of intensity (or whatever) adjustment, but I am constantly making a selection change, looking at the view for a moment, and trying to select another choice... only to end up tweaking the intensity instead.

Agreed, but…

The SDS1004X-E doesn’t have the intensity adjustment as a default action for the universal knob, yet people are complaining as well.

A long time ago, I suggested making this an option in the Display or Utility menu (default action for the universal control), and it could offer “intensity” and “none” as well as maybe a few other things. Obviously, Siglent either didn’t like that idea or didn’t have the time to implement it yet.

However, this would then benefit from some way to deselect the current menu item (return the knob to intensity adjust) without causing changes in the selection. The current way of clicking the menu button first to select/activate it, then to step through the values, makes it impossible to have another push for deselect. (I have more to say about this, but I need to check / massage the ideas a bit more first...)

There are several possibilities for deselecting a menu; the most obvious one is to just push the universal knob to confirm the current selection. For instance, when currently using edge trigger, open the trigger type menu and “Edge” will be the selected item as expected. Push the universal control and the menu will close without change.

However, most of us don’t like to push the universal knob, because we can accidentally change the selection at the same time, i.e. we need to be careful to only push the knob without rotating it. I for one do hate the push-action on the universal knob as well.

The strategy to deselect a menu without touching the universal control is to hit some other soft menu button that doesn’t have a menu with several selectable items behind it. Ideally an empty button without associated soft menu entry at all, like buttons 5 and 6 in the Cursors menu. Otherwise you can use a menu toggle item, like “Noise Reject” in the trigger menu. Hit that soft button twice – the first time you de-select the menu selection, the second time you revert the noise reject back to its original setting. Two keystrokes, yes, yet far more convenient than having to push the universal control.

Finally, you can also push some other button on the scope, like the current trigger mode (auto or normal for instance). This will exit the menu selection without changing anything and is just a single keystroke, yet a little farther away than just another soft menu button.

10:1 probe, normal modes (default stuff), trigger at normal (and 0V), probe shorted, 10mV/div, showing about 10mV of noise (as expected). Adjusting the position of the channel moves the offset pointer/trigger line smoothly (like 1 pixel at a time, 0.20mV per step), but the shown signal does NOT move up/down at all (it is still updating the data otherwise as expected), until about the 11th or 12th step, when it jumps to the new height on the display. However, if the trigger is set to single, showing the one span of noise, that signal view does move smoothly with the position adjustment. I would have expected the normal mode view to move smoothly with the position adjustment, too. Sure, it is mostly noise, but e.g. the average level of the "signal" could be of interest, and with this effect it is not always shown correctly. Explanations? Bug?

Sorry, I absolutely cannot confirm any problem with that. Some explanations:

10mV/div with a x10 probe is equivalent to a genuine (x1) input sensitivity of 1mV/div. Unlike the SDS1000X-E, which has true full resolution/full bandwidth 500µV/div sensitivity, the SDS2000(X) only has true 2mV/div sensitivity, and 1mV/div is just a software zoom, hence kind of a fake. This is not great and Siglent doesn’t do that anymore for newer designs like the SDS1000X-E and SDS5000X, but then again, this BS is not uncommon and other vendors can be even worse, like the Keysight MSO-X3000 (4mV) or Rigol 1000Z (5mV). Everything more sensitive on these scopes is just fake.

The trace area on your screen is 400 pixels high and shows 200LSB of the ADC. That means that the trace has a (vertical) width of 2 pixels (without noise) and the smallest voltage step is already two pixels on the screen. With the zoom at the highest sensitivity, this step is now 4 pixels. Yet the graphical resolution is high enough (or the screen small enough) so that we see a smooth movement but…


The trace is fat and bright initially, because of the noise at that high sensitivity and the high waveform update rate, which puts lots of acquisitions into one video frame on the display.

SDS2304X_Vpos_01

Now if we move the vertical position, the screen update from the sample buffer gets interrupted and just the last captured waveform remains, which then can be moved smoothly across the screen indeed, but as a single triggered waveform it is just a slim single line and much dimmer:

SDS2304X_Vpos_02

Yet position change is continuous and smooth, just when you stop turning the position knob, screen update from the sample buffer is instantly continued and the trace gets fat and bright again. This might leave the false impression of an erratic position update, especially when the display intensity is turned down, but in fact everything is fine on this front.
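Here is the pixel arithmetic behind this explanation, as a minimal sketch. The 400-pixel trace area and the 200 codes come from the explanation above. The 8 vertical divisions and the one-pixel position step are assumptions; the latter fits the 0.20mV per step reported at 10mV/div.

```python
SCREEN_PX = 400        # trace area height in pixels (from the explanation above)
CODES_ON_SCREEN = 200  # ADC codes spanning that height at a true hardware gain
DIVISIONS = 8          # assumed number of vertical divisions

PX_PER_DIV = SCREEN_PX / DIVISIONS  # 50 px per division


def px_per_code(zoom: float = 1.0) -> float:
    """Pixels per ADC code; zoom=2 models 1 mV/div as a software zoom of 2 mV/div."""
    return SCREEN_PX / CODES_ON_SCREEN * zoom


def position_step(volts_per_div: float) -> float:
    """Offset change per position click, assuming the offset moves one pixel per click."""
    return volts_per_div / PX_PER_DIV


print(px_per_code())          # 2.0 px per code at a true gain setting
print(px_per_code(zoom=2.0))  # 4.0 px per code at the zoomed 1 mV/div setting
print(position_step(0.010))   # about 0.2 mV per click at 10 mV/div (probe-referred)
```

This reproduces the 2 and 4 pixel figures and the 0.20mV position step from the question.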

-

Found two older notes on triggering. These might be more about "know your scope" (and its limitations) stuff. My primary guess is that the trigger's signal path has more noise than the path for shown signal, and I'm just approaching the limits.

First, let me assure you that triggering on any Siglent X-series scopes is superb (which doesn’t rule out that it might still have some hidden bug left somewhere). I consider good triggering one of the most important features of a scope and would report any bug in this area as a high priority issue.

Since this DSO has a modern fully digital trigger system (except for the external trigger on the back), the trigger path is part of the regular signal path, hence cannot be any different.

For the tests below, I'm using a direct BNC-BNC cable from the wavegen output to the channel input, in 1M/high-Z modes or 50ohm modes as desired. The 50ohm modes give (almost) the same results, but of course the signal amplitude is halved and the vertical gain should be adjusted accordingly. (Earlier I just held a probe on the wavegen output by hand, with the same results.) Values shown below are for the 50 ohm modes.

As a general rule of thumb, do not use high-Z with direct coax connections. It might work okay in some special situations, especially at low frequencies, but for accurate results and a flat frequency response, maintaining a continuous 50 ohm impedance (generator output, cable, scope input) is mandatory.

First:

Look what is there in the datasheet: Sensitivity CH1 ~ CH4 ±0.6div

Defaults, wavegen 50ohm 500mVpp (square 1kHz), 50ohm for channel input, auto setup -> should give 100mV/div and (rising) edge trigger with 0V offset. (Note, set the 50ohm load on the wavegen first, as it would otherwise "helpfully" readjust the amplitude value for you (but not the actual amplitude driven) to a value you did not want to set. I made that mistake twice before realizing what was going on, and once after realizing.)

Increase vertical from that 100mV/div to 1V/div -> loses trigger, even though visually it looks like there is "plenty" of clear edge to trigger from (half a div).

And again, the trigger sensitivity is superb and you’ll be hard pressed to find any other scope that would be even better in this regard – especially not among the noisy ones.

Adjusting the trigger level up by two steps to just +40mV (in my case) restores triggering. I'd have expected the triggering to work fine until the edge height approaches a few LSBs or so (i.e. noise); here it is still around 16 LSBs total, or ~8LSB from 0V to the square top (or from bottom to 0V). The 40mV adjustment is 1-2 LSBs. (I'm not sure exactly how it scales things, so those LSB calculations could be slightly off, but the order of magnitude should be correct.)

Any trigger (even the old style analog Schmitt trigger) has to have some hysteresis for stable operation on slow/noisy edges. The digital trigger in the SDS2000X is no exception here.

So no, just a few LSBs of the ADC is not enough; the datasheet specifies 0.6 divisions, which would be about 12LSB. And of course a 500mVpp signal spans just 0.5 divisions at 1V/div vertical gain, hence is outside of the datasheet spec.

The fact that you still can trigger just fine by adjusting the trigger level proves that the 0.6 div from the data sheet is a worst case specification that also applies to the zoomed 1mV/div gain setting. With the true vertical sensitivities, you can go lower than that.
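A few lines are enough to show why hysteresis is needed on slow or noisy edges. This is a minimal sketch working on integer ADC codes, with a synthetic slow ramp plus alternating +/-5 codes of "noise". The arm/fire trigger logic and the helper name `rising_triggers` are a hypothetical model, not the actual SDS2000X implementation.

```python
def rising_triggers(samples: list[int], level: int, hysteresis: int) -> int:
    """Toy digital edge trigger on integer ADC codes (hypothetical model).

    Arms when a sample is below level - hysteresis and fires when an armed
    sample reaches level, then waits to be re-armed. hysteresis=0 behaves
    like a bare comparator.
    """
    armed = False
    fired = 0
    for x in samples:
        if armed and x >= level:
            fired += 1
            armed = False
        if x < level - hysteresis:
            armed = True
    return fired


# One slow rising edge: +1 code per sample, with +/-5 codes of alternating "noise".
ramp = [n - 100 + (5 if n % 2 == 0 else -5) for n in range(201)]

print(rising_triggers(ramp, level=0, hysteresis=0))   # 5: false triggers on a single edge
print(rising_triggers(ramp, level=0, hysteresis=10))  # 1: one trigger per edge
```

Without hysteresis, the bare comparator fires five times on what is a single edge. With 10 codes of hysteresis it fires once. A larger hysteresis trades trigger sensitivity for more noise immunity in the same way.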

Because of the hysteresis, you need to adjust the trigger level a little higher when triggering on positive (rising) edges, and a little lower when triggering on negative (falling) edges. That simple.

If adjusting the trigger coupling to HF reject with 0V offset, it again triggers, but has a bit of jitter and the trigger point seems to be near the top of the edge.

Sorry, no, I cannot reproduce this. Quite to the contrary, I’m a bit baffled to learn that the hysteresis is quite obviously smaller in HF reject mode, because now, as you say, the scope can trigger even with a trigger level of 0V. And it does this just fine:

SDS2304X_Trigger_HFRJ

I can understand the shift if triggering is done in its own analog path (assuming e.g. an analog filter, which will naturally slow down the relatively fast edge, and thus the trigger path's signal reaches the trigger level later), but the jittering? Shouldn't HF reject reduce noise and trigger jitter? (Also, I've come to understand that the triggering is digital, "It has an innovative digital trigger system with high sensitivity and low jitter", so the filtering should not cause a shift, unless the HF reject is done in analog.. thus it would not be fully digital.)

Yes, the trigger system is fully digital, and the HF-reject filter is a DSP filter. But digital filters also cause phase shifts, although not a noticeable one if we look at a 1kHz squarewave that is triggered through a 500kHz lowpass filter. But if you approach and exceed the 500kHz cutoff frequency (where the trigger sensitivity is then already considerably lower), you can certainly see the phase shift and also get some jitter:

SDS2304X_Trigger_HFRJ_600kHz

This is just a property of the simple DSP filter (limited number of filter coefficients and data points) and doesn’t matter at all, since we’re already definitely in the territory that we want to suppress. You would certainly not choose an HF-reject trigger when you want to capture a high frequency signal at or even above 500kHz.
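Here are some rough numbers on the phase shift. This is a minimal sketch using a first-order low-pass with the 500kHz HFRJ corner from the datasheet. The first-order shape is an assumption, and the actual DSP filter is likely different. Treat the numbers as orders of magnitude only.

```python
import math

FC = 500e3  # HFRJ corner from the datasheet, Hz


def first_order_response(f: float, fc: float = FC) -> tuple[float, float, float]:
    """Gain (dB), phase (deg) and phase delay (ns) of a one-pole low-pass at frequency f.

    The one-pole model is an assumption, not the documented HFRJ filter.
    """
    ratio = f / fc
    gain_db = -10.0 * math.log10(1.0 + ratio ** 2)
    phase_rad = -math.atan(ratio)
    delay_ns = -phase_rad / (2.0 * math.pi * f) * 1e9
    return gain_db, math.degrees(phase_rad), delay_ns


for f in (1e3, 100e3, 500e3, 600e3):
    g, p, d = first_order_response(f)
    print(f"{f / 1e3:7.0f} kHz: {g:6.2f} dB, {p:7.2f} deg, delay {d:5.0f} ns "
          f"({d * 1e-9 * f * 100:.3f} % of a period)")
```

At 1kHz the delay is a few hundred ns, a tiny fraction of the 1ms period, so it is not noticeable. Near and above the corner, the delay becomes a sizeable part of the period, together with several dB of attenuation.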

For a squarewave, I would not even exceed 100kHz, since the edge (which you want to trigger on) depends on its higher order harmonics and you want at least the 5th harmonic to pass through to the (numeric) trigger comparator without being attenuated by the filter.

If the wavegen is set to 1Vpp (twice the amplitude), and the vertical correspondingly to 2V/div (and trigger level returned to 0V), the triggering is, surprise, stable, even though visually the situation should be the same as before. Lowering the trigger two steps to -80mV loses the triggering. So, almost the same, but slightly better "margin".

Yes, slight differences between various vertical gain settings are to be expected. 120mV difference at 2V/div is not much and certainly irrelevant for practical work.

The puzzle for me is the difference in the ability to trigger vs. the ability to show a clear signal, and on the other hand the difference in triggering stability between those two amplitude & gain levels. In a fully digital domain there should be no problem triggering at least one, if not two, more steps of vertical gain (i.e. 2V/div and 5V/div). Only then does the edge get truly small on screen (but it is still there!).

Other than that, the explanations above still apply. As per datasheet, stable triggering is guaranteed for 0.6div “behind” the trigger level. This means we need an amplitude of 0.6 divisions below the trigger level in case of a rising edge trigger and likewise 0.6 div amplitude above the trigger level in case of falling edge trigger. The fact that you can get away with lower amplitudes in most practical scenarios is just a bonus and certainly no reason to complain.
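The datasheet condition can be written down as a simple check. This is a minimal sketch, assuming the 0.6 div has to be available on the side of the trigger level that the edge starts from, as described above. It encodes only the guaranteed spec, not the scope's real margin, and `within_trigger_spec` is a hypothetical helper.

```python
SENSITIVITY_DIV = 0.6  # datasheet trigger sensitivity for CH1 ~ CH4, in divisions


def within_trigger_spec(v_low: float, v_high: float, level: float,
                        volts_per_div: float, rising: bool = True) -> bool:
    """True if the signal reaches at least 0.6 div 'behind' the trigger level.

    Rising edge: the signal must go 0.6 div below the level.
    Falling edge: the signal must go 0.6 div above the level.
    """
    behind_volts = (level - v_low) if rising else (v_high - level)
    return behind_volts / volts_per_div >= SENSITIVITY_DIV


print(within_trigger_spec(-0.25, 0.25, 0.0, 0.1))  # 500 mVpp at 100 mV/div: 2.5 div behind
print(within_trigger_spec(-0.25, 0.25, 0.0, 1.0))  # 500 mVpp at 1 V/div: 0.25 div behind
print(within_trigger_spec(-0.5, 0.5, 0.0, 2.0))    # 1 Vpp at 2 V/div: 0.25 div behind
```

Both problem cases from the original post leave only 0.25 div behind a 0V level, so both are outside the guaranteed range.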

The puzzle is solved by thinking about the scope being rather dumb. It does not know that there is a clean waveform and an even lower hysteresis would work just fine. It just applies a standard hysteresis value that has been tested to work in 90% of practical situations with a low noise scope like this.

If for some reason the noise of the signal itself is quite high, you just enable “Noise Reject” in the trigger menu. Guess what that does?

Right, it just increases the hysteresis to about three times the standard value.

-

Slope Trigger.

There are now two trigger thresholds, and hysteresis applies to both of them.

That means the lower threshold needs to be at least the amount of hysteresis above the bottom of the waveform.

A casual setup would look like this:

SDS2304X_Trigger_Slope_wide

The signal bottom is at -250mV and the top is at +250mV. The trigger thresholds are at -100mV and +100mV. Quite obviously, a 150mV distance from the bottom (just 0.3 divisions here) is enough to satisfy the hysteresis.

But we can put the two thresholds much closer together:

SDS2304X_Trigger_Slope_narrow

Now the difference between upper and lower threshold is just 40mV (=0.08div) and it still works fine, because there is sufficient signal amplitude below the lower threshold to satisfy its hysteresis requirements.

We can even trigger that 500mVpp signal at a vertical gain of 1V/div if we adjust both thresholds near the top of the waveform (in this case of slope triggering on a rising edge):

SDS2304X_Trigger_Slope_Min

With trigger levels at +100mV and +200mV we satisfy the hysteresis for both thresholds at the same time and the scope is triggering as expected. The lowest distance from the bottom is 350mV for the lower threshold, which equals 0.35 divisions.

-

On the slope triggering.

On rising edge, I guess the higher trigger level could also have its hysteresis (like the lower has, i.e. signal has to go enough below the trigger level), but since the higher level needs to be above the lower level anyway (even enforced in the UI), it becomes sort of a moot point for that. I.e. upper level can be anywhere between lower trigger and top of edge, no extra margins needed. (Well, there is some tiny amount needed, but so small that it is not about hysteresis).

However, why does it not work in the corresponding way for the falling edge? The lower trigger can be as close to the edge's bottom as wanted, and upper trigger as close to the edge's top and still trigger, as long as the separation between triggers is more than about that hysteresis. I'd have expected that the upper trigger would need to be below the signal top by that hysteresis amount, that is, corresponding to the requirement of lower trigger having to be above signal bottom for rising edge.

Also, why do they need any hysteresis at all? The slope trigger in itself, by its definition, has hysteresis. Considering that the falling edge doesn't have hysteresis related to the signal (only between the triggers), which is already close to it. Hmm.. maybe I should test with more noisy signal.

I think I need to do some more tests on this and take screenshots for a better explanation, but no time for that today.

"120mV difference at 2V/div is not much and certainly irrelevant for practical work."

Ooh, the reason why I originally got into these (non-)issues is because it was very much relevant for practical work. Quite a complex and long waveform; I needed some way to get it stable, and the seemingly easiest way was to edge trigger on the peak of the highest ringing wave, which was only a little bit above the 2nd highest wave. Not much of a margin to play with. I don't remember the specifics (amplitudes etc.) any more, and the measured device (PC PSU) is now in bits and pieces.

I just tried to reduce the case to something simpler (and repeatable), like that square wave.

"The puzzle is solved by thinking about the scope being rather dumb. It does not know that there is a clean waveform and an even lower hysteresis would work just fine. It just applies a standard hysteresis value that has been tested to work in 90% of practical situations with a low noise scope like this."

Yeah, I came to this conclusion yesterday, too, after reading the stuff behind the link given by rf-loop. I.e. it became a case of "know the scope and its limitations".

-

On the slope triggering.

Fully agree.

On rising edge, I guess the higher trigger level could also have its hysteresis (like the lower has, i.e. signal has to go enough below the trigger level), but since the higher level needs to be above the lower level anyway (even enforced in the UI), it becomes sort of a moot point for that. I.e. upper level can be anywhere between lower trigger and top of edge, no extra margins needed. (Well, there is some tiny amount needed, but so small that it is not about hysteresis).

However, why does it not work in the corresponding way for the falling edge? The lower trigger can be as close to the edge's bottom as wanted, and upper trigger as close to the edge's top and still trigger, as long as the separation between triggers is more than about that hysteresis. I'd have expected that the upper trigger would need to be below the signal top by that hysteresis amount, that is, corresponding to the requirement of lower trigger having to be above signal bottom for rising edge.

You’re absolutely right. Seems like you’ve found one of the remaining hidden bugs!

Also, why do they need any hysteresis at all? The slope trigger in itself, by its definition, has hysteresis. Considering that the falling edge doesn't have hysteresis related to the signal (only between the triggers), which is already close to it. Hmm.. maybe I should test with more noisy signal.

Don’t forget that we need two reliable thresholds to calculate the slope. If you dispense with the hysteresis, the thresholds could be unstable and ambiguous due to slow edges and/or noise.

So I cannot agree with this point. But it seems like the Siglent engineers initially implemented the thresholds without all the necessary bells and whistles like alternating hysteresis. Then at some point there was an error report about an unstable falling edge trigger (the majority of tests, as well as real work, normally makes do with just the edge trigger), so they implemented the required add-ons for the one and only threshold used by the edge trigger and forgot to do the same for the 2nd threshold.

Just a wild guess…

Well, 80mV is only one single LSB at 2V/div. If your test scenario is so tricky that you have to rely on single-LSB accuracy/stability, you just need to be lucky to get the measurement working anyway. Do you believe there is any DSO, no matter how expensive, that will always and consistently provide the expected results when you have to rely on just one LSB (or even two)?

Quote

120mV difference at 2V/div is not much and certainly irrelevant for practical work.

Ooh, the reason I originally got into these (non-)issues is that it was very much relevant for practical work. It was quite a complex and long waveform, I needed some way to get it stable, and the seemingly easiest way was to edge trigger on the peak of the highest ringing wave, which was only a little above the 2nd highest wave. Not much of a margin to play with. I don't remember the specifics (amplitudes etc.) any more, and the measured device (a PC PSU) is now in bits and pieces.

Thing is, the 1V vs. 2V example is particularly nasty. When you turn the vertical gain control to switch between the two settings, you’ll hear a relay click. This means we have two different attenuator ranges. We are not talking about precision dividers like in a bench DMM, but the kind made for an oscilloscope, with its traditional 2-3% DC accuracy, which in return has to operate up to several hundred MHz. AC accuracy is specified as +/-1dB up to 10% of the bandwidth for the SDS2000X series, and this is fairly typical. If you choose fine adjust for the vertical gain, you will notice that the attenuator changes at the step from 1.48V/div to 1.50V/div. At this point, you might see a larger step than usual, but also a smaller one. You might see virtually no change at all, or even a slightly higher amplitude at 1.50V/div than at 1.48V/div, simply because the 2nd attenuator might have a gain error of more than about 1.33% relative to the first attenuator at the frequency of interest (1.33% being how much the step from 1.48V/div to 1.50V/div shrinks the trace).
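The 1.33% figure is just the ratio of the two gain settings. A small Python sketch makes it explicit; the 6Vpp test signal and the +1.5% attenuator error are hypothetical values chosen only to show how an attenuator mismatch can make the trace grow instead of shrink at the switch-over point.

```python
def trace_height_divs(signal_vpp, volts_per_div, gain_error=0.0):
    """Displayed peak-to-peak height in divisions.

    gain_error is the relative error of the active attenuator path,
    e.g. 0.015 means the path reads 1.5 % high.
    """
    return signal_vpp * (1.0 + gain_error) / volts_per_div


vpp = 6.0                                   # arbitrary test signal
h_148 = trace_height_divs(vpp, 1.48)        # last setting on the first attenuator
h_150 = trace_height_divs(vpp, 1.50)        # first setting on the second attenuator
print(f"ideal change: {h_150 / h_148 - 1:+.2%}")          # -1.33%

# If the second attenuator happened to read 1.5 % high relative to the first,
# the trace would grow slightly instead of shrinking when stepping to 1.50 V/div.
h_150_err = trace_height_divs(vpp, 1.50, gain_error=0.015)
print(f"with +1.5 % error: {h_150_err / h_148 - 1:+.2%}")  # +0.15%
```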

Of course, a slight gain error due to attenuator tolerances will not throw off the trigger, but the frequency response changes as well, and this could indeed make a difference, because it affects the shape of fast edges. I have now tested the SDS2304X and can safely state that the frequency response is fairly consistent without an attenuator, i.e. for all gain settings up to 148mV/div. Above that (with the first attenuator active), I get a frequency response that is flatter, i.e. about -1dB at 270MHz, but at the same time up to +2dB at 400MHz. This is well within tolerances and usually no concern at all. Yet I do not know how the 2nd attenuator behaves in this regard, because not even my high-performance synthesizer with the high power output option could deliver a signal of 4.25Vrms (=12Vpp) into 50 ohms, which would be required to properly test the 2V/div range across the scope bandwidth.
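For reference, the dB figures and the required drive level translate as follows. This is plain arithmetic in Python, assuming a sine wave (which is what the 4.25Vrms = 12Vpp equivalence implies) and a 50 ohm load.

```python
import math

def db_to_voltage_ratio(db):
    """Convert a gain in dB to a voltage (amplitude) ratio."""
    return 10 ** (db / 20)

# Frequency-response deviations mentioned above, as amplitude ratios
print(f"-1 dB -> x{db_to_voltage_ratio(-1):.3f}")   # x0.891
print(f"+2 dB -> x{db_to_voltage_ratio(+2):.3f}")   # x1.259

# Drive level needed to test 2 V/div across the full screen into 50 ohms
vrms = 4.25
vpp = 2 * math.sqrt(2) * vrms                 # sine wave: Vpp = 2*sqrt(2)*Vrms
power_w = vrms ** 2 / 50                      # P = V^2 / R
power_dbm = 10 * math.log10(power_w / 1e-3)   # dBm referenced to 1 mW
print(f"{vpp:.2f} Vpp, {power_w:.3f} W, {power_dbm:.1f} dBm")  # 12.02 Vpp, 0.361 W, 25.6 dBm
```

So the synthesizer would have to deliver roughly +25.6dBm, which is well beyond what typical signal generators provide.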

Anyway, I don’t expect major differences in the frequency response across all vertical gain settings like on some cheap bottom of the barrel scopes, but even slight deviations, that would otherwise be considered negligible, could have an impact whenever we have to rely on just one LSB or two.

To cut a long story short, whenever you have such borderline situations, you should try to find a better (less ambiguous) trigger condition, such as a related signal that has a predictable time correlation to the glitch you want to observe. Then you can use trigger delay to view the part of your signal you’re interested in. Even with just the original signal, a combination of trigger delay and hold-off time might give a more reliable trigger condition: trigger on something more unique, even if it’s far away from the signal portion you want to observe, then use trigger delay to bring the interesting part of the signal into view and/or hold-off time to ignore repeated occurrences of the selected trigger condition, so that the scope always triggers on the first one in a row.

Take this periodic speech signal as an example. Edge trigger together with the appropriate hold-off time provides a stable picture. Use trigger delay or zoom to have a closer look at the interesting part (e.g. the peak amplitude):

SDS2304X_Trigger_Edge_Holdoff
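The hold-off plus trigger delay idea can be modelled in a few lines of Python. This is a simplified sketch: it assumes hold-off is measured from the last accepted trigger (real scopes may define the start point differently), and the function names and edge times are made up for illustration.

```python
def apply_holdoff(crossings, holdoff):
    """Keep only trigger candidates at least `holdoff` after the last accepted one.

    crossings: times (same unit as holdoff) at which the basic edge condition is met.
    """
    accepted = []
    next_allowed = float("-inf")
    for t in crossings:
        if t >= next_allowed:
            accepted.append(t)
            next_allowed = t + holdoff   # ignore everything until the hold-off expires
    return accepted


def view_windows(triggers, delay, width):
    """Time windows shown on screen when a trigger delay is applied."""
    return [(t + delay, t + delay + width) for t in triggers]


# Two bursts of edges (times in ms); only the first edge of each burst should trigger
edges = [0, 1, 2, 3, 100, 101, 102]
trig = apply_holdoff(edges, holdoff=50)
print(trig)                                   # [0, 100]
print(view_windows(trig, delay=2, width=1))   # [(2, 3), (102, 103)]
```

Because every accepted trigger is the first edge of its burst, the delayed view window always lands on the same part of the signal, which is what gives the stable picture.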

“Foul!”, I hear you shout. “My signal is continuous, no gaps I could trigger on and the hold-off trick would not work!”.

Fair enough.

Let’s get a stable trigger somewhere later, after the burst has started. We have a bunch of peaks with very similar amplitudes. Just use a trigger level that is high enough to distinguish this bunch of peaks from the rest of the signal. Then again, we get a stable picture and can use trigger delay or zoom for closer inspection.

SDS2304X_Trigger_Interval

-

Quote

Don’t forget that we need two reliable thresholds to calculate the slope. If you dispense with the hysteresis, the thresholds could become unstable and ambiguous due to slow edges and/or noise.

That same thing came to my mind while I was out for a walk. I had forgotten there is more to the slope trigger than just 2 voltage levels, perhaps because so far I have mostly used it for just those voltage levels: to trigger on edges that are large enough or in a desired voltage range, with the time limits used only loosely to enforce some sort of edge (e.g. less than 30% of the period or so).

As for the practical case: IIRC, the margin could have been more than just a few LSBs (considering how much detail there was still to see), but I do admit it was still a tight squeeze, and the hysteresis could have been in play.

I did manage to stabilize the view enough eventually, with some method I don't remember any more. (I might have written it down in that project's notes, but, maybe some day... it was a failed project anyway; the unit was too broken to repair.) If I had to guess, I may have even used single shot, ignored the actual trigger moment (just triggered somewhere/anywhere) and abused the deep memory, just scrolling to the interesting view, or something like that.

The signal was on the secondary side of the 5V standby supply; there were no other related signals I could probe, since the primary side was a definite no-no (I don't have the probe needed for that). The tricky part of that waveform was that while it is continuous, it is not fully periodic; it switches between operating modes as needed. (I did manage to fix that 5Vsb, but the main PFC+PWM section had its chip busted, and no replacement was available.)

Maybe I could manage better triggering for such a case now, with a bit more knowledge. I do have another PSU (with fewer issues) waiting...

-

Quote

Yes, the scope is supposed to restore its previous state after a reboot.

Problem is, it is nearly impossible to work out a reproduction, since the problem appears so randomly. I would have to record every action I make with the scope, reboot, and go through all settings after that reboot to see if the issue appeared... And it would likely result in a really long sequence to follow, if it even reproduces the issue. All I can say is that if I use the scope more than a trivial amount, it seems almost guaranteed that something changes after a reboot.

Yes, there have been several complaints in the past that certain parameters have not been properly restored, and most of these problems have been fixed.

I’m currently not aware of any such issues but would not be surprised if there were still some left.

If you find something, please document it, describe how to reproduce it and post it here. We can then verify the issue and make Siglent aware of it.

(To clarify, by reboot I mean a long press on the power button to turn it "off", then a short one to turn it back on; the scope is powered the whole time, i.e. the power button stays lit.)

And at the latest power-on (yesterday) it happened again, including another setting besides the ones I mentioned previously: the trigger type had changed, but, as a new entry, the trigger channel had also changed from 1 to 2. (And the wavegen waveform, and possibly some others I just didn't get to look at or that don't have any visible effect.)

But this does give some ideas on how to approach testing it. It still takes some work though, so don't hold your breath.

-

I continued studying the "jumpiness" of the low-level vertical position stuff, now using the DC output from the wavegen with a 50ohm setup and a direct cable, to get more consistent noise than with the probe (which was affected by probe and hand/arm positions etc.). I also switched to 2mV/div (the lowest true sensitivity) to eliminate any possible issues arising from the "fake" 1mV/div, and used cursors to make the movements easier to spot, with and without 5secs of persistence (to get a steadier noise "band").

And, it actually is even a bit weirder than I had described previously.

The same phenomenon can still be observed, though the "jumps" now happen every 6 steps of vertical position in auto trigger mode, corresponding to 3 LSBs if my calculations are correct (instead of every 11/12 steps as on 1mV/div). A single capture (trigger's single-single) moves up/down every 2 vertical position steps (thus corresponding to 1LSB, so I guess this would be as expected).

(The calcs for LSB: 2mV/div, 8 divs = 16mV total, div by 200 (as mentioned for the resolution), 0.08mV per LSB, vertical position gets changed (numerically) by 0.04mV per step = 0.5LSB.)
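The same LSB arithmetic as a small Python helper, using the figures quoted in this thread (8 divisions, 200 ADC codes across the screen, 0.04mV position steps at 2mV/div). It also reproduces the 80mV LSB at 2V/div mentioned earlier.

```python
DIVISIONS = 8            # vertical divisions on screen
CODES_ON_SCREEN = 200    # ADC codes spanning those divisions (figure used in the thread)

def lsb_volts(volts_per_div):
    """Voltage represented by one ADC code at a given vertical gain."""
    return volts_per_div * DIVISIONS / CODES_ON_SCREEN

lsb = lsb_volts(2e-3)                 # 2 mV/div
pos_step = 0.04e-3                    # vertical position step shown in the UI
print(f"{lsb * 1e3:.2f} mV per LSB")             # 0.08 mV
print(f"{pos_step / lsb:.1f} LSB per step")      # 0.5
print(f"{6 * pos_step / lsb:.1f} LSB per jump")  # 3.0 (a jump every 6 steps)
print(f"{lsb_volts(2.0) * 1e3:.0f} mV per LSB at 2 V/div")  # 80
```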

The new finding this time was that if one takes a new single capture directly (another press of that single button), its offset will actually match how the auto-trigger's offset is shown, i.e. it will change only every 6th step (every 3 LSBs) of position adjustment, not every 2 steps (1 LSB). For any offset value among those 6 within the "same effective offset", the new single sweeps will be shown at the same level, and adjusting the offset/position of the "frozen" sweep will move it up/down every 2 steps of position adjustment.

For example, I could take sweeps at any offset from -1.04mV to -1.24mV and their level was the same (within the tight cursor "window"); at -1.00mV it would jump (a bit out of the window on one side), and at -1.28mV it would jump (a bit out of the window on the other side).
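One way to model this observation is a coarse offset DAC behind a finer UI control. The Python sketch below is hypothetical: the 6-steps-per-DAC-step ratio comes from the observed jumps, while the floor quantizer and the grid alignment (GRID_PHASE) are assumptions picked only so that the -1.04mV to -1.24mV group comes out as in the example above.

```python
UI_STEP_MV = 0.04   # vertical position step at 2 mV/div, as shown in the UI
DAC_STEPS = 6       # UI steps per offset-DAC step (~0.24 mV, inferred from the jumps)
GRID_PHASE = 5      # hypothetical alignment of the DAC grid, picked to match the observation

def dac_code(ui_index):
    """Coarse DAC code for UI offset ui_index * UI_STEP_MV (assumed floor quantizer)."""
    return (ui_index - GRID_PHASE) // DAC_STEPS

for n in range(-25, -33, -1):          # -1.00 mV down to -1.28 mV
    print(f"{n * UI_STEP_MV:+.2f} mV -> DAC code {dac_code(n)}")
```

Under this model, -1.00mV maps to code -5, everything from -1.04mV to -1.24mV shares code -6, and -1.28mV maps to code -7, i.e. six UI steps per visible jump in the live trace.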

Note: if the input coupling is set to GND, then even the auto-triggered line changes offset every 2 steps of vert pos change (as expected).

I know it is tiny stuff, but still...

4 screenshots, with 5sec persistence, auto-trigger mode. For every screenshot, cursors were not changed, only the vertical position was adjusted between the mentioned -1.00mV and -1.28mV. I looked at every step in between, but only recorded the significant ones. (I also have tens of other screenshots of the work getting to this point, but they don't really reveal anything more.) Same thing happens with other positions, those values are where I just happened to end up at the time of getting these pics.

Note how the "jump" in the signal level is clearly more than the 2 pixels (of 1 LSB), and matches the same 6 steps * 0.04mV by which the vertical offset/position had to be adjusted to make the jump happen.

I wonder if the minimum resolution of the vertical offset control (on the analog side) is different (i.e. bigger steps), not matching the input resolution? (It does not have to be the same if there is headroom in the input range (as there seems to be), but then the difference should be corrected on the digital side, or the UI/control should simply have bigger offset steps.) I couldn't find info on that offset step size in the datasheet.

I hope this version explains better what I have been after.

(P.S. the waveform update rate in auto-trigger mode also seems to depend on the particular offset level, from butter smooth to "flashy", but I think that is another story.)

-

I wonder if the minimum resolution of the vertical offset control (on the analog side) is different (i.e. bigger steps), not matching the input resolution? (It does not have to be the same if there is headroom in the input range (as there seems to be), but then the difference should be corrected on the digital side, or the UI/control should simply have bigger offset steps.) I couldn't find info on that offset step size in the datasheet.

Yes, now I’ve got it

I hope this version explains better what I have been after.

The offset is not a mathematical operation but a true physical internal voltage fed into the DC path of the scope input buffer in order to compensate for any internal or external DC offset. This voltage is generated by a DAC, which can have whatever resolution the designer has chosen. The acquisition hardware, i.e. PGA (Programmable Gain Amplifier) and ADC (Analog to Digital Converter), does not know where the DC component comes from: whether it’s part of the signal or internally generated. The scope software knows it of course, displays the internal offset and combines it with the ADC samples in order to calculate correct measurements.

Quite obviously, the resolution of the DAC used for the internal DC offset generation is not high enough for the highest sensitivities, i.e. below 10mV/div. At least from 10mV/div upwards (probe multiplier x1 assumed), the offset does not “jump” anymore. Maybe even at 5mV/div – it’s hard to tell.

All this comes as no surprise, as the offset compensation has to work up to 148mV/div (there is an error in the datasheet btw, claiming the attenuator thresholds to be at 102mV/div and 1.02V/div respectively, whereas they actually are at 150mV/div and 1.5V/div).

So let’s do the math: up to 148mV/div we get an offset range of +/-1V (x1 probe multiplier). The smallest step we can get for the offset appears to be some 240µV, so the DAC number range would be at least (2 x 1V)/240µV = 8333, which would even exceed the number range of a 13-bit DAC (8192). Well, that doesn’t make much sense, so the actual conditions are obviously slightly different, but we can see that there is at least a 12-bit DAC involved, maybe with separate sign generation, which makes it effectively 13-bit.
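The same estimate in Python, using the +/-1V offset range and the ~240µV step observed above:

```python
import math

offset_span_v = 2 * 1.0   # +/-1 V offset range up to 148 mV/div (x1 probe)
step_v = 240e-6           # smallest offset step observed at high sensitivity

codes = offset_span_v / step_v
print(f"{codes:.0f} codes needed")             # 8333
print(f"{math.log2(codes):.2f} bits")          # 13.02
print(f"13-bit DAC: {2**13} codes")            # 8192
```

Taken at face value, the numbers call for just over 13 bits, which is why the actual step size or range is probably slightly different from these rough observations.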

Anyway, the DAC does not absolutely need to have such a high resolution that it can match the ADC resolution even at the highest sensitivities – and here is why:

First we should ask, what the vertical position/offset is used for.

We certainly use it within the visible screen area to position the trace vertically as we like. For that, we normally don’t absolutely need super high resolution. Yes, sometimes we want to line up two traces precisely and we might not be able to do that below 10mV/div, but for the majority of applications it’s not that critical.

Then we have the situations, where a weak AC signal is riding on a high DC voltage, such as ripple and noise on a power rail. Well, there we can use AC-coupling for the input channel, hence get rid of the DC offset and again the position control (that handles the internal DC offset) is just a means for vertical trace positioning.

But now there are also the less common applications, where we want to measure both the DC and AC portion, hence do not want to get rid of the external DC offset by means of channel AC coupling. Consider a +5V power supply rail that has some ripple on top. We want to tweak the circuit and observe the ripple, but monitor the supply voltage at the same time. Let’s assume the ripple isn’t that high, say some 100mVpp. If we use a vertical gain that allows us to view both, we have to choose 1V/div and the ripple would be just one tenth of a division – we could barely see anything.

The solution is turning the position down, way outside the visible area to some -5V in order to be able to observe the ripple with high sensitivity:

SDS2304X_Offset_1

Assuming a x10 probe, we can now get a clear picture of the ripple at 50mV/div, but still accurately measure the DC voltage at the same time (mean value of the voltage, measured as 5.01V).

In this scenario it once again does not matter where within the visible screen area the AC portion of the signal sits, so the resolution of the offset compensation is not critical. An offset of -5.05V works just as well as -4.95V:

SDS2304X_Offset_2

The true vertical gain in this scenario is 5mV/div (x10 probe factor!). Yet I was under the impression I was able to fine position the trace.
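The x10 probe bookkeeping can be spelled out with a trivial helper (hypothetical name). It shows what the scope's BNC input actually sees for the settings above, and checks the input-referred offset against the +/-1V range quoted earlier for gains up to 148mV/div.

```python
def input_referred(volts_per_div, offset_v, probe_factor):
    """Translate probe-tip settings into what the scope's BNC input actually sees."""
    return volts_per_div / probe_factor, offset_v / probe_factor

vdiv_in, offset_in = input_referred(volts_per_div=0.050, offset_v=-5.0, probe_factor=10)
print(f"{vdiv_in * 1e3:.0f} mV/div, {offset_in:+.2f} V offset at the input")  # 5 mV/div, -0.50 V
print(abs(offset_in) <= 1.0)     # True: within the +/-1 V offset range up to 148 mV/div
```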

So we need to understand that the position control just applies a DC voltage to the input buffer, which in turn adds a DC offset to the signal, and this can also be used to compensate for a DC component of the signal itself. All it has to do is to bring the AC portion of the signal within the visible (hence measurable) screen area, but the exact position does not matter.

This should now also explain why the self-cal can never be perfect, at least not for the offset compensation – simply because the very same mechanism is used to compensate the internal (unwanted) DC offset. Since the resolution of the DAC has been found to be some 240µV, we cannot get an exact offset compensation even immediately after a self-cal.

-

All that theory I already knew. The "issue" I have with the results I found (or with not having 1 LSB level 0-offset self-calibration) is that the scope obviously knows how to adjust offset at the 1LSB resolution (as proven with the frozen sweep position adjustment and GND-coupled input), but the normal input samples are not behaving the same.

My addition to the theory section:

As long as there is headroom in the ADC (e.g. showing only 200 value range with 8-bit ADC which has total range of 256 steps), the "fine-tuning" of the offset (to within ADC's resolution, or actually even better than that if samples (EDIT: converted to) have enough bits) can be done with software/digital. (It can be done even with no ADC headroom, but then either the top or bottom end would have a bit of clipping.)

As an example: say, having settings where ADC resolution would correspond to exactly 1mV, but offset system provides only 10mV resolution, and we would like 6mV offset. If the system then chooses the closest analog offset (10mV), the ADC gives values that are 4mV off the mark (too high offset). But the system knows that, so it can just subtract 4mV offset from every sample in digital/software, and now the final samples are offset by the desired 6mV.
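The example above can be sketched in Python as a coarse analog offset plus a digital fine correction. The function names are hypothetical and this only illustrates the proposal; as discussed further down, it is not what the scope actually does during acquisition. Values are integers in mV to keep the arithmetic exact.

```python
def split_offset(desired_mv, analog_step_mv):
    """Pick the nearest coarse analog offset and return (applied, residual)."""
    applied = round(desired_mv / analog_step_mv) * analog_step_mv
    residual = applied - desired_mv     # how far the analog offset overshoots
    return applied, residual

def correct(raw_samples_mv, residual_mv):
    """Digital fine correction: remove the residual from every sample."""
    return [x - residual_mv for x in raw_samples_mv]

applied, residual = split_offset(desired_mv=6, analog_step_mv=10)
print(applied, residual)              # 10 4
raw = [10, 11, 9]                     # a 0 mV +/-1 mV signal seen through the 10 mV offset
print(correct(raw, residual))         # [6, 7, 5]
```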

So, this scope does know how to do the math, as it can do the same software offset adjustment with the frozen sweep samples (single-triggered), but it seems it is not doing it with the incoming sample data flow. And because it has not corrected the incoming data, the shown values both in the running waveforms (except with GND-coupled input) and in the single-triggered frozen sweep can be also slightly wrong. In single-trigger view it is only calculating the "fine-tuned" offset change on top of the non-corrected sample data, it does not apply the correction at any point, even during this post-processing where it would have all the time it needs and then some.

At least, that is one theory on how the behavior could be explained.

In any case, the shown samples do not have the fine-step offset shown to user (except in every 1 in 6 offset or so, EDIT: and no way to know which one of those 6 steps would give a physical offset that matches the shown offset) (and ignoring absolute accuracy, only considering the relative accuracy and resolution). Either the scope should apply the corrective math (one signed 8-byte addition operation per sample) or let the user only adjust the offset with the actual resolution available (since it apparently can not be controlled as accurately as let to believe anyway). (I am also aware that likely the best offset step size in those lowest ranges would be about double of what the steps are doing now in 2mV/div sensitivity, I'd be fine with that.) Both solutions would end with samples with correct offset; one needs a tiny bit more calculations and gives smoother offset control, the other with visibly coarser offset control but matching what the analog side can actually do.

(Note that the corrective addition does not need to be done on every single input sample, only on the shown samples, but then everything else needs to account for this dualism of a different "effective input sample offset" and a "higher-resolution visual offset". E.g. trigger levels would need to work with the same effective offset that the input data is handled with, but the levels must be shown with the same "visual offset" as the samples. So, the added complexity of such a solution would just be asking for more bugs... Adding an offset correction to every single input sample is simple, but it does need that one calculation, and there can be quite a number of samples coming in; are there enough processing resources in the system...?)
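A tiny Python illustration of that dualism, with hypothetical names and values: if the raw data keep the uncorrected analog offset, a trigger level set on the displayed scale must be shifted by the same residual before it is compared with the raw samples, otherwise trigger and display disagree.

```python
def rising_crossings(samples, level):
    """Indices where samples cross `level` going upward."""
    return [i for i in range(1, len(samples)) if samples[i - 1] < level <= samples[i]]

residual = 4                      # analog offset overshoot (mV), uncorrected in the raw data
raw = [8, 9, 11, 12]              # samples as acquired (mV)
displayed = [x - residual for x in raw]   # what the user would see after correction

user_level = 6                    # trigger level set on the displayed scale
# Triggering on the raw data must shift the level by the same residual.
print(rising_crossings(displayed, user_level))            # [2]
print(rising_crossings(raw, user_level + residual))       # [2]
```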

An additional question is related to that GND-coupled input. How can its offset be controlled in that 1LSB resolution? I'd have assumed that it would be simply a switched connection to ground at the AFE input (or similar), and thus should have the exact same normal analog side processing. Showing the internal noise and offset calibration errors, and those ~3LSB jumps with offset adjustment. But since GND-coupled input offsetting moves every 2 steps (1 LSB), something is very much different for them. Is the GND switched to the path after the analog offset injection and given all of the offset with digital "correction"? Or even later, just before ADC (as it does not need any gain either)? Or simply digital simulation (with tiny bit of simulated noise on it)?

-

All that theory I already knew.

Sorry, but from your replies this is not always absolutely clear to me – and then there might be others coming across this thread who may actually find it useful to get the full explanations posted here. I don’t mean to bore anybody, but still prefer to provide complete information.

So for this posting I apologize in advance that I will write a lot of things that you probably know already

The "issue" I have with the results I found (or with not having 1 LSB level 0-offset self-calibration) is that the scope obviously knows how to adjust offset at the 1LSB resolution (as proven with the frozen sweep position adjustment and GND-coupled input), but the normal input samples are not behaving the same.

These are totally different scenarios. Whenever the acquisition is stopped (like after a Single Trigger event), the DC-offset cannot control the trace position anymore. Now moving the trace on the screen is a pure graphical task.

The same applies for GND coupling. This is no physical shorting of the scope input as it was on old analog scopes. It is just software, and the (zero) trace is shown at the position where it is supposed to be according to the offset setting, hence the internal DC offset voltage cannot have any impact. There have been folks wondering why the offset is different between GND coupling and actually shorting the scope input at high input sensitivities, and this is the answer to it.

My addition to the theory section:

You are right, it could be done that way – on a very different scope that is, one that would be slow like a turtle.

As long as there is headroom in the ADC (e.g. showing only 200 value range with 8-bit ADC which has total range of 256 steps), the "fine-tuning" of the offset (to within ADC's resolution, or actually even better than that if samples have enough bits) can be done with software/digital. (It can be done even with no ADC headroom, but then either the top or bottom end would have a bit of clipping.)

As an example: say, having settings where ADC resolution would correspond to exactly 1mV, but offset system provides only 10mV resolution, and we would like 6mV offset. If the system then chooses the closest analog offset (10mV), the ADC gives values that are 4mV off the mark (too high offset). But the system knows that, so it can just subtract 4mV offset from every sample in digital/software, and now the final samples are offset by the desired 6mV.

Fact is, however, that this is not the case. The full ADC range is used for signal acquisition only, nothing of that is sacrificed for calibration and/or fine-adjust purposes.

Here’s the proof: a 500mVpp signal is too high in amplitude to fit on the screen at a 50mV/div vertical gain setting.

SDS2304X_Daynamic_500mVpp_50mV

Note that the peak to peak measurement is still correct (within tolerances), even though the screen height is only 400mV.

If we stop the acquisition and turn up the vertical gain setting to 100mV/div, we can see the full waveform, nothing misaligned, nothing clipped:

SDS2304X_Daynamic_500mVpp_100mV

So, this scope does know how to do the math, as it can do the same software offset adjustment with the frozen sweep samples (single-triggered), but it seems it is not doing it with the incoming sample data flow. And because it has not corrected the incoming data, the shown values both in the running waveforms (except with GND-coupled input) and in the single-triggered frozen sweep can be also slightly wrong. In single-trigger view it is only calculating the "fine-tuned" offset change on top of the non-corrected sample data, it does not apply the correction at any point, even during this post-processing where it would have all the time it needs and then some.

The scope does not do any adjustments when in Run mode. There is no time for that either. It just collects ADC samples in the sample memory and then flushes out the whole bunch to the display every 40 milliseconds. The SDS2000(X) does manipulate the sample data in Average and Eres acquisition modes though, and this limits the usable sample memory to a total of 28k and, on top of that, slows down the waveform update speed to something close to the screen update rate.

Note: this is different for the newer SDS1000X-E models, which do it in hardware and can use deep memory and reach full speed in these modes.

There is also no point in modifying the data in Stop mode all of a sudden. The scope just shows the very last acquisition in this mode, just as it has been displayed during Run (there it was not alone but together with numerous previous acquisitions).

Only when you alter the vertical position in Stop mode does it do some math to reposition the trace to where it’s supposed to be with the new offset, and usually the assumptions are correct, so you don’t see any major jump when starting Run mode again.

In any case, the shown samples do not have the fine-step offset shown to user (except in every 1 in 6 offset or so, EDIT: and no way to know which one of those 6 steps would give a physical offset that matches the shown offset) (and ignoring absolute accuracy, only considering the relative accuracy and resolution). Either the scope should apply the corrective math (one signed 8-byte addition operation per sample) or let the user only adjust the offset with the actual resolution available (since it apparently can not be controlled as accurately as let to believe anyway). (I am also aware that likely the best offset step size in those lowest ranges would be about double of what the steps are doing now in 2mV/div sensitivity, I'd be fine with that.) Both solutions would end with samples with correct offset; one needs a tiny bit more calculations and gives smoother offset control, the other with visibly coarser offset control but matching what the analog side can actually do.

Yes, now we’re talking about a change request.

I agree that it would be better to display the actual values, but I think I can understand why it is the way it is now. The UI is kind of universal and defines a certain resolution for the offset control. Some low level driver has to translate that request to the actual hardware, which is just not capable of fulfilling it at the very high sensitivities in this particular case. The UI doesn’t know that and it would not be just a small change to implement that. A new API would be required for the UI to query the driver about the capabilities of the hardware and then the proper restrictions have to be applied. But at least it would not be out of the question to do that.

The other approach though, applying some software processing during run to make up for insufficient DAC resolution, is clearly not going to happen – I can smell that in advance

(Note that the corrective addition does not need to be done on every single input sample, only on the shown samples, but then everything else needs to account for this dualism of a different "effective input sample offset" and a "higher-resolution visual offset". E.g. trigger levels would need to work with the same effective offset that the input data is handled with, but the levels must be shown with the same "visual offset" as the samples. So, the added complexity of such a solution would just be asking for more bugs... Adding an offset correction to every single input sample is simple, but it does need that one calculation, and there can be quite a number of samples coming in; are there enough processing resources in the system...?)

There are no samples that are not shown on the screen. This is one of the points of the Siglent X-series scopes: they don’t hide any real data, and users can always see everything that has been captured at a glance. A single video frame on the screen, updated every 40 milliseconds, contains up to thousands of trigger events, and the entire sample memory is crammed into that display. Yes, many samples will overlap that way, but you’ll never miss a peak or glitch as long as the effective sample rate is high enough to capture it in the first place, and this is also why intensity grading works so well with these scopes.
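A generic way to cram a whole sample memory into a fixed pixel grid without dropping anything is to count hits per pixel, which is also what intensity grading displays. The Python sketch below is a simplified model under assumed names; the column mapping and rounding are assumptions, not Siglent's actual rendering pipeline.

```python
import math

def intensity_map(acquisitions, columns, rows, v_min, v_max):
    """Accumulate every sample of every acquisition into a rows x columns hit-count grid.

    Several samples may land in the same pixel, but none is dropped, so a single
    short glitch still leaves a (faint) hit.
    """
    hits = [[0] * columns for _ in range(rows)]
    for trace in acquisitions:
        n = len(trace)
        for i, v in enumerate(trace):
            col = i * columns // n                                   # time -> screen column
            row = math.floor((v - v_min) / (v_max - v_min) * (rows - 1) + 0.5)
            row = min(max(row, 0), rows - 1)                         # clip to the screen
            hits[row][col] += 1
    return hits

# Eight samples squeezed into four columns; the last sample is a one-sample glitch.
grid = intensity_map([[0, 0, 0, 0, 0, 0, 0, 4]], columns=4, rows=5, v_min=0, v_max=4)
for row in reversed(grid):                    # print top row first
    print(row)
```

The glitch survives as a single hit in the top row, while the baseline pixels collect two hits each; with thousands of acquisitions per frame, those counts become the intensity grading.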

You have just identified a number of impacts that a software correction of the input offset would have on other areas, like triggering and measurements, so it is not just an easy coffee-break change. But the biggest argument against such an implementation would be the impact on performance, as already stated before. Post-processing every single sample in a scope that can use up to a total of 280Mpts (with both ADCs active) is just not going to happen.

An additional question is related to that GND-coupled input. How can its offset be controlled in that 1LSB resolution? I'd have assumed that it would be simply a switched connection to ground at the AFE input (or similar), and thus should have the exact same normal analog side processing. Showing the internal noise and offset calibration errors, and those ~3LSB jumps with offset adjustment. But since GND-coupled input offsetting moves every 2 steps (1 LSB), something is very much different for them. Is the GND switched to the path after the analog offset injection and given all of the offset with digital "correction"? Or even later, just before ADC (as it does not need any gain either)? Or simply digital simulation (with tiny bit of simulated noise on it)?

I’ve already mentioned that at the beginning. Since the data from the acquisition are ignored in this coupling type, the scope has nothing to correct. It just draws a line at the position that is set by the vertical position control. It’s a pure graphical operation.

I for one don’t see any noise in GND mode, not even at the zoomed 1mV/div gain setting. But I admit that I do not know where exactly the switch is implemented. From some experiments, my first suspicion would be that simply the data transfer between ADC and sample buffer is stopped.

-

No worries, by all means, do be thorough (I usually do just the same with my responses in similar situations, that is, when I can); it was just to let you know what info I already had when making my earlier replies. But I'm still (re)learning (as is obvious from the hysteresis case).

All that theory I already knew.

Sorry, but from your replies this is not always absolutely clear to me – and then there might be others coming across this thread who may actually find it useful to get the full explanations posted here. I don’t mean to bore anybody, but still prefer to provide complete information.

So for this posting I apologize in advance that I will write a lot of things that you probably know already

Quote


My addition to the theory section:

You are right, it could be done that way – on a very different scope, that is, one that would be slow like a turtle.

As long as there is headroom in the ADC (e.g. showing only a 200-value range with an 8-bit ADC that has a total range of 256 steps), the "fine-tuning" of the offset (to within the ADC's resolution, or actually even better than that if the samples have enough bits) can be done digitally in software. (It can be done even with no ADC headroom, but then either the top or the bottom end would have a bit of clipping.)

As an example, say the settings are such that the ADC resolution corresponds to exactly 1mV, but the offset system provides only 10mV resolution, and we would like a 6mV offset. If the system then chooses the closest analog offset (10mV), the ADC gives values that are 4mV off the mark (too high an offset). But the system knows that, so it can simply subtract 4mV from every sample digitally, and the final samples are then offset by the desired 6mV.
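The coarse/fine split in this example can be sketched in a few lines of Python. This is a hypothetical model, not how the SDS2000X actually does it: `split_offset` and `correct_samples` are illustrative names, values are in integer millivolts, and the ADC step is assumed to be 1mV as in the example.

```python
def split_offset(desired_mv: int, analog_step_mv: int) -> tuple[int, int]:
    """Split a desired offset into a coarse analog part and a digital residual.

    The (hypothetical) offset DAC can only produce multiples of analog_step_mv,
    so take the nearest multiple; Python's round() sends exact ties to the
    even multiple.
    """
    analog_mv = round(desired_mv / analog_step_mv) * analog_step_mv
    residual_mv = analog_mv - desired_mv  # how much the analog offset overshoots
    return analog_mv, residual_mv


def correct_samples(raw_mv: list[int], residual_mv: int) -> list[int]:
    """Remove the known overshoot from every sample (the digital fine-tuning)."""
    return [s - residual_mv for s in raw_mv]
```

With the numbers from the example, `split_offset(6, 10)` returns `(10, 4)`: the DAC is set to 10mV and 4mV is then subtracted from each sample, leaving the desired 6mV offset.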

There is also no point in modifying the data in Stop mode all of a sudden. The scope just shows the very last acquisition in this mode, just as it has been displayed during Run (there it was not alone but together with numerous previous acquisitions).

The latter part is where I see a difference: it does not move the trace to where it should be (considering the numeric offset value), but moves it relative to the captured level. For example, I could take a single trace at the earlier -1.24mV offset level, then move the offset to the -1.04mV level (5 numeric offset steps up, two visible 1 LSB steps up on the display). If I then press either another single capture or auto trigger, the new samples appear back on the same lower level that I had at -1.24mV (or at any offset between that and -1.04mV). It is not a major jump, per se, but still a jump.
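The numbers in this example can be checked with a little exact arithmetic. The inputs are the two offsets, the 5 steps, and the 2-steps-per-LSB figure from this thread; the idea that the trace snaps to whole LSBs, so that the number of visible steps depends on where the move starts, is an assumption made for illustration.

```python
from fractions import Fraction

start_mv = Fraction("-1.24")   # offset before the move, mV
end_mv = Fraction("-1.04")     # offset after the move, mV
n_steps = 5                    # numeric offset steps between them
steps_per_lsb = 2              # the trace moves every 2 offset steps (1 LSB)

step_uv = (end_mv - start_mv) / n_steps * 1000  # one numeric offset step, in µV
lsb_uv = step_uv * steps_per_lsb                # implied LSB size, in µV
move_lsb = Fraction(n_steps, steps_per_lsb)     # total move, in LSB


def visible_steps(phase: int) -> int:
    """LSB boundaries crossed by the move, assuming the display snaps to whole LSBs.

    phase is the starting position within an LSB, in offset steps
    (0 .. steps_per_lsb - 1).
    """
    return (phase + n_steps) // steps_per_lsb - phase // steps_per_lsb
```

So one numeric step is 40µV, 1 LSB is 80µV, and the move is 2.5 LSB; under the snapping assumption it shows up as either 2 or 3 visible steps, and the observed 2 is one of those outcomes.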

Only when you alter the vertical position in Stop mode does it do some math to reposition the trace to where it’s supposed to be with the new offset – and usually the assumptions are correct, so you don’t see any major jump when starting Run mode again.

Basically, even the relative offset error has an uncertainty of around that 3 LSB.

So either Stop mode could use the correct fine offset, causing a small visible jump when going from Run to Stop mode but showing the offset level as accurately as possible, or it works like it does now, with a similar jump when leaving Stop mode (taking a new sweep or switching to Run mode) and an unknown small error between the shown offset and the shown signal.

There are no samples not showing on the screen. This is one of the points of the Siglent X-series scopes: they don’t hide any real data, and users can always see everything that has been captured at a glance. A single video frame on the screen, updated every 40 milliseconds, contains up to thousands of trigger events, and the entire sample memory is crammed into that display. Yes, many samples will overlap that way, but you’ll never miss a peak or glitch as long as the effective sample rate is high enough to capture it in the first place, and this is also why intensity grading works so well with these scopes.

The maximum rate of incoming samples is, I think, 4GSa/s (2+2). Modern cheap DSP tech or a parallel processing unit in a CPU can handle that trivially. E.g. with 64 byte-sized additions in parallel (and the other operand is always the same number, so no bandwidth is wasted moving varying operands, only the samples), a 62.5MHz rate is enough. (Even the original Pentium MMX was close to handling that, 20 years ago.) As I mentioned, I was musing about this kind of stuff back then (not for scope samples but for SDR processing). It was not quite as trivial back then, or needed a chip that cost hundreds of $$$.
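The throughput estimate is simple arithmetic; the sketch below also shows the per-sample operation being counted, namely the same constant added to every 8-bit sample with saturation, written as plain Python rather than SIMD. The 64-lane width is the post's example figure, and `add_offset_saturating` is a hypothetical helper.

```python
SAMPLE_RATE = 4_000_000_000  # 4 GSa/s total (2 + 2), as estimated in the post
LANES = 64                   # byte-sized additions done per parallel operation

ops_per_second = SAMPLE_RATE / LANES  # rate at which the parallel adds must run


def add_offset_saturating(samples: bytes, offset: int) -> bytes:
    """Add the same constant to every 8-bit sample, clamping to 0..255.

    This is what each lane of a saturating SIMD byte add would do; here it is
    written as a plain loop for clarity, not speed.
    """
    return bytes(min(255, max(0, s + offset)) for s in samples)
```

`ops_per_second` comes out at 62.5 million, matching the 62.5MHz figure above.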

You have just identified a number of impacts that a software correction of the input offset would have on other areas, like triggering and measurements, so it is not just an easy coffee-break change. But the biggest argument against such an implementation would be the impact on performance, as already stated before. Post-processing every single sample in a scope that can use up to a total of 280Mpts (with both ADCs active) is just not going to happen.

However, the alternative approach does not need to process all samples individually; it could first render them to the visual data (a maximum of 640x400 pixels, in a separate temporary layer), then translate those pixels by the calculated screen-space offset. That is about two orders of magnitude fewer calculations. There would, however, be that added complexity. Well, since it does need to do the addition (and other stuff) to place the samples into pixels in the first place, it is just a matter of changing that one value... see the paragraph after the next one.
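A minimal sketch of this alternative, assuming the display layer is a simple hit-count grid (the names `rasterize` and `shift_rows` and the integer sample-to-row mapping are hypothetical, not Siglent's implementation). The samples are binned into pixels once, and the correction is then a shift of the whole layer, whose cost depends only on the grid size, not on the number of samples.

```python
def rasterize(samples: list[int], width: int, height: int,
              lo: int, hi: int) -> list[list[int]]:
    """Accumulate samples into a height x width hit-count grid (row 0 = top).

    Sample i goes to column i * width // len(samples); values outside lo..hi
    fall off the grid and are simply not counted.
    """
    grid = [[0] * width for _ in range(height)]
    n = len(samples)
    for i, s in enumerate(samples):
        x = i * width // n
        y = (hi - s) * (height - 1) // (hi - lo)
        if 0 <= y < height:
            grid[y][x] += 1
    return grid


def shift_rows(grid: list[list[int]], dy: int) -> list[list[int]]:
    """Translate the whole layer vertically by dy rows (positive = down)."""
    height, width = len(grid), len(grid[0])
    out = []
    for y in range(height):
        src = y - dy
        out.append(list(grid[src]) if 0 <= src < height else [0] * width)
    return out
```

One part of the complexity shows up directly: rows shifted past the edge of the layer are lost, so a trace near the top or bottom of the screen would need extra handling.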

Also, even if the offset correction were calculated for each sample in Stop mode, as I said, there is (usually) ample time, relatively speaking. A (slow) human user is looking at it, so it does not have to be "instant", just faster than it takes the user to "process" the data he is seeing.

And anyway, the scope is already doing that offset calculation stuff in Stop mode, just with the slightly "wrong" offset, so processing time obviously hasn't been a problem (in that mode). All it would need to do is add the internal corrective value to the numeric offset before doing what it is already doing. (Though, as mentioned, this would make the "jump" happen when switching from Run to Stop mode, and Run mode would still show a slightly wrong offset.)

See the attachment of the GND-coupled trace, using single trigger at 1V/div (it looks similar at any sensitivity, but these are samples, and they are affected by sensitivity changes afterwards). Whether to call it noise or something else, I don't know, but it certainly isn't a flat line.

-


Siglent SDS2000X is not alone with this. Same with SDS1000X-E series and SDS2000 (no X).

With input coupling set to GND, there are still some changes in the sample queue, also when all ADCs work in non-interleaved mode (all channels on).

-

Actually, it does not need to be slow (with proper hardware), but it might not be cheap or easy, or possible with the tech in this scope. I was thinking about such solutions 15-20 years ago with the DSP chips available back then, which IIRC had just 8 parallel units capable of addition (each could work independently, and half of them could do MACs, not just additions). These days it is nearly trivial considering all the advances in parallel processing, if one can choose the hardware, which obviously isn't the case here; the scope has what it has.

Rumor has it that the SDS2000X uses an Analog Devices 400MHz Blackfin DSP. This is a capable DSP for sure, but it has to do a lot of other things as well. I cannot disclose any internals, so the tiny hint that resources are limited with this architecture shall suffice.

I have already mentioned the newer SDS1000X-E series, which has the processing for Average and Eres implemented in hardware, so there is almost no slowdown – but there still is some, even though the max. memory depth is limited to 1.4Mpts. This platform is based on the Xilinx Zynq SoC, which is a very powerful architecture and can handle a huge memory. Anyway, the base philosophy has been kept the same – I have not thoroughly checked yet, but would be very surprised if offset were handled any differently than in the SDS2k.

Even though it might seem trivial when looked at in isolation, we should not forget that this is not the only task for this DSO. Anyway, as per my previous hint, we won't get any additional bells and whistles for the SDS2000(X). Even if we could, I'd certainly have different priorities, e.g. long memory for Average and Eres – because, other than some offset inaccuracy at the highest sensitivities, we run into serious (aliasing) trouble pretty easily with just 28kpts total for those acquisition modes.

It is 700 x 400 screen pixels btw.

Without knowing the internal architecture, we could speculate all day about how things could be done differently. No one at Siglent will seriously consider a radical change in the system architecture just to work around a minor issue that could easily be addressed by fitting a higher-resolution DAC for the offset control.

I'm away from my lab until next weekend, so I cannot check right now how the newer SDS1000X-E handles vertical position changes at its highest sensitivities. There we have even ±2V offset, and one LSB in the 500µV/div range is just 20µV. We’d need a DAC capable of 200k steps, some 18 bits…
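The DAC estimate works out as follows. The 25 ADC codes per division used to get the 20µV LSB is an assumption (it matches the 200-code display range out of 256 mentioned earlier in this thread, spread over 8 vertical divisions); the other figures are from the post.

```python
import math

CODES_PER_DIV = 25          # assumed: 200 displayed codes over 8 divisions
VOLTS_PER_DIV_UV = 500      # most sensitive range, in µV/div
OFFSET_SPAN_UV = 4_000_000  # ±2 V offset range, in µV

lsb_uv = VOLTS_PER_DIV_UV // CODES_PER_DIV  # µV per ADC code
dac_steps = OFFSET_SPAN_UV // lsb_uv        # offset values needed at 1 LSB spacing
dac_bits = math.ceil(math.log2(dac_steps))  # smallest DAC width that covers them
```

This gives a 20µV LSB and 200,000 steps, and since 2^17 = 131,072 falls short while 2^18 = 262,144 suffices, an 18-bit DAC.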

Maybe rf-loop is reading this and could have a look?

And anyway, the scope is doing that offset calculation stuff already in stop mode, just with the slightly "wrong" offset …

I have stated it already - Stop mode is completely different. It only shows a single acquisition and no new data to be combined with the existing ones and no building up of millions of data points for a single video frame every 40ms. Consequently, there is also no intensity grading in Stop mode. All in all, it’s not only very different but also a lot easier.


Yes, just like you I found the obvious answer while taking a walk. I’m convinced now that the GND coupling is simulated by shutting off the VGA output, not the ADC. This could even be a regular function of the VGA. In any case it is done after the input buffer and the internal offset voltage doesn’t come into play here anymore.

The difference between no input signal and GND coupling is almost always there, and quite obviously the scope tries to apply some offset correction to the GND coupling as well, as some have observed the real input providing a better zero reading than the GND coupled trace at times.

All in all, I haven’t spent much time in the past clearing such phenomena up, simply because it never mattered for my practical work and I have never used GND coupling anyway – why should I? In my book, this is just a weird relic from old analog scopes. There we needed it for quick trace nulling, but on a modern DSO we have auto-cal for that. I know this is not the point of this discussion, but it explains why I never bothered to find out exactly what the scope does in GND coupling mode. Yet I’m absolutely sure it does not get any real input signal anymore, nor the internally generated DC offset.

What we can see in stop mode is some granular noise from the ADC, independent of the vertical gain setting, because the gain of the VGA doesn’t have any impact when it is in “output shutdown” mode.

-

I understood that it is different, and was trying to emphasize that in that situation it could show the signal with the corrected offset, down to that 1 LSB ~ 2 pixels ~ 2 steps of vertical offset, with no extra effort. But it does not. I also described there earlier what the practical differences between the current way and the corrected way would be.


But probably the most consistent way would be to simply have the little bit of extra code to handle offset stepping larger than 1 LSB already in the control UI, i.e. let the offset step by the same amount the circuitry can actually do. Maybe some day, maybe not. This "issue" certainly won't stop me from using the scope. (I just have to write a note to remind me of it. And of the hysteresis.)
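What such a UI change could look like, as a hypothetical sketch: the knob moves the offset in whole steps of what the hardware can actually produce. `HW_STEP_UV` is an illustrative value (roughly the ~3 LSB jumps of 80µV discussed above), and a real offset DAC with calibration corrections may not have perfectly uniform steps.

```python
HW_STEP_UV = 240  # hypothetical smallest offset change the circuitry makes, in µV


def snap_offset(requested_uv: int, hw_step_uv: int = HW_STEP_UV) -> int:
    """Snap an offset to the nearest value the hardware can produce."""
    return round(requested_uv / hw_step_uv) * hw_step_uv


def step_offset(current_uv: int, clicks: int, hw_step_uv: int = HW_STEP_UV) -> int:
    """Move the offset by whole hardware steps, one step per knob click."""
    return snap_offset(current_uv, hw_step_uv) + clicks * hw_step_uv
```

For example, a stored offset of -1240µV is first snapped to -1200µV, and one click up then lands on -960µV, so the displayed number always matches a level the circuitry can really produce.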

-