-

I was reading some of Ganssle's rants:

http://www.ganssle.com/rants/timetomoveonfromc.htm

So we all know the situation around ST, for example, but apparently they are not alone, and the whole HAL and Cube faff is more or less the result of truckloads of peripherals. When we look at MCU silicon today, we find far out in the corner a little dot: that's the CPU. Everything else is memory and peripherals. We all know this.

So what will the embedded world look like 5-10 years from now? Will there, for example, be 2-dollar MCUs containing 10 very fast CPUs and only rudimentary interface logic and SW-based peripherals? Wouldn't that be nice?

All the HAL/CMSIS crap is gone and you can decide for yourself what kind of peripherals you need and how many.

Will this MCU be running Ada, since the downsides of C are becoming more and more evident?

Your take on this? -

That article is from 2010, and C++ has evolved since then. Will we see C++11 on MCUs? Probably. Ada not so much, but maybe Erlang, Haskell, F#, Scala, or any of the many other functional languages that are coming out of the woodwork.

But C++ has been a concurrent and (impure) functional language since C++11.

C++14 supersedes C++11, and C++17 is in the works.

Of course a lot of programmers are stuck on C and the original C++, but C++11 and even C++14 should be on your list of things to learn soon if you are looking for a job.

-

C++ was supposed to have taken over from C by 2010. It didn't happen.

Depending on what you mean by "take over": by my definition (50%+ of the market), it will not happen for at least another 10 years.

Why? Lots of small devices will be made with a Cortex-M0, under $1 today, with 64K flash. Great with C.

C++ excels over C at "programming in the large", but while more and more embedded projects will get larger and larger, each piece will be small: distributed computing, the Internet of Things model. -

Ada!? My wife asked why I burst out laughing. Unfortunately she doesn't get humor.

-

Ada!? My wife asked why I burst out laughing. Unfortunately she doesn't get humor.

It was good for its day :-)

I worked for Intermetrics for a couple of years after they bought Whitesmiths, and it's amazing: the "old guard" was mostly gone by then, but this was the company that wrote the Space Shuttle software. They built their own optimizing compilers. Talk about quality software: failure is not an option. So many stories. -

That article is from 2010, C++ has evolved since then, would we see C++11 on MCUs? probably, ADA not so much but maybe Erlang, Haskell, F#, Scala, or a ton of other functional languages that are coming out of the woods.

Just what embedded programming needs. GC, asynchronous message passing and lazy evaluation. An unholy trinity of unpredictable memory use and program flow, just need to add some lock free programming to really pile on those impossible to pinpoint bugs. -

Going by the 2014 embedded market study, C is still sitting at 60%, C++ has dropped to 19%, and good old assembly language is still ahead of everything else.

-

The OP is talking about the hypothetical future of multicore MCUs, which happens to be the direction hardware is currently going, since we are hitting the limit on how long it takes information to travel across the silicon. The solution is either going 3D, which helps only a little, or adding more cores.

So in 5-10 years from now how will embedded world look like? Will there for example be 2 dollar MCU's containing

10 very fast CPU's and only rudimentary interface logic and SW based peripherals? Wouldn't that be nice?

All the HAL/CMSIS crap is gone and you can decide your self what kind of peripherals you need and how many.

Current mobile chips already feature 8 cores or more. Will embedded MCUs follow that trend? that's the question at hand.

Myself I don't think that is going to happen in the next decade since 5-10 years is really not that big of a span of time to affect the MCU industry, but we are already seeing the migration to lower voltage cores and once they hit 200mV the only way to make more efficient and low power processors would be to add more cores.

Not sure what the physical limits are but 5GHz at 200mV core voltage rings a bell, so it's going to take more than 10 years in my opinion for this paradigm shift to affect MCUs.

The problem then will be that the hardware surpasses what programmers can exploit using C or similar sequentially oriented programming languages. Maybe you can use 10 cores and keep them busy doing 10 separate tasks, but what happens with 20, 50, 100, or 1000 cores, all with shared memory and resources? That's when functional and concurrent languages will come into play.

Again, I don't know if that will happen at the embedded level in the next 10 years, that sounds too aggressive of a timeline, but it will happen at the desktop and mobile level and it's going to be hard to make use of all that computer power with legacy sequential oriented languages.

Sooner or later, however, it will be the same paradigm shift for MCUs.

Fortunately a lot of people are dealing with HDL and are no strangers to concurrency versus sequential thinking. After all we are born as concurrent beings and we have to unlearn our instincts in order to learn sequential languages.

But whatever the future brings we will adapt.

-

Quote

author=miguelvp link=topic=56834.msg777916#msg777916 date=1444879221]

Current mobile chips already feature 8 cores or more. Will embedded MCUs follow that trend? that's the question at hand.

It would be strange if they did not.

Quote

Myself I don't think that is going to happen in the next decade since 5-10 years is really not that big of a span of time to affect the MCU industry, but we are already seeing the migration to lower voltage cores and once they hit 200mV the only way to make more efficient and low power processors would be to add more cores.

Not sure what the physical limits are but 5GHz at 200mV core voltage rings a bell, so it's going to take more than 10 years in my opinion for this paradigm shift to affect MCUs.

Well, diamond and graphene substrates are not too far away; progress has been made.

Quote

The problem then will be that the hardware would surpass what the programmers can make use of the device using C or similar sequential oriented programming languages, maybe you can use 10 cores and keep them busy doing 10 separate tasks but what happens with 20, 50, 100, 1000 cores all with shared memory and resources? That's when functional and concurrent languages will come to play.

Tiny local memories and one large main memory. I mentioned Ada; now I'm going to mention Forth. Yes, you can laugh now!

http://www.greenarraychips.com/

144 CPUs is an interesting case.

There is also the Samsung Exynos 5422: Cortex-A15 (2 GHz) and Cortex-A7 octa-core CPUs. -

Quote

Will there for example be 2 dollar MCU's containing 10 very fast CPU's and only rudimentary interface logic and SW based peripherals? Wouldn't that be nice?

It's been tried, mostly not very successfully. Bitslice microcoded machines, Scenix/Ubicom chips, XMOS, Parallax Propeller, Atmel FPSLIC, Cypress PSoC, and probably others. And no, it wouldn't be particularly nice; the hardware to implement a UART or Ethernet controller is far simpler (and less power hungry) than the processor that can implement it in SW. And for every programmer or business that cries over the inefficiency of a vendor library, there are probably 10 that are happy enough to throw more clocks and memory at their product (because THEY scale easily enough, so far), while peripheral complexity keeps going up. And that's for the simple peripherals; by the time you get to gigabit Ethernet, WiFi, USB, Bluetooth, or TCP/IP, there are VERY FEW companies or people who are prepared to implement things from scratch, compared to using a known-quantity library that everybody uses. -
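To make the UART comparison above a bit more concrete, here is a minimal sketch in C of what a software transmitter has to do for each byte of a standard 8N1 frame. The names `soft_uart_pin_fn`, `soft_uart_send_byte` and `soft_uart_trace` are hypothetical, made up for this illustration; on real hardware the pin hook would drive a GPIO and then wait exactly one bit period, which is left out here.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical pin-write hook. On a target this would set a GPIO and
 * then busy-wait (or sleep on a timer) for one bit period. */
typedef void (*soft_uart_pin_fn)(void *ctx, int level);

/* Send one 8N1 frame: start bit (0), 8 data bits LSB first, stop bit (1). */
void soft_uart_send_byte(uint8_t byte, soft_uart_pin_fn set_pin, void *ctx)
{
    assert(set_pin != NULL);
    set_pin(ctx, 0);                      /* start bit */
    for (int i = 0; i < 8; i++) {
        set_pin(ctx, (byte >> i) & 1);    /* data bits, LSB first */
    }
    set_pin(ctx, 1);                      /* stop bit */
}

/* Stand-in for the pin: records the line levels instead of driving hardware. */
struct soft_uart_trace {
    int levels[16];
    size_t count;
};

void soft_uart_trace_pin(void *ctx, int level)
{
    struct soft_uart_trace *t = ctx;
    assert(t->count < sizeof t->levels / sizeof t->levels[0]);
    t->levels[t->count++] = level;
}
```

Ten timed pin writes per byte is trivial logic, but on a real part the core is tied up (or interrupted) for every one of them, while a hardware UART does the same job with a shift register and a baud-rate divider.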

Current mobile chips already feature 8 cores or more. Will embedded MCUs follow that trend? that's the question at hand.

Why are the "current mobile chips [that] already feature 8 cores or more" not called embedded MCUs? I would be surprised if they are not.

So in 5-10 years from now how will embedded world look like? Will there for example be 2 dollar MCU's containing 10 very fast CPU's and only rudimentary interface logic and SW based peripherals? Wouldn't that be nice?

Why would you want parallel computing with minimal IOs? The IOs need to be scheduled anyway, and parallel computing only shows its superiority in time-consuming, complex algorithms. How many applications call for this? Can't existing FPGAs or the bigger multicore MCUs deal with it? IMHO probably only very specific, serious and expensive applications need it, a margin that's not worth chasing. The only advantage I can see is miniaturization, but in such specific tasks miniaturization is not a critical motive; most of them are big, big machines with an abundance of IOs...

After all we are born as concurrent beings...

We are both concurrent and sequential beings: the task of cognition is concurrent, the task of intelligence or decision making is sequential...

ps: i should get a life again...

-

Software peripherals? You have the Parallax Propeller with 8 processors, and you have XMOS chips with 1 or 2 cores and up to 8 threads per core, both with software peripherals, today. The cheapest XMOS chip costs about $2, with one core and 4 threads. There is also the massive GreenArrays 144-core Forth uC, also with software peripherals.

-

why the "Current mobile chips already feature 8 cores or more" is not called embedded MCU? i will be surprised if its not.

Cortex profile abbreviations:

Cortex-M is for microcontrollers.

Cortex-R is for real time.

Cortex-A is for application (mobile chips are in this category).

I'm not the one deciding to not call them MCUs, ARM made that decision

But I digress, mobile chips are more of a systems on chip and they come with integrated GPUs, USB, HDMI, PCIe, etc, etc, etc. So it's not just the ARM processor and it's not an MCU.

Edit: plus mobile chips do run an OS.

-

Predict the future? We cannot even predict the weather for tomorrow!

I would like to see more versatile MCU with programmable (FPGA style) port logic - hybrids. This would allow designs to become even smaller and allow us to put IoT stuff in every nook and cranny. But I'm sure it has other uses as well.

And if you go there - do you also need some integrated language to describe it? At first probably not.

The other thing is parallelism. I don't see 1000-core MCUs being manufactured any day soon, but multi-core parts are already available. I do think a level of abstraction over that would be very beneficial. That way those cores can be utilized effectively and easily, with less risk of thread-safety issues.

IDEs can use some love too. I still cannot use unit tests or have source control in Atmel Studio, for instance. These people are from the Dark Ages. I think the more software is running on MCUs, the more the EEs have to become software developers. Embedded has its own share of specific requirements for sure, but good software development practices (a test and release process) are mandatory. So with the next generation of MCUs should also come the next generation of IDEs. Perhaps they should each stop trying to develop their own and combine their efforts in a plug-in based model like Eclipse, for instance? Then all the effort can go into building the actual tool and not the shell, the printing, and so on.

-

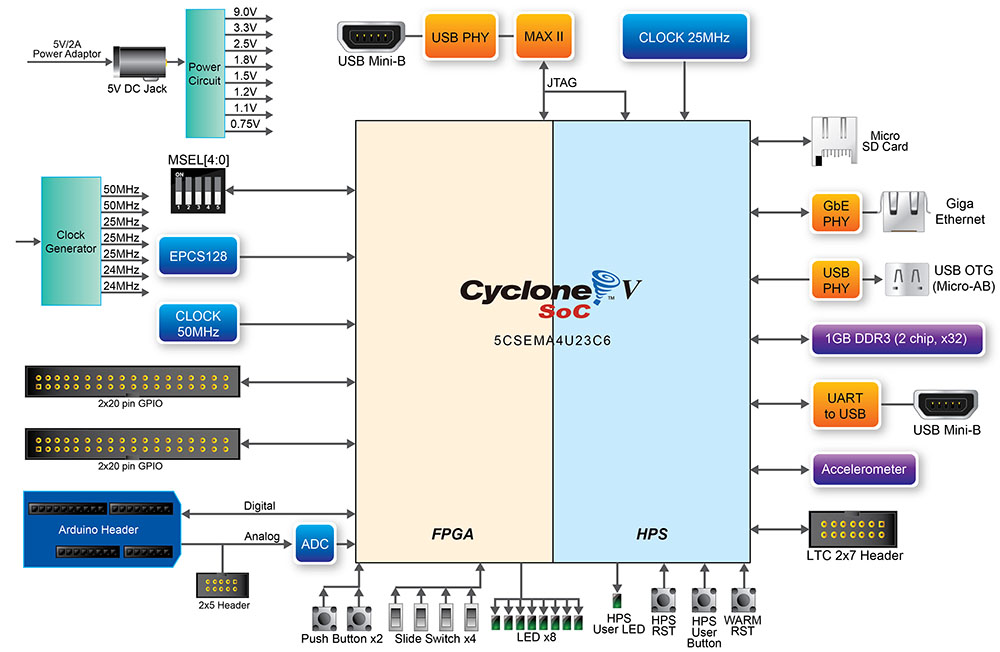

FPGAs with hard processor cores are already around.

For example this one:

http://www.terasic.com.tw/cgi-bin/page/archive.pl?Language=English&CategoryNo=205&No=941

which I did buy but haven't had a chance to play with yet, other than running some demo software. It has a 925MHz dual-core ARM Cortex-A9 hard processor on it.

-

Using that for most IoT-ish projects is like buying a super computer to run a word processor program...

-

C and C++ are the cancer of (embedded) computing. If C/C++ adopted Ada's strong type checking and runtime range checking, they would be quite usable languages for production work. And macros with side effects quite often create bugs that are hard to spot.
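For anyone who hasn't been bitten by the macro problem mentioned above, a minimal sketch in C. `MAX_MACRO`, `max_int` and the two helper functions are hypothetical names for illustration only. The macro evaluates its argument twice, so an argument with a side effect such as `i++` runs twice; the inline function evaluates it once.

```c
#include <assert.h>

/* Classic function-like macro: each argument may be evaluated twice. */
#define MAX_MACRO(a, b) ((a) > (b) ? (a) : (b))

/* Inline function: each argument is evaluated exactly once. */
static inline int max_int(int a, int b)
{
    return a > b ? a : b;
}

/* Returns how many times i was incremented by a single macro "call". */
int macro_increments(void)
{
    int i = 5;
    int j = 3;
    /* Expands to ((i++) > (j) ? (i++) : (j)): i++ runs in the test
     * and again in the selected branch. */
    int m = MAX_MACRO(i++, j);
    assert(m == 6);      /* not 5, as a reader might expect */
    return i - 5;
}

/* Same call through the inline function, for comparison. */
int function_increments(void)
{
    int i = 5;
    int j = 3;
    int m = max_int(i++, j);
    assert(m == 5);
    return i - 5;
}
```

The macro version is still well-defined C (there is a sequence point after the condition of `?:`), which is exactly why the compiler stays silent about it.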

Although Linux is an example of a large-scale C project, even Linux would benefit from improved type checking, range checking etc.

Ada-like strict type checking won't cost a thing, as it is performed at compilation time. The runtime checks can be enabled or disabled as needed per module, so the impact of the runtime checks is well controlled. Runtime exception handling is not necessary: to keep things simple and avoid code bloat, failures can be handled by a general trap function which is invoked when something nasty happens, prints the error location and restarts the device.

Yes, I have been using C and C++ for the past 20+ years and will use them for another 20 years for fun and profit. The more I use C/C++, the more I see their weaknesses. I have seen too much badly written C/C++ code, and too many bugs resulting from these poorly designed languages, to appreciate them very much. Unfortunately the reality is that decent C compilers are available for free for most microcontrollers on the market, so the C/C++ nightmare will not go away anytime soon. -
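The per-module range checks and the general trap function described in this post can be approximated in plain C. What follows is only a sketch under assumptions: `CHECK_RANGE`, `RANGE_CHECKS_OFF`, `range_trap` and `set_duty_percent` are hypothetical names, the trap merely records the location instead of printing it and resetting the device, and clamping the value after a trap is a choice made here (Ada itself would raise `Constraint_Error`).

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical trap record. A real handler would print the location
 * and restart the device; this sketch only remembers it. */
struct trap_info {
    const char *file;
    int line;
    int count;
};

struct trap_info last_trap;

void range_trap(const char *file, int line)
{
    last_trap.file = file;
    last_trap.line = line;
    last_trap.count++;
}

/* Returns v unchanged if lo <= v <= hi; otherwise traps and clamps. */
int32_t checked_range(int32_t v, int32_t lo, int32_t hi,
                      const char *file, int line)
{
    assert(lo <= hi);
    if (v < lo || v > hi) {
        range_trap(file, line);
        return v < lo ? lo : hi;
    }
    return v;
}

/* Define RANGE_CHECKS_OFF before this point in a module to compile
 * the checks out for that module only. */
#ifndef RANGE_CHECKS_OFF
#define CHECK_RANGE(v, lo, hi) \
    checked_range((v), (lo), (hi), __FILE__, __LINE__)
#else
#define CHECK_RANGE(v, lo, hi) (v)
#endif

/* Example: a duty cycle constrained to 0..100, in the spirit of an
 * Ada subtype with a range constraint. */
int32_t set_duty_percent(int32_t requested)
{
    return CHECK_RANGE(requested, 0, 100);
}
```

Unlike Ada, nothing forces callers to go through `CHECK_RANGE`, so this only catches violations at the places where someone remembered to write the check.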

The OP is talking about the hypothetical future of multicore MCUs, which happens to be the direction hardware is currently going, since we are hitting the limit on how long it takes information to travel across the silicon. The solution is either going 3D, which helps only a little, or adding more cores.

I don't think so. In fact, the market is going in the opposite direction. Since it is getting cheaper and cheaper to put a core into your ASIC, we are basically outsourcing the MCU-intensive tasks to the peripherals. The radio chip has an on-chip MCU. The USB controller has an on-chip MCU. The I2C extender is an MCU. The LCD controller has a built-in controller, and it connects to the MCU with SPI instead of a parallel interface. They already make digital power management chips with onboard logic.

So in 5-10 years from now how will embedded world look like? Will there for example be 2 dollar MCU's containing

10 very fast CPU's and only rudimentary interface logic and SW based peripherals? Wouldn't that be nice?

All the HAL/CMSIS crap is gone and you can decide your self what kind of peripherals you need and how many.

Current mobile chips already feature 8 cores or more. Will embedded MCUs follow that trend? that's the question at hand.

Myself I don't think that is going to happen in the next decade since 5-10 years is really not that big of a span of time to affect the MCU industry, but we are already seeing the migration to lower voltage cores and once they hit 200mV the only way to make more efficient and low power processors would be to add more cores.

Not sure what the physical limits are but 5GHz at 200mV core voltage rings a bell, so it's going to take more than 10 years in my opinion for this paradigm shift to affect MCUs.

The problem then will be that the hardware would surpass what the programmers can make use of the device using C or similar sequential oriented programming languages, maybe you can use 10 cores and keep them busy doing 10 separate tasks but what happens with 20, 50, 100, 1000 cores all with shared memory and resources? That's when functional and concurrent languages will come to play.

Again, I don't know if that will happen at the embedded level in the next 10 years, that sounds too aggressive of a timeline, but it will happen at the desktop and mobile level and it's going to be hard to make use of all that computer power with legacy sequential oriented languages.

Sooner or later, however, it will be the same paradigm shift for MCUs.

Fortunately a lot of people are dealing with HDL and are no strangers to concurrency versus sequential thinking. After all we are born as concurrent beings and we have to unlearn our instincts in order to learn sequential languages.

But whatever the future brings we will adapt.

The way I see it, it will be small microcontrollers, with the tasks distributed into smaller chunks which are easy to write and easy to handle. Why would you need a big FPGA to do all this stuff? Interfaces are standard, and MCUs come with a dozen SPI, I2C and USART ports. -

Since it is cheaper and cheaper to put a core into your asic, we are basically outsourcing the MCU intensive tasks to the peripherals. The radio chip has an on-chip MCU. The USB controller has an on-chip MCU. The I2C extender is an MCU. The LCD controller has a built in controller, and it connects to the MCU with SPI instead of a parallel interface. They already make digital power management chips, with onboard logic.

The way I see it, it will be small microcontrollers distributing the tasks into smaller chunks which are easy to write, easy to handle. Why would you need a big FPGA to do all these stuff? Interfaces are standard, and MCUs come with dozen SPI, I2C, USART.

Yes. As I'm sure you are aware, the main reasons for using an FPGA are tight latency/time constraints or in-chip throughput and/or tight coupling with processors, e.g. Zynq.

Message-passing (in all its myriad incarnations) will become the dominant communications paradigm because it is robust and scalable from high-performance computing, through cloud computing, through multi-core programming, to the kind of heterogeneous embedded systems you outline.

C is sufficient for such message passing. C++ is evolving to look like a badly specified[1] and poorly implemented C# wannabe.
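As a rough illustration of the claim that C is sufficient for message passing, here is a minimal fixed-size mailbox sketch. Everything in it (`struct message`, `struct mailbox`, `MAILBOX_SLOTS`, the function names) is hypothetical, and it assumes a single execution context: a version shared between cores or with an ISR would need atomics or interrupt masking, which this sketch leaves out.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define MAILBOX_SLOTS 4u   /* hypothetical queue depth */

struct message {
    uint8_t type;
    uint8_t payload[7];
};

struct mailbox {
    struct message slots[MAILBOX_SLOTS];
    size_t head;    /* index of the oldest queued message */
    size_t count;   /* number of messages currently queued */
};

void mailbox_init(struct mailbox *mb)
{
    assert(mb != NULL);
    mb->head = 0;
    mb->count = 0;
}

/* Copies msg into the queue; returns false if the mailbox is full. */
bool mailbox_send(struct mailbox *mb, const struct message *msg)
{
    assert(mb != NULL && msg != NULL);
    if (mb->count == MAILBOX_SLOTS) {
        return false;
    }
    size_t tail = (mb->head + mb->count) % MAILBOX_SLOTS;
    mb->slots[tail] = *msg;
    mb->count++;
    return true;
}

/* Copies the oldest message into out; returns false if the mailbox is empty. */
bool mailbox_receive(struct mailbox *mb, struct message *out)
{
    assert(mb != NULL && out != NULL);
    if (mb->count == 0) {
        return false;
    }
    *out = mb->slots[mb->head];
    mb->head = (mb->head + 1) % MAILBOX_SLOTS;
    mb->count--;
    return true;
}
```

Messages are copied by value, so sender and receiver never share a pointer into each other's data, which is most of what makes the model robust.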

How long was it before a complete C++98 compiler emerged? ISTR an email trumpeting it in about 2005, but maybe it was for a complete C99 compiler.

[1] see http://yosefk.com/c++fqa/ -

C and C++ are the cancer of the (embedded) computing. If the C / C++ would adopt Ada's strong type checking and runtime range checking, C/C++ would be quite usable languages for production work.

Young kids always think they have more than enough... I mean CPU resources. BTW, there are a few modern languages that fit your criteria; go get them. Hint: BASIC, Java, Python, etc. I'm tired of the C/C++ versus other languages fight BS. Don't get me wrong, I don't treat C/C++ as holy, but there are a lot more important things than that, really...

-

Ada-like strict type checking won't cost a thing as it will performed at compilation time. The runtime-checks can be enabled or disabled as needed per module so the impact of the runtime-checks are well controlled. Runtime exception handling is not necessary to keep things simple and avoid code-bloat and it can be handled by a general trap-function which will be invoked when something nasty happens, print the error location and restart the device.

Oh! i need that one, right now! -

Quote

C and C++ are the cancer of the (embedded) computing. If the C / C++ would adopt Ada's strong type checking and runtime range checking, C/C++ would be quite usable languages for production work. And the macros with side-effects create quite often bugs that are hard to spot.

Although Linux is an example of a large-scale C project, even Linux would benefit for improved type checking, range checking etc.

Ada-like strict type checking won't cost a thing as it will performed at compilation time. The runtime-checks can be enabled or disabled as needs per module so the impact of the runtime-checks are well controlled. Runtime exception handling is not necessary to keep things simple and avoid code-bloat and it can be handled by a general trap-function which will be invoked when something nasty happens, print the error location and restart the device.

Did you hear about MISRA? That seems to be the "(automotive) industry" answer to your exact requirement... Instead of building a MISRA-C compiler, they go for a check after you write the code... Sorry, but that is an abomination.

-

Are you talking about static code analysis?

-

I'm wondering why no one has mentioned Rust. I haven't played with Rust yet, but it looks really promising in terms of features (automatic memory management without GC, type safety, a strong type system, etc.).

-

I see that TI has a chip (AM437x) with a single GHz ARM A9 core and four "PRU-ICSS" (Programmable Real-time Unit and Industrial Communications Subsystem), and NXP has asymmetric multicore chips (CM4+CM0), so we'll have to keep an eye on how those do vs more conventional multi-core chips. My experience suggests that SW engineering teams are lazy and would rather not keep track of two different kinds of code.