-

Quote

Let me know if anyone here actually has an understanding of thermodynamics

Most people here understand that you can heat a room with the waste heat from various bits of powered equipment. So, there is probably not much that you can contribute. -

Still haven't heard a single technical question. Let me know if anyone here actually has an understanding of thermodynamics and interest in engaging, more than happy to educate 'technical' people.

Typical home computers use only 100-150W, so why would you bother? Do you really think people will buy 1kW computers and sell off cycles just to make this work?

How do you keep the HDDs and SSDs from cooking?

If desiccant refrigeration actually works, why don't people use it now, with cheaper heat sources?

-

Sure, Jim. You may have us confused with Nerdalize?

The first prototype runs much hotter than a typical computer, hot enough today to boil water off the GPUs. With a few more degrees of heat we can also supply desiccant driven air conditioning and absorptive refrigeration. We're also using a phase change thermal storage system to store enough thermal energy from the 1st unit in 15 gallons of liquid to supply the domestic hot water and space heat for an 1100sf house.

As I mentioned, we're partnered with the Fraunhofer Institute, who are researching high temperature computing today. They are currently running ASICs at 300C - we can make process steam with 300C.

Next? -

David,

"Typical home computers use only 100-150W, so why would you bother?" The 1st unit runs at 1600W, 150W really wouldn't do much.

"Do you really think people will buy 1kW computers and sell off cycles just to make this work?" They already do. Check out Cloud & Heat https://www.cloudandheat.com , Qarnot Computing http://www.qarnot-computing.com/ and Nerdalize http://www.nerdalize.com/.

"How do you keep the HDDs and SSDs from cooking?" We run 2 separate loops on the 1st prototype and have a stratified cooling layout that keeps the memory alive on V2.

"If desiccant refrigeration actually works, why don't people use it now, with cheaper heat sources?" They do http://www.nrel.gov/docs/fy11osti/49722.pdf. If you could be paid to make the heat through distributed computation, why would you use a cheaper heat source?

The trick is to run the compute hard and hot. -

Quote

The trick is to run the compute hard and hot.

Remarkably deep technical insight! Thanks!

Maybe if you offered some free keychains or posters left over from the KS, we would "warm up" to the idea.

PS: This may come as a surprise, but I actually heat my kitchen by operating a refrigerator in it! -

"Remarkably deep technical insight! Thanks!"

The Department of Energy sure thinks it is, Jim.

Considering the entire industry is focused on running computers as cold as it can keep them, it is a pretty big departure from the norm. -

Hi,

As you've discovered, the audience here is generally technically astute, but also deeply unforgiving when it comes to even a whiff of marketing over substance. On a bad day I'm as guilty of this as anyone.

Your idea to use the waste heat from a computer for something useful, in preference to simply generating heat with a resistor, is fine. I don't think anyone's questioning that principle. For an audience with a strictly electronics (but not thermodynamics) background, it might do you no harm to explain why running a heat source at a higher temperature can be a good thing when it comes to making use of that heat elsewhere.

Given that you obviously understand this already, you shot yourself in the foot when you mentioned Solar Roadways in your campaign. That project was the poster child for marketing over feasibility. Guilt by association, I'm afraid.

If you want to win over a technical audience, you need to explain, quantitatively, what your product actually does and how it might be useful. A simple explanation about its power consumption, MIPS per Watt, operating temperature, and the method for ultimately extracting the heat produced and making use of it, would go a long way. Above all, you just need to demonstrate that you have a strong understanding of the physics, and that you're not trying to do something for which the numbers simply don't add up.

A purposely inefficient computer would be a bad idea... no better than a laptop plus a bar heater. One which is just as good in terms of MIPS per Watt as any other server, but capable of running at a high temperature and providing a means to make good use of the heat that would otherwise be wasted, is potentially quite a good one.

I still think you should use a standard desktop CPU and make coffee with it, though! -

Considering the entire industry is focused on running computers as cold as it can keep them, it is a pretty big departure from the norm.

Not really; try a search for "high ambient temperature servers".

http://www.geek.com/chips/googles-most-efficient-data-center-runs-at-95-degrees-1478473/

-

Given that you obviously understand this already, you shot yourself in the foot when you mentioned Solar Roadways in your campaign. That project was the poster child for marketing over feasibility. Guilt by association, I'm afraid.

Andy is right. Solar Roadways are an industry joke. The mother lode of all unfeasible projects.

Being associated with them is technical industry poison.

Add in the move from a failed Kickstarter to Indiegogo, and you are begging to have your project not taken seriously, I'm afraid.

I know that's not fair, but that's the way it is.

Thankfully engineers are easy to convince, just show them the data. Talk is cheap. -

Thankfully engineers are easy to convince, just show them the data. Talk is cheap.

-

Is there any data on how this affects reliability, MTBF, etc. of the individual parts?

-

Is there any data on how this affects reliability, MTBF, etc. of the individual parts?

Running silicon based semiconductors hot enough to boil water is rarely beneficial for reliability

Surely a pretty high bandwidth internet connection is going to be required to be able to effectively sell compute cycles? Is the cost of this connection taken into account in the economics? -

Most projects of this type are based on some fact, so the idea of taking something that is considered waste and turning it into something useful is valid. What most of these inventors fail to notice is that product development always tries to be as efficient as possible. 40 years ago you could bake a loaf of bread in the "computer room"; now you need a whole bunch of computers to make the room just "pretty warm". I'm certain that one of the main goals of the engineers who design new computer circuits is to minimize the production of heat.

My point is that this "free energy" won't last forever; the only one who will benefit from the "investment" will be the "inventor", who will get "free" money.

-

"Typical home computers use only 100-150W, so why would you bother?" The 1st unit runs at 1600W, 150W really wouldn't do much.

How many CPUs is that? Or, are you relying on high-power GPUs?

Quote

"Do you really think people will buy 1kW computers and sell off cycles just to make this work?" They already do. Check out Cloud & Heat https://www.cloudandheat.com , Qarnot Computing http://www.qarnot-computing.com/ and Nerdalize http://www.nerdalize.com/.

Those are in Europe - how can you make it work in the US, where we have hot summers, and slow Internet?

Quote

"How do you keep the HDDs and SSDs from cooking?" We run 2 separate loops on the 1st prototype and have a stratified cooling layout that keeps the memory alive on V2.

Sounds like handwaving... what is the temperature of the low temperature loop?

Quote

"If desiccant refrigeration actually works, why don't people use it now, with cheaper heat sources?" They do http://www.nrel.gov/docs/fy11osti/49722.pdf.

OK, so they write reports about it, but why don't they actually use it?

-

How many MIPS per Watt does your device achieve?

-

Hey, now things are getting interesting! Fortunately it is my anniversary so I'll be out of pocket for several days and will have to get back to the conversation early next week. Have a good weekend all, looking forward to the discussion.

-

Still haven't heard a single technical question.

You have not even answered my first inquiries. I am waiting for answers on these points.

Many of the new big computer centers use very efficient free cooling systems, where the cooling is done by direct ventilation, possibly with some help from water evaporation. As an example, I run a 2000-core system with a PUE of 1.04, which means that the cooling cost is only 4% of the effective computing cost, compared to the 40-60% this guy is mentioning. Similar efficient cooling is used by the Facebook data centers.

He also seems to underestimate that he will need to evacuate the heat he produces with his high temperature computer.

He also forgot to say what he does with all this heat in summer, when you want to cool your house.
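For anyone not used to PUE: the 4% figure follows directly from the definition (total facility power divided by IT equipment power). A minimal Python sketch; reading the 40-60% claim as a PUE of 1.4 to 1.6 is an assumption about how that figure was meant, and the overhead here covers all non-IT power, not cooling alone.

```python
def overhead_fraction(pue: float) -> float:
    """Non-IT power (cooling, power distribution) as a fraction of IT power."""
    # PUE = total facility power / IT equipment power, so overhead = PUE - 1.
    return pue - 1.0


# 1.04 is the figure quoted in the post; 1.4 and 1.6 are assumed readings
# of the "40-60%" cooling claim.
for pue in (1.04, 1.4, 1.6):
    print(f"PUE {pue:.2f}: overhead is {overhead_fraction(pue):.0%} of IT power")
```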

-

Is there any data on how this affects reliability, MTBF, etc. of the individual parts?

Running silicon based semiconductors hot enough to boil water is rarely beneficial for reliability

We can estimate it! (Arrhenius equation)

Quote

Those are in Europe - how can you make it work in the US, where we have hot summers...

It's from Germany, they also have decent summers

Edit:

For 60°C to 100°C I got an acceleration factor of 13.7? This would reduce a 100 000 hour MTBF (~11 years) to 7325 hours (305 days). -
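The 60°C to 100°C estimate above can be reproduced with the Arrhenius acceleration factor, AF = exp(Ea/k * (1/T_use - 1/T_stress)), with temperatures in kelvin. The post does not say which activation energy was used; the sketch below assumes Ea = 0.7 eV, which lands close to the quoted 13.7. The result is very sensitive to that choice, so treat it as an order-of-magnitude estimate only.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def arrhenius_acceleration(t_use_c, t_stress_c, ea_ev=0.7):
    """Arrhenius acceleration factor between two temperatures given in Celsius.

    ea_ev is the activation energy in eV. The default of 0.7 eV is an
    assumed value, not a figure given in the thread.
    """
    t_use_k = t_use_c + 273.15
    t_stress_k = t_stress_c + 273.15
    exponent = ea_ev / BOLTZMANN_EV_PER_K * (1.0 / t_use_k - 1.0 / t_stress_k)
    return math.exp(exponent)


af = arrhenius_acceleration(60.0, 100.0)
mtbf_hot_hours = 100_000 / af  # 100 000 h baseline MTBF, as in the post
print(f"acceleration factor: {af:.2f}")
print(f"MTBF at 100 C: {mtbf_hot_hours:.0f} hours ({mtbf_hot_hours / 24:.0f} days)")
```

Trying a different `ea_ev` shows how much the answer moves: a lower activation energy gives a much smaller penalty, a higher one a much larger penalty.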

Hi All, let's start here.

We are working on solutions to run computers significantly hotter and harder to specifically create heat with computation, we're hitting 200+ in the cooling loop today. That heat can be used for more than just running a boiler, we can power air conditioning and refrigeration as well with about 225-235 f. I know you are electronics guys so you will have to invest some google time looking for desiccant enhanced air conditioning and absorptive chillers/refrigeration - the building science guys get the implications pretty quickly. With the addition of refrigeration and air conditioning loads, thermal storage and proper sizing there is no room in our model for 'exhausting heat' - there is also a reason it's called project Exergy! If you want to talk about exhausting useful heat, track down Nerdalize or Cloud & Heat.
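Since the thread mixes Fahrenheit and Celsius, a quick conversion of the loop temperatures mentioned here may help. It assumes the 200+ cooling-loop figure is in Fahrenheit, like the 225-235 f figure next to it.

```python
def fahrenheit_to_celsius(temp_f: float) -> float:
    """Convert a temperature from degrees Fahrenheit to degrees Celsius."""
    return (temp_f - 32.0) * 5.0 / 9.0


# Loop temperatures quoted in the post (the unit of the first is assumed).
for temp_f in (200, 225, 235):
    print(f"{temp_f} F = {fahrenheit_to_celsius(temp_f):.1f} C")
```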

Since the biggest loads in our buildings currently run on heat (space and domestic hot water) or could be converted to run on heat (air conditioning and refrigeration) with well developed, existing technology, we could be getting two (or more) benefits out of the same energy that we are using to just run our heaters or computers today. (Please don't tell me about the cost of natural gas; we're obviously targeting electric heating markets, which are large and growing.) One or the other could happen for free, from an energy perspective, if we used computers to make the heat that runs our homes/businesses/economy.

Interestingly, the majority of our US economy actually runs on heat, not electricity... but that is a far more confusing topic that I'd rather talk to economists about.

A gaming rig or laptop isn't going to make enough heat to have an impact, so we aren't talking about converting current computers into a supercomputer/heater. We are building high temperature, modular 2-4kW computing appliances coupled with thermal storage that sit in the space a typical hot water heater sits today and perform local computation that datacenters are currently doing remotely near the arctic circle (JacquesBBB). Yes, there is an existing, small but growing market for this compute. The model is to keep the demand and compute local so we aren't shipping huge amounts of data to the arctic circle - which is pretty expensive, BTW. http://aceee.org/files/proceedings/2012/data/papers/0193-000409.pdf The data is also parsed across multiple machines, taking care of security, redundancy and resiliency issues as well as cutting down dramatically on the need for high speed internet - at this point. Again, this already exists in some of the distributed computing projects https://en.wikipedia.org/wiki/List_of_distributed_computing_projects as well as distributed rendering.

(JacquesBBB) - we don't want to build data centers, we want to eliminate them so I am not going to spend time jousting about datacenter performance. The equation is simple: datacenters buy energy for compute and cooling. We are using existing energy consumption (space/water heat - refrigeration/air conditioning), which is already paid for, to compute. Datacenters pay significant sums to transfer information to the arctic circle - we want to keep it local and distributed on short hops which are inherently faster. -

Is there any data on how this affects reliability, MTBF, etc. of the individual parts?

There is plenty of manufacturer data on temperature and MTBF issues, each chip manufacturer publishes their heat/performance data. When we first started the prospects seemed pretty dismal given what they publish. Some R&D time figuring out how to run hot was the key, not the published data.

In general cool computers are happy computers, and heat can dramatically reduce the lifespan of a computer if not managed correctly. It took us some time to figure out the major culprits in heat related failure; the biggest killer is electromigration. Heat and high current loads actually pick up and carry away the conductor material in chips, diminishing the conductor's capacity to transport electricity. There are several ways around this problem; the expensive solution is to move to a tungsten based conductor on an SoI chip. We're working with the Fraunhofer Institute to attract some research funding to look into the macro economics of high temperature computing with SoI. They already have a series of SoI ASICs that run well and for typical lifespans at 300C - we can make high temperature steam with 300C.

The less expensive fix extends the life of the chip and is what we have been doing on the first prototypes: modulating the clock speeds and voltage based on temperature works like a charm. Lower voltage at higher temperatures reduces electromigration issues and allows things to live longer. More importantly, we're only designing these things with a 2 year lifespan in mind. Given the reduced electricity cost from distributing the compute to places with an existing heat load, the economics look pretty good. Two years is a little shorter than the average server replacement schedule but it is not far off. We're hoping to run them hard enough that, after their service life, the wheels are falling off and they'll have little resale value. If you stack up the ancillary benefits you can probably point to ROI on the systems that is well under two years. We'll rebuild the unit and cross ship it to the facility.
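The trade-off between temperature and electrical stress described above is commonly modelled with Black's equation for electromigration lifetime, MTTF = A * J^(-n) * exp(Ea / (k*T)), where J is current density. The sketch below is illustrative only: the exponent n = 2, the activation energy Ea = 0.7 eV and the example temperatures are assumptions, not measurements from the prototype, and it treats lower voltage and clock simply as a lower current density.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def relative_mttf(current_ratio, temp_c, ref_temp_c, n=2.0, ea_ev=0.7):
    """Electromigration MTTF relative to a reference operating point.

    Uses Black's equation, MTTF = A * J**-n * exp(Ea / (k * T)).
    current_ratio is J / J_ref; n and ea_ev are assumed, illustrative values.
    """
    temp_k = temp_c + 273.15
    ref_temp_k = ref_temp_c + 273.15
    thermal_term = math.exp(
        ea_ev / BOLTZMANN_EV_PER_K * (1.0 / temp_k - 1.0 / ref_temp_k)
    )
    return current_ratio ** -n * thermal_term


def current_ratio_for_equal_life(temp_c, ref_temp_c, n=2.0, ea_ev=0.7):
    """Current density ratio J / J_ref that keeps MTTF unchanged at temp_c."""
    return relative_mttf(1.0, temp_c, ref_temp_c, n, ea_ev) ** (1.0 / n)


# Hypothetical example: a part characterised at 60 C that is run at 95 C.
print(f"lifetime at same current: {relative_mttf(1.0, 95.0, 60.0):.3f}x")
print(f"current ratio for equal life: {current_ratio_for_equal_life(95.0, 60.0):.3f}")
```

The second number is the fraction of the original current density that would give the same modelled lifetime at the higher temperature, which is the kind of derating a temperature-based voltage and clock governor is trading against.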

MTBF in high temp computing is a design issue that we, and others, are working on. If there is a market, it will be overcome.

-

Given that you obviously understand this already, you shot yourself in the foot when you mentioned Solar Roadways in your campaign. That project was the poster child for marketing over feasibility. Guilt by association, I'm afraid.

Andy is right. Solar Roadways are an industry joke. The mother lode of all unfeasible projects.

Being associated with them is technical industry poison.

Add in the move from a failed Kickstarter to Indiegogo, and you are begging to have your project not taken seriously, I'm afraid.

I know that's not fair, but that's the way it is.

Thankfully engineers are easy to convince, just show them the data. Talk is cheap.

Only time will tell if Andy is right.

Solar Roadways is technically feasible and I'm more than happy to joust on that one as well. They are not, however, economically feasible. That doesn't mean they will be in the future or ever; that depends on factors that are related to but not dependent on economic feasibility. Faraday was also considered a loon in his day for many of the same reasons - how many electric motors power our life?

The planet?

Time will tell, not Andy. (nothing personal Andy!)

-

Is there any data on how this affects reliability, MTBF, etc. of the individual parts?

Running silicon based semiconductors hot enough to boil water is rarely beneficial for reliability

Surely a pretty high bandwidth internet connection is going to be required to be able to effectively sell compute cycles? Is the cost of this connection taken into account in the economics?

Agreed - you have to go through some serious machinations to get semiconductors to live at high temps. It took two years to get to a stable 180-200f and MTBF is still a question! As far as bandwidth is concerned, it really depends on the proximity and the load itself. Meshnets and the proliferation of fiber/high speed wireless should solve for this. We're not building for today's network, we're building for tomorrow's network.

There are some pretty interesting developments happening around high speed, peer to peer networks. http://www.researchgate.net/publication/228550567_Reducing_Bandwidth_Utilization_in_Peer-to-Peer_Networks -

Most projects of this type are based on some fact, so the idea of taking something that is considered waste and turning it into something useful is valid. What most of these inventors fail to notice is that product development always tries to be as efficient as possible. 40 years ago you could bake a loaf of bread in the "computer room"; now you need a whole bunch of computers to make the room just "pretty warm". I'm certain that one of the main goals of the engineers who design new computer circuits is to minimize the production of heat.

My point is that this "free energy" won't last forever; the only one who will benefit from the "investment" will be the "inventor", who will get "free" money.

A very good point, as chips get smaller and faster they generally produce less heat! But... they are smaller and faster so you can fit many more of them into the same space and typically generate the same heat and far more compute. Computers are pretty efficient heaters so the same 2-4kW of compute will likely still produce 2-4kW of heat, less the data shipped out of the computer back across the network as a result of the computation. This means the free energy will last until computers stop making heat, right?

As far as the 'Inventor who will get free money', that is rarely the case. The pioneers get the arrows and the settlers get the land. There is usually little monetary upside to pioneering. -

"Typical home computers use only 100-150W, so why would you bother?" The 1st unit runs at 1600W, 150W really wouldn't do much.

How many CPUs is that? Or, are you relying on high-power GPUs?

Quote

"Do you really think people will buy 1kW computers and sell off cycles just to make this work?" They already do. Check out Cloud & Heat https://www.cloudandheat.com , Qarnot Computing http://www.qarnot-computing.com/ and Nerdalize http://www.nerdalize.com/.

Those are in Europe - how can you make it work in the US, where we have hot summers, and slow Internet?

Quote

"How do you keep the HDDs and SSDs from cooking?" We run 2 separate loops on the 1st prototype and have a stratified cooling layout that keeps the memory alive on V2.

Sounds like handwaving... what is the temperature of the low temperature loop?

Quote

"If desiccant refrigeration actually works, why don't people use it now, with cheaper heat sources?" They do http://www.nrel.gov/docs/fy11osti/49722.pdf.

OK, so they write reports about it, but why don't they actually use it?

1) We're using GPUs today

2) look up the thread - there are solutions for summer and we would have no economic reason to target regions with slow internet. The best distributed compute loads would be located in areas with high speed internet.

3) It's currently a separate loop, we can run it at whatever temperature we want. The real problem will be how to keep the next prototype's memory cool when it is all submerged in the same case and coolant - that will take some interesting engineering. We're already exploring ways of stratifying the coolant and have some good ideas, we'll see how they work at high temps over the next year.

4) There are a handful of companies licensed and building the tech now. It's pretty irrelevant, chillers run on the same temps. DEVAP is just far, far more efficient and an interesting application for DoE funding. -

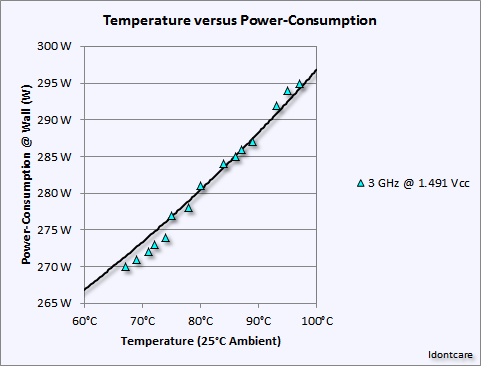

As die temperature increases, it's common for CPUs to become increasingly less efficient, requiring more power to do the same operations. Do the benefits of this system outweigh the extra power consumption?

The increase in this example, for instance, is larger than the power consumed by a more powerful cooler so one could actually be wasting more energy by using less cooling power. Which isn't directly related to this particular use, but you're still drawing more power than you would otherwise, which is not "free".